Gemma 4 Goes Truly Open Source — Why Google Switched to Apache 2.0

Google released 4 Gemma 4 models under Apache 2.0 — no MAU limits, full commercial freedom. The 31B Dense scores 89.2% on AIME and 84.3% on GPQA Diamond. The 26B MoE nearly matches it with only 3.8B active parameters. Here is the full breakdown.

April 4, 2026 · AI News

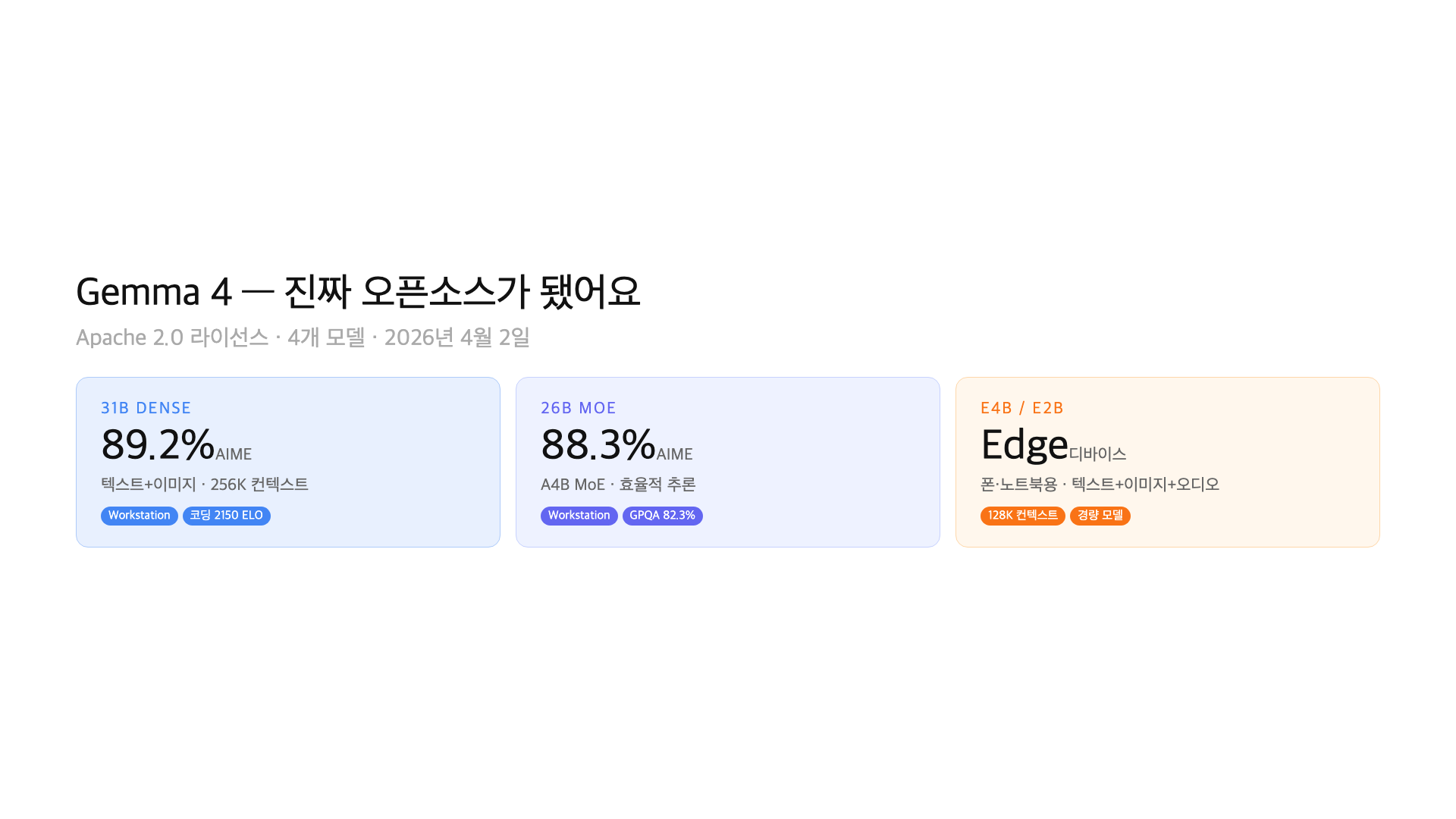

On April 2, Google released four Gemma 4 models: 31B Dense, 26B MoE, E4B, and E2B. Server-grade to laptop-grade, all at once. But the bigger story is not the models themselves. It is the license. Gemma 4 ships under Apache 2.0.

Previous Gemma versions used a custom “Gemma Terms of Use” license. It had monthly active user (MAU) caps and complex usage conditions. Apache 2.0 has none of that. Full commercial use, modification, redistribution — all free. Google just removed every friction point that kept enterprises from adopting Gemma.

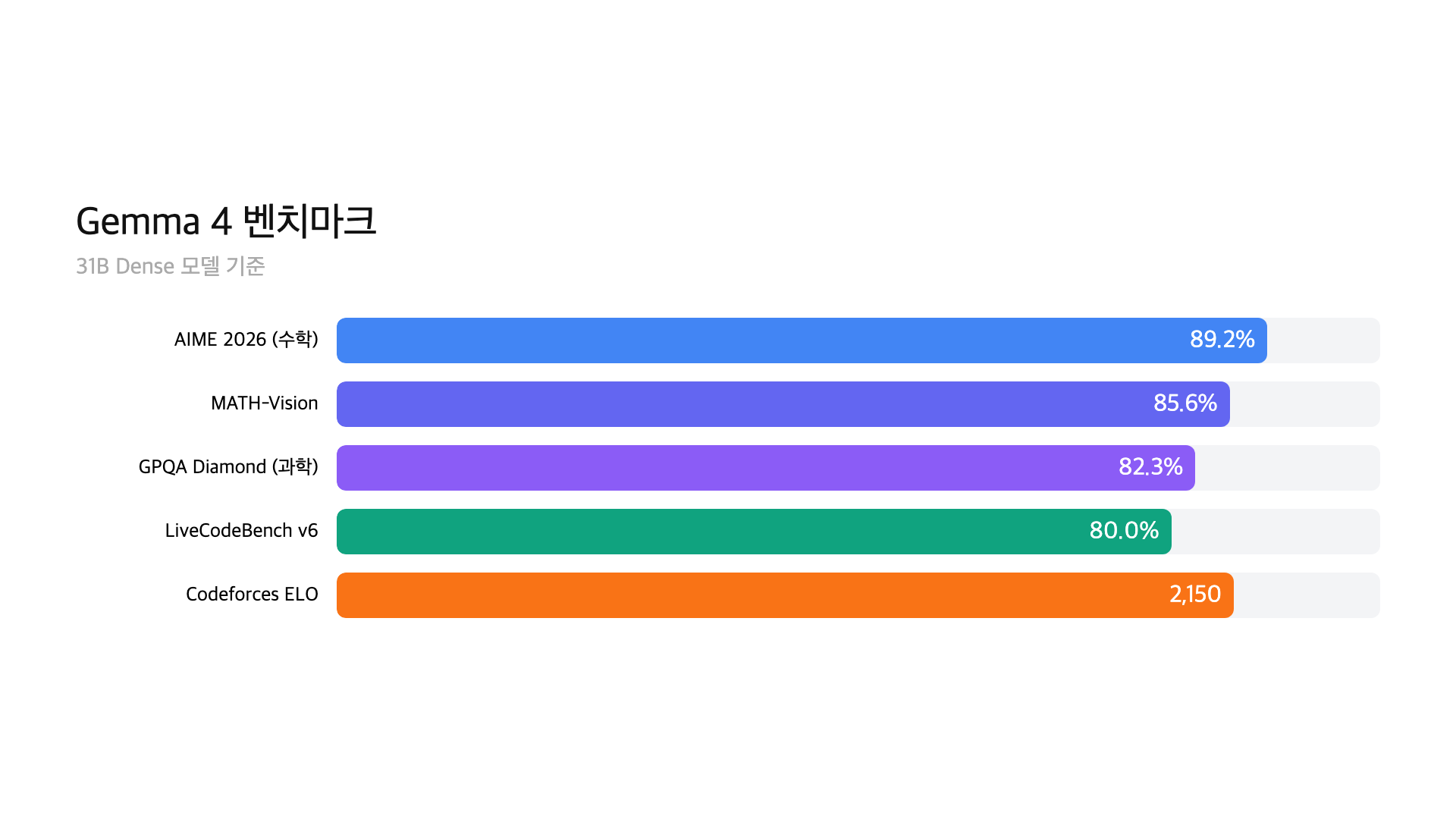

Performance jumped too. The 31B model hits 89.2% on AIME and 84.3% on GPQA Diamond. By Google’s self-reported figures, that puts it at the top of open-source models in its size class. Here is the full breakdown.

– 4 models: 31B Dense + 26B MoE (server/workstation) + E4B + E2B (edge/laptop)

– License: Apache 2.0 (no MAU limits, full commercial freedom)

– 31B benchmarks: AIME 89.2%, LiveCodeBench 80.0%, GPQA Diamond 84.3%

– Available now on HuggingFace, Ollama, and Google AI Studio

Why Apache 2.0 changes everything

Gemma 1 through 3 used “Gemma Terms of Use” — a custom license that looked open-source but was not quite. It had MAU (monthly active user) caps. Certain use cases were restricted. Every enterprise had to run it through legal review before deployment.

Apache 2.0 is an OSI-approved open-source license. No commercial restrictions. No MAU limits. You can modify it, redistribute it, build a product on top of it, and charge money for it. It is more permissive than Meta’s Llama License.

Why did Google switch? They were losing the open-source race to Llama (Meta), Qwen (Alibaba), and Mistral. The custom license was a dealbreaker for enterprise adoption. When a developer hears “needs legal review,” they pick a different model. Apache 2.0 removes that barrier entirely.

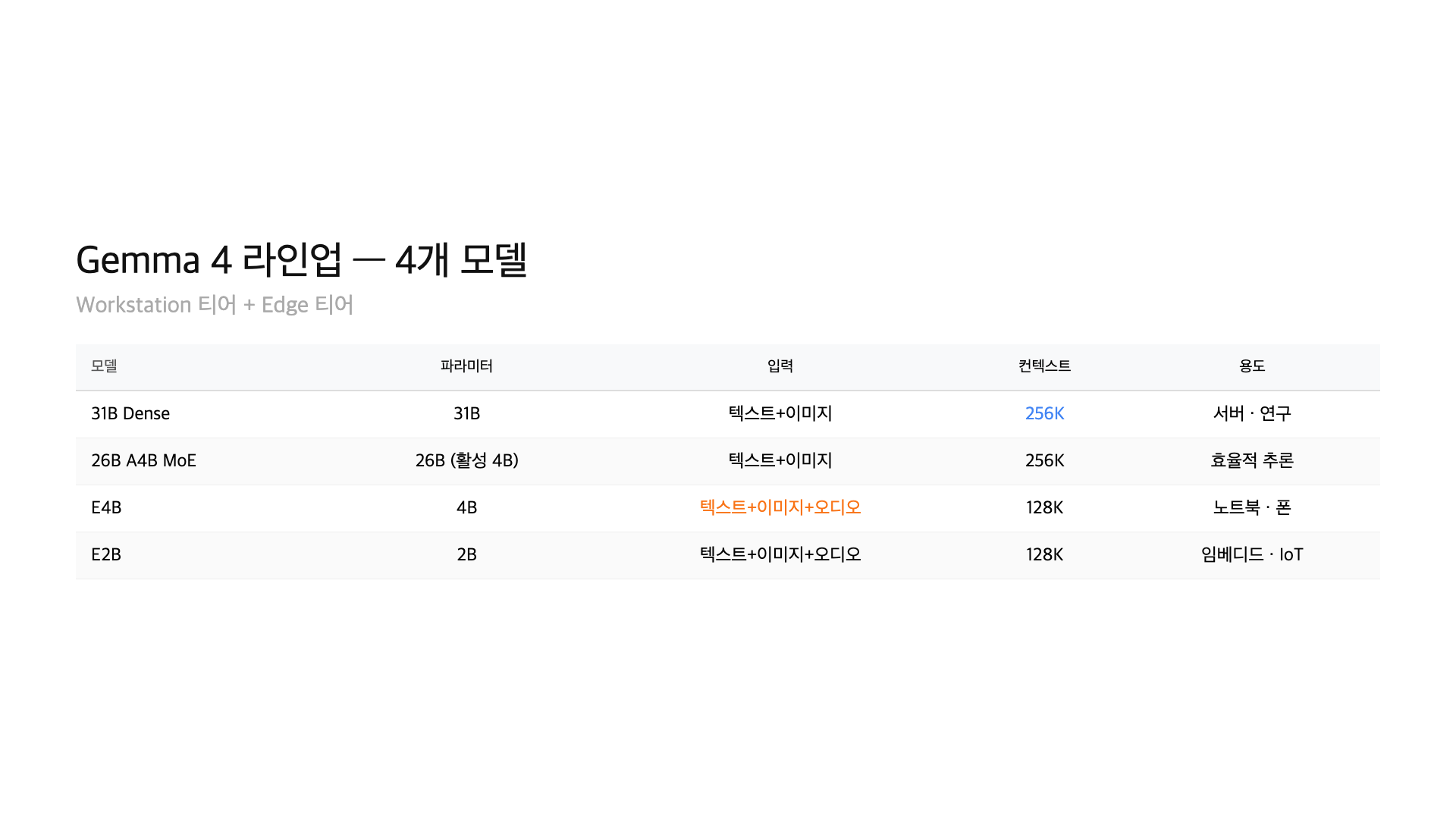

All 4 models at a glance

| Model | Parameters | Architecture | Context | Modalities | Target |
|---|---|---|---|---|---|
| 31B Dense | 31B | Dense | 256K | Text + Image | Server |
| 26B MoE | 26B (3.8B active) | MoE (A4B) | 256K | Text + Image | Workstation |
| E4B | 4B | Dense | 128K | Text + Image + Audio | Edge / Laptop |
| E2B | 2B | Dense | 128K | Text + Image + Audio | Edge / Mobile |

An interesting detail: the large models (31B, 26B) handle text and image. The small models (E4B, E2B) add audio support. Google wants edge devices to process voice input locally — no cloud round-trip needed.

All models are derived from Gemini 3 research. Google distilled its proprietary research into lighter open-source packages.

31B Dense — the open-source heavyweight

The 31B Dense is the largest model in the Gemma 4 lineup. It supports a 256K context window and accepts multimodal input (text + image).

The benchmark numbers stand out. AIME (math competition problems): 89.2%. LiveCodeBench (coding): 80.0%. GPQA Diamond (graduate-level science): 84.3%. Codeforces Elo: 2150. By Google’s own measurements, it leads the open-source models of comparable size covered in this article.

To put that in perspective, this is approaching GPT-4 level from a year ago. A free, locally-runnable 31B model reaching this performance is the 2026 reality. For companies wanting to run AI without API costs, this is a serious option.

26B MoE — only 3.8B active parameters

MoE stands for Mixture of Experts. Imagine an office with 12 specialists. When a question comes in, only the 2-3 relevant experts stand up to answer. The rest stay seated. Total headcount is 12, but the active workforce per question is 2-3.

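The 26B MoE applies the same idea. Total parameters: 26B. Active parameters per token: 3.8B. Because only a fraction of the weights take part in each forward pass, generation is faster and compute costs are lower, while performance stays close to the dense model. All 26B parameters still have to be loaded, which is why the Ollama download is 18GB, only slightly smaller than the 20GB of the 31B Dense.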

How close? AIME: 88.3%. GPQA Diamond: 82.3%. That is roughly 1 to 2 percentage points behind the 31B Dense. If your workstation has limited memory, the 26B MoE gives you the best performance-per-GB ratio in the Gemma 4 lineup.
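The office analogy can be sketched as a toy top-k router. This is a minimal illustration under stated assumptions, not Gemma 4’s actual routing code: the expert count of 12 comes from the analogy, `TOP_K = 2` is an arbitrary choice, and the "experts" are stand-in functions rather than neural network layers.

```python
import math
import random

NUM_EXPERTS = 12  # taken from the office analogy, not Gemma 4's real expert count
TOP_K = 2         # experts activated per token (illustrative assumption)


def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_scores, top_k=TOP_K):
    """Pick the top_k highest-scoring experts and normalize their weights."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    chosen = ranked[:top_k]
    weights = softmax([router_scores[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_layer(x, experts, router_scores, top_k=TOP_K):
    """Weighted sum of the outputs of only the selected experts."""
    return sum(w * experts[i](x) for i, w in route(router_scores, top_k))


# Toy experts: each one simply scales its input by a different factor.
experts = [lambda x, k=k: x * (k + 1) for k in range(NUM_EXPERTS)]

if __name__ == "__main__":
    rng = random.Random(0)
    scores = [rng.gauss(0, 1) for _ in range(NUM_EXPERTS)]
    picked = route(scores)
    print("experts used:", [i for i, _ in picked], "of", NUM_EXPERTS)
    print("layer output:", moe_layer(1.0, experts, scores))
    # Active share implied by the article's figures: 3.8B of 26B.
    print(f"active share of parameters: {3.8 / 26:.1%}")
```

The unselected experts are never called, which is where the compute saving comes from. They still exist in memory, though, so the full parameter count determines how much RAM the model needs.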

E4B and E2B — AI that runs on a laptop

E4B and E2B are edge models — designed for laptops, tablets, and phones. The “E” stands for Edge.

Their standout feature is triple modality: text, image, and audio. The larger models only handle text and image. The smaller ones add audio processing. Google wants on-device voice commands to work locally without sending data to the cloud.

E4B is about 9.6GB on Ollama. E2B is about 7.2GB. A 16GB Mac runs E4B comfortably. E2B works on 8GB Macs. Context window is 128K — shorter than the large models’ 256K, but sufficient for most use cases.

Benchmark comparison — how does it stack up?

| Benchmark | Gemma 4 31B | Gemma 4 26B MoE | Qwen 3.5 32B | Llama 4 Scout |
|---|---|---|---|---|
| AIME 2025 | 89.2% | 88.3% | 85.1% | 78.0% |
| LiveCodeBench | 80.0% | 76.5% | 74.2% | 70.8% |
| GPQA Diamond | 84.3% | 82.3% | 79.8% | 74.5% |
| Codeforces Elo | 2150 | 2020 | 1950 | 1800 |

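31B Dense leads every benchmark. 26B MoE trails by 0.9 to 3.5 percentage points on the three percentage-scored benchmarks and by 130 Elo points on Codeforces, which is remarkable given that only 3.8B parameters are active per token. Both beat Qwen 3.5 32B and are well ahead of Llama 4 Scout.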
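For readers who want to check the gaps themselves, the arithmetic can be reproduced with a few lines of Python. The numbers are copied from the table above (Google’s self-reported figures); the `gap` helper and the dictionary layout are just one convenient way to organize them.

```python
# Scores copied from the comparison table (self-reported by Google).
SCORES = {
    "AIME 2025": {"31B Dense": 89.2, "26B MoE": 88.3,
                  "Qwen 3.5 32B": 85.1, "Llama 4 Scout": 78.0},
    "LiveCodeBench": {"31B Dense": 80.0, "26B MoE": 76.5,
                      "Qwen 3.5 32B": 74.2, "Llama 4 Scout": 70.8},
    "GPQA Diamond": {"31B Dense": 84.3, "26B MoE": 82.3,
                     "Qwen 3.5 32B": 79.8, "Llama 4 Scout": 74.5},
    "Codeforces Elo": {"31B Dense": 2150, "26B MoE": 2020,
                       "Qwen 3.5 32B": 1950, "Llama 4 Scout": 1800},
}


def gap(benchmark, leader="31B Dense", other="26B MoE"):
    """Difference between two models on one benchmark, rounded to 0.1."""
    row = SCORES[benchmark]
    return round(row[leader] - row[other], 1)


if __name__ == "__main__":
    for name in SCORES:
        unit = "Elo points" if "Elo" in name else "percentage points"
        print(f"{name}: 31B Dense leads 26B MoE by {gap(name)} {unit}")
```

Passing a different `other` argument, for example `gap("AIME 2025", other="Qwen 3.5 32B")`, gives the margin over the competing models in the same way.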

Keep in mind: this is an open-source comparison. Large proprietary models like Claude Opus 4.6 and GPT-5.4 still outperform them. But a free, locally-runnable 31B model hitting these numbers was unthinkable a year ago.

Where to use Gemma 4

Three platforms have immediate support. HuggingFace for downloading model weights. Ollama for local execution with a single command. Google AI Studio for browser-based testing.

```bash
ollama run gemma4       # E4B (default, 9.6GB)
ollama run gemma4:e2b   # E2B (7.2GB)
ollama run gemma4:26b   # 26B MoE (18GB)
ollama run gemma4:31b   # 31B Dense (20GB)
```
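Once a model has been pulled, it can also be called from code through Ollama’s local HTTP API. The sketch below assumes the Ollama server is running on its default port (11434) and reuses the model tags from the commands above. The helper names `build_request` and `generate` are illustrative, not part of any library.

```python
import json
import urllib.request

# Default endpoint of a locally running Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="gemma4"):
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="gemma4", timeout=120):
    """Send the prompt and return the generated text from the JSON reply."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `generate("Explain Mixture of Experts in one sentence.", model="gemma4:e2b")` should return the model’s answer as a string. Setting `"stream": False` keeps the example simple by asking for one complete JSON reply instead of a stream of chunks.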

Apache 2.0 means no legal review needed for enterprise deployment. The previous Gemma Terms of Use required checking MAU limits and commercial eligibility. That friction is gone.

Fine-tuning is also possible. LoRA-based lightweight fine-tuning is the common approach, and since it is Apache 2.0, you can redistribute fine-tuned versions freely.
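To see why LoRA counts as lightweight, it helps to compare how many weights it trains against full fine-tuning of the same matrix. LoRA freezes the original weight matrix and learns two small low-rank factors instead. The layer shape and rank below are hypothetical values chosen for illustration, not Gemma 4’s real dimensions.

```python
def full_params(d_out, d_in):
    """Weights updated when fine-tuning one d_out x d_in matrix directly."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Weights in the low-rank factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


if __name__ == "__main__":
    # Hypothetical layer shape and rank, chosen only for illustration.
    d_out, d_in, rank = 4096, 4096, 8
    full = full_params(d_out, d_in)
    lora = lora_params(d_out, d_in, rank)
    print(f"full fine-tune: {full:,} weights")
    print(f"LoRA (rank {rank}): {lora:,} weights ({lora / full:.2%} of full)")
```

Because the trainable factors are so small, the resulting adapter is cheap to train and to share, and under Apache 2.0 it can be redistributed along with the base weights.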

FAQ

Q. What is the relationship between Gemma 4 and Gemini 3?

Gemma 4 is derived from Gemini 3 research. Gemini 3 is Google’s proprietary API model. Gemma 4 is a lighter, open-source version that anyone can run locally or deploy commercially.

Q. Why does the Apache 2.0 license matter?

No commercial restrictions. The previous Gemma Terms of Use had MAU caps and required legal review. Apache 2.0 allows unrestricted commercial use, modification, and redistribution. It is more permissive than the Llama license.

Q. What is a MoE model?

Mixture of Experts. It is like an office with 12 specialists where only 2-3 answer each question. Total parameters: 26B. Active per token: 3.8B. That means less compute and faster generation, with nearly the same performance as the 31B Dense. The full 26B parameters still need to be loaded into memory.

Q. Can I run Gemma 4 on my Mac?

Yes, via Ollama. E2B needs 8GB RAM, E4B needs 16GB, 26B MoE needs 18GB+, and 31B Dense needs 64GB+. Apple Silicon MLX acceleration is enabled automatically since Ollama v0.19.0.
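The RAM guidance in this answer can be turned into a small lookup. The thresholds are the figures quoted above and the tags are the Ollama tags used earlier in this article. The function names are illustrative, and real-world headroom will depend on what else is running on the machine.

```python
# Minimum RAM in GB per Ollama tag, taken from the figures quoted in this FAQ.
MIN_RAM_GB = {
    "gemma4:e2b": 8,    # E2B
    "gemma4": 16,       # E4B (default tag)
    "gemma4:26b": 18,   # 26B MoE
    "gemma4:31b": 64,   # 31B Dense
}


def runnable_models(ram_gb):
    """Return every tag whose stated minimum fits in the given RAM."""
    return [tag for tag, need in MIN_RAM_GB.items() if ram_gb >= need]


def largest_model(ram_gb):
    """Return the most demanding tag that still fits, or None if none do."""
    fits = runnable_models(ram_gb)
    return fits[-1] if fits else None


if __name__ == "__main__":
    for ram in (8, 16, 32, 64):
        print(f"{ram}GB Mac -> {largest_model(ram)}")
```

The dictionary is ordered from smallest to largest requirement, so the last entry that fits is the largest model the machine can host according to these figures.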

Q. Is Gemma 4 better than GPT-5 or Claude?

It tops the open-source benchmarks at its size class. But large proprietary models like GPT-5.4 and Claude Opus 4.6 still outperform it. The key advantage is cost — Gemma 4 is free to run with no API fees.

Wrap-up

Gemma 4 has two stories. First, Apache 2.0 makes it genuinely open-source — no MAU limits, no legal review needed, full commercial freedom. That is a real shift in Google’s open-source strategy. Second, the 26B MoE model delivers near-31B performance with only 3.8B active parameters. You can run a competitive AI model on a Mac.

The open-source AI landscape keeps expanding. Llama, Qwen, Mistral, and now Gemma — developers have real choices across model sizes and use cases. If you want to try Gemma 4 on your Mac, check out our Ollama setup tutorial.

This article was written on April 4, 2026, based on Google’s official announcement (April 2, 2026) and HuggingFace model cards. Benchmark figures are from Google’s self-reported measurements and may differ from independent evaluations.
