April 2026 AI Model Rankings — Claude vs GPT vs Gemini, Who’s #1?

LMSYS Chatbot Arena rankings for April 2026. Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro are in a statistical dead heat around 1500 Elo. Claude leads coding at 1561 Elo. Open-source models are closing the gap fast. Updated monthly.

April 4, 2026 · AI News · Updated monthly

“What is the best AI model right now?” I get this question at least once a week. The problem is the answer keeps changing. In February 2026, Gemini 3 Pro was number one. In April, Claude Opus 4.6 and GPT-5.4 are in a statistical tie. Rankings shift month to month.

That is why I built this monthly AI model ranking table. It is based on LMSYS Chatbot Arena and organized into overall, coding, and open-source categories. Beyond benchmark scores, I also cover which model fits which use case.

LMSYS Chatbot Arena is run by UC Berkeley researchers. Users compare two model responses in a blind test and pick the winner. Results are scored using an Elo system, like chess ratings. Because it measures real user preferences rather than synthetic benchmarks, it is the most trusted ranking in the industry.

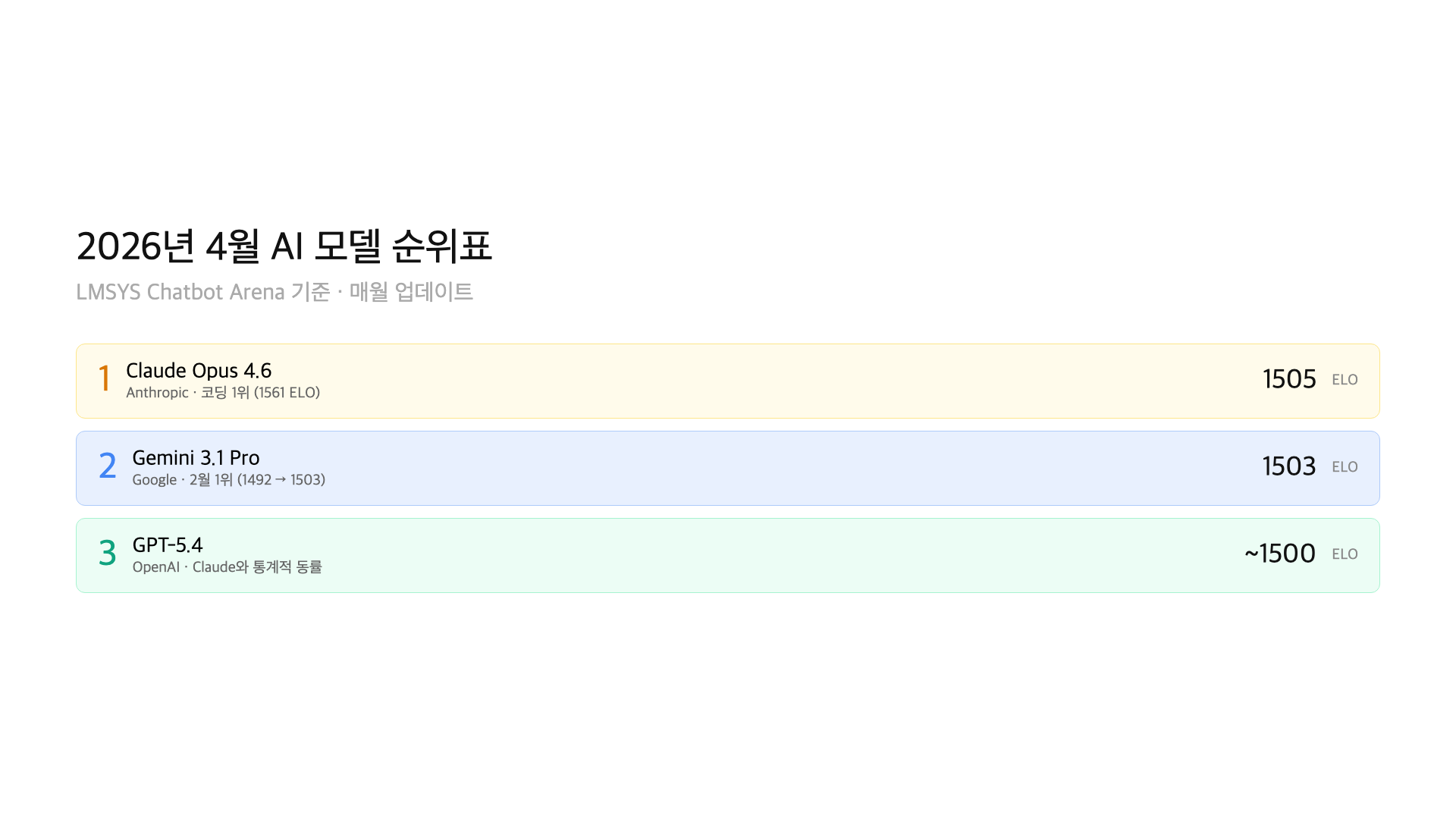

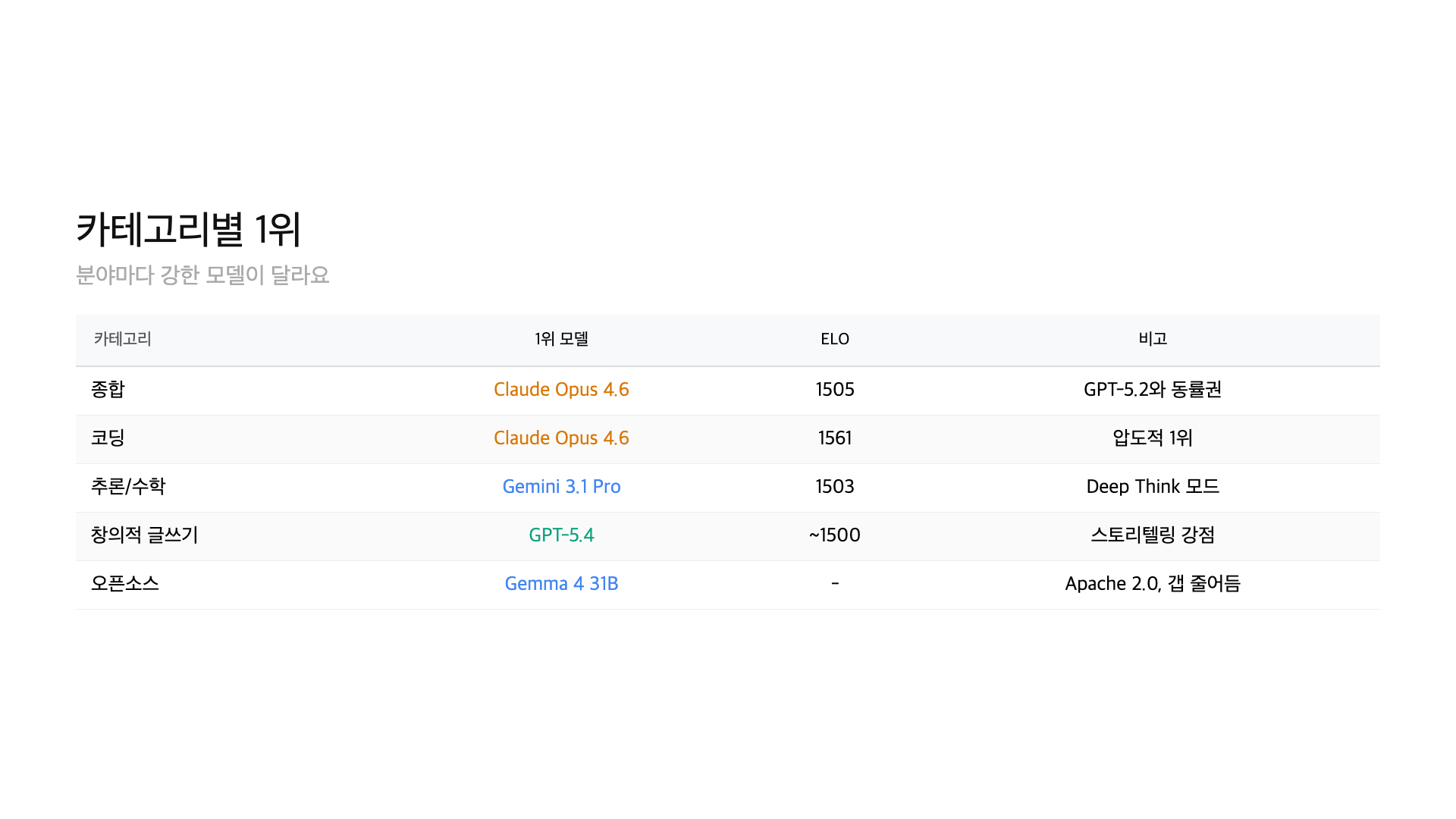

– Overall: Claude Opus 4.6 (~1505 Elo), Gemini 3.1 Pro (~1503), GPT-5.4 (~1500) — statistical dead heat

– Coding: Claude Opus 4.6 leads at 1561 Elo, SWE-bench 80.8%

– Science (GPQA Diamond): Gemini 3.1 Pro 94.3%, Claude Opus 91.3%

– Math (USAMO 2026): GPT-5.4 at 95.24%

– Open-source: DeepSeek V4, Llama 4, Qwen 3.5, Gemma 4 closing fast

How to read the rankings — what is Elo?

Elo is a rating system from chess. Two players (models) compete. The winner’s score goes up, the loser’s goes down. In Chatbot Arena, users blindly compare two model responses and pick the better one. Their votes feed the Elo calculation.
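The update described above can be sketched in a few lines. This is the textbook Elo formula, not necessarily the exact statistical fit the live leaderboard uses; the function names and the K-factor of 32 are assumptions chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def elo_update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one head-to-head vote.

    k is the step size; 32 is a common chess default and an assumption here.
    """
    exp_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - exp_a)
    # Whatever the winner gains, the loser gives up.
    return rating_a + delta, rating_b - delta


# Two equally rated models: the winner of one vote gains 16, the loser drops 16.
new_a, new_b = elo_update(1500.0, 1500.0, a_won=True)
print(new_a, new_b)  # 1516.0 1484.0
```

An upset moves ratings more than an expected result, because the step is proportional to how surprising the outcome was.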

Here is how to interpret the numbers. Under 10 points: statistically insignificant — treat them as equal. 30+ points: noticeable quality gap. 50+ points: clear winner.
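To make those thresholds concrete, here is a minimal sketch that converts an Elo gap into an expected head-to-head win rate using the standard Elo logistic curve, and labels the gap with the bands above. The function names are hypothetical, and the "small gap" label for the 10 to 29 point range is my assumption, since the bands above do not name it.

```python
def win_probability(elo_gap: float) -> float:
    """Expected win rate of the higher-rated model, standard Elo curve."""
    return 1.0 / (1.0 + 10 ** (-elo_gap / 400.0))


def describe_gap(elo_gap: float) -> str:
    """Label an Elo gap using the thresholds from this article."""
    gap = abs(elo_gap)
    if gap < 10:
        return "statistical tie"
    if gap < 30:
        return "small gap"  # assumption: the article does not name this band
    if gap < 50:
        return "noticeable gap"
    return "clear winner"


for gap in (5, 19, 30, 50):
    print(f"{gap:>3} points: {describe_gap(gap)}, "
          f"expected win rate {win_probability(gap):.1%}")
```

Under this curve even a 50-point gap works out to roughly a 57% expected win rate, which is why small differences should not be over-read.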

One caveat: Elo scores reflect “average user preference.” In specific domains like coding, creative writing, math, or multilingual, rankings can look completely different. That is why you should check category-specific rankings, not just the overall number.

Overall Top 10

Rankings change frequently. Check lmarena.ai for the latest numbers.

| Rank | Model | Elo | Developer | Type |
|---|---|---|---|---|
| 1 | Claude Opus 4.6 | ~1505 | Anthropic | Proprietary |
| 2 | Gemini 3.1 Pro | ~1503 | Google | Proprietary |
| 3 | GPT-5.4 | ~1500 | OpenAI | Proprietary |
| 4 | GPT-5.2 | 1495 | OpenAI | Proprietary |
| 5 | Grok 4.20 | 1488 | xAI | Proprietary |
| 6 | Claude Sonnet 4.6 | 1482 | Anthropic | Proprietary |
| 7 | DeepSeek V4 | 1475 | DeepSeek | Open Source |
| 8 | Gemini 3 Pro | 1470 | Google | Proprietary |
| 9 | Llama 4 405B | 1462 | Meta | Open Source |
| 10 | Qwen 3.5 72B | 1455 | Alibaba | Open Source |

The top 3 are within 5 points of each other. That is a statistical dead heat — Claude Opus 4.6, Gemini 3.1 Pro, and GPT-5.4 are functionally equivalent in overall quality. On any given day, a remeasurement could reshuffle these positions.

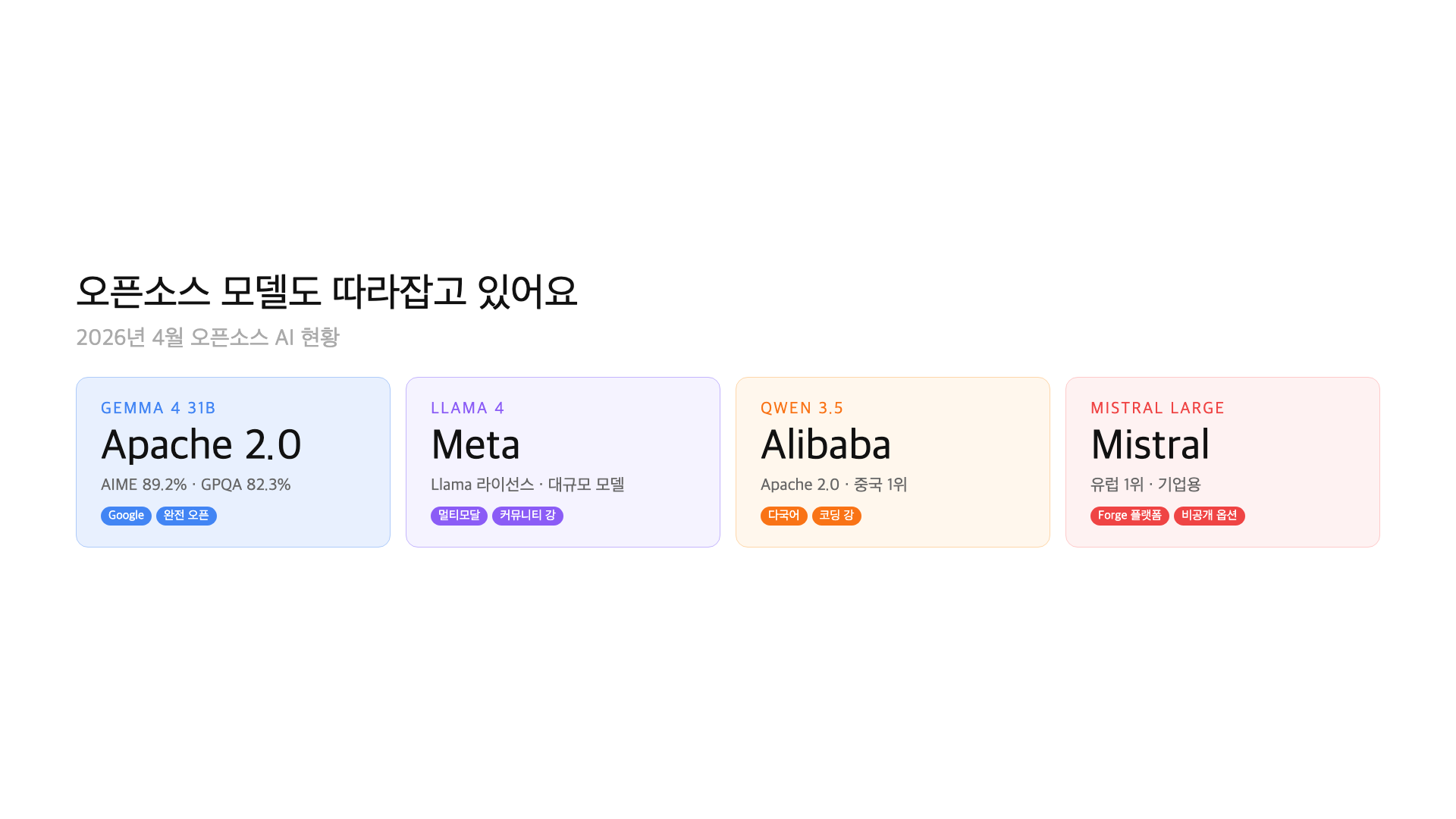

The standout is number 7, DeepSeek V4. An open-source model within 30 points of the top. A year ago, open-source trailed by 100+ points. That gap is closing fast.

Coding Top 10

| Rank | Model | Elo (Coding) | Developer |
|---|---|---|---|
| 1 | Claude Opus 4.6 | 1561 | Anthropic |
| 2 | GPT-5.4 | 1542 | OpenAI |
| 3 | Gemini 3.1 Pro | 1528 | Google |
| 4 | Claude Sonnet 4.6 | 1520 | Anthropic |
| 5 | DeepSeek V4 | 1510 | DeepSeek |
| 6 | Grok 4.20 | 1498 | xAI |
| 7 | GPT-5.2 | 1492 | OpenAI |
| 8 | Qwen 3.5 72B | 1478 | Alibaba |
| 9 | Llama 4 405B | 1465 | Meta |
| 10 | Mistral Large 4 | 1452 | Mistral |

In coding, Claude Opus 4.6 holds the lead. Its 19-point gap over GPT-5.4 is well above the 10-point noise threshold, though still short of the 30-point mark where differences become clearly noticeable. Claude excels at legacy code refactoring and complex debugging, and it scores 80.8% on SWE-bench.

On the open-source side, DeepSeek V4 at number 5 is remarkable. Qwen 3.5 at number 8 is also strong. If you want to avoid API costs for coding assistance, these are viable options.

Open-source model rankings

| Rank | Model | Elo (Overall) | Parameters | License |
|---|---|---|---|---|
| 1 | DeepSeek V4 | 1475 | MoE | MIT |
| 2 | Llama 4 405B | 1462 | 405B | Llama License |
| 3 | Qwen 3.5 72B | 1455 | 72B | Apache 2.0 |
| 4 | Gemma 4 31B | 1438 | 31B | Apache 2.0 |
| 5 | Mistral Small 4 | 1425 | 22B | Apache 2.0 |

The gap between open-source number 1 (DeepSeek V4) and overall number 1 (Claude Opus 4.6) is 30 points. A year ago this gap exceeded 100. Open-source is catching up, and the numbers prove it.
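The arithmetic behind that 30-point figure is easy to reproduce for every open-source model in the table. This is a small sketch using the scores listed in this article (the leader's score is approximate); the variable names are my own.

```python
# Overall leader, approximate score from this article's Top 10 table.
LEADER_ELO = 1505  # Claude Opus 4.6

# Open-source models and their overall Elo scores from the table above.
open_models = {
    "DeepSeek V4": 1475,
    "Llama 4 405B": 1462,
    "Qwen 3.5 72B": 1455,
    "Gemma 4 31B": 1438,
    "Mistral Small 4": 1425,
}

# Points each open-source model trails the overall leader by.
gaps = {name: LEADER_ELO - elo for name, elo in open_models.items()}

for name, gap in gaps.items():
    print(f"{name}: {gap} points behind the leader")
```

Rerunning the same subtraction each month is a quick way to track whether the gap keeps shrinking.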

For locally runnable models, Gemma 4 and Mistral Small 4 stand out. Gemma 4 E4B runs on a laptop. Mistral Small 4 at 22B offers strong instruction-following in a compact package.

By use case — which model should you pick?

Coding and development: Claude Opus 4.6 is number 1. It excels at understanding large codebases, refactoring, and debugging. If cost matters, Claude Sonnet 4.6 offers strong performance per dollar.

General chat and writing: Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro are all competitive. Pick based on personal preference.

Math: GPT-5.4 leads with 95.24% on USAMO 2026. Gemini 3.1 Pro scores 74%. For math-heavy workloads, GPT is the clear choice.

Science (GPQA Diamond): Gemini 3.1 Pro leads at 94.3%. Claude Opus follows at 91.3%. Both are strong; Gemini has the edge in graduate-level science questions.

Multilingual and translation: Gemini 3.1 Pro leads. Google’s multilingual training data gives it an advantage across many language pairs.

Cost-sensitive / local: Gemma 4 (E4B/31B), Qwen 3.5, Llama 4. No API costs, runs on your own hardware. Good for privacy-sensitive applications.

First half of 2026 — what changed?

The biggest shift: there is no dominant leader anymore. In 2025, GPT-4 held number 1 for months. In 2026, Claude, GPT, and Gemini take turns at the top. Gemini 3 Pro was number 1 in February at 1492 Elo. By April, Claude Opus 4.6 leads at around 1505.

The second shift: open-source is catching up. DeepSeek V4, Llama 4, and Qwen 3.5 have all reached the level of last year’s top proprietary models. Smaller models are improving too. Gemma 4 E4B is a practical model that runs on a laptop.

Third: specialization matters more than ever. Even when overall scores are similar, models differ sharply by domain. “Best overall” matters less than “best for my specific use case.”

Benchmark limitations — rankings are not everything

LMSYS Chatbot Arena is the most trustworthy benchmark available, but it has limitations. Testing skews toward English. Single-turn comparisons do not capture multi-turn conversation or agent capabilities well.

There is also the benchmark optimization problem. Models can be tuned to score well on benchmarks without proportional real-world improvement. Treat these rankings as reference points, not absolute truth. The best approach is to test with your specific use case.
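One way to test with your own use case is a small blind comparison in the spirit of Chatbot Arena. The sketch below is only an outline under stated assumptions: `blind_compare`, the two stub models, and the length-based judge are all hypothetical placeholders. In practice you would swap the stubs for real model calls and have a person do the judging.

```python
import random
from collections import Counter
from typing import Callable, Iterable


def blind_compare(
    prompts: Iterable[str],
    model_a: Callable[[str], str],
    model_b: Callable[[str], str],
    judge: Callable[[str, str, str], int],
    seed: int = 0,
) -> Counter:
    """Tally wins per model; the judge never sees which model wrote what.

    judge(prompt, first, second) returns 0 if the first answer is better,
    1 if the second is better.
    """
    rng = random.Random(seed)
    tally: Counter = Counter()
    for prompt in prompts:
        answers = [("A", model_a(prompt)), ("B", model_b(prompt))]
        rng.shuffle(answers)  # randomize order so position gives no hint
        picked = judge(prompt, answers[0][1], answers[1][1])
        tally[answers[picked][0]] += 1
    return tally


# Hypothetical stand-ins: replace with real model calls and a human judge.
def stub_model_a(prompt: str) -> str:
    return prompt + " (detailed answer)"


def stub_model_b(prompt: str) -> str:
    return prompt


def stub_judge(prompt: str, first: str, second: str) -> int:
    return 0 if len(first) >= len(second) else 1  # toy rule: longer wins


tally = blind_compare(
    ["refactor this function", "explain this stack trace", "write a unit test"],
    stub_model_a,
    stub_model_b,
    stub_judge,
)
print(dict(tally))
```

Shuffling the answer order per prompt matters: without it, a judge who favors the first response would bias the tally toward one model.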

API pricing and response speed matter too. When models are performance-equivalent, cost and latency become the deciding factors. That is a topic for a separate comparison.

FAQ

Q. What is LMSYS Chatbot Arena?

A benchmark platform by UC Berkeley researchers. Users compare two AI model responses in a blind test and pick the winner. Results are scored using an Elo system. It reflects real user preferences rather than synthetic benchmarks.

Q. What is the best AI model right now?

As of April 2026, Claude Opus 4.6, Gemini 3.1 Pro, and GPT-5.4 are in a statistical tie. There is no single “best” — it depends on your use case. Claude leads coding, Gemini leads science and multilingual, GPT-5.4 leads math.

Q. Can open-source models compete with proprietary ones?

The gap is shrinking fast. DeepSeek V4 is within 30 Elo points of the top. A year ago the gap was 100+. Locally runnable models like Gemma 4 and Qwen 3.5 are becoming viable for production use.

Q. Is a 10-point Elo difference significant?

No. Under 10 points is statistically insignificant — treat those models as tied. 30+ points is where real differences begin. 50+ points means a clear winner.

Q. Do these rankings change monthly?

Yes, and often faster than that: scores shift nearly weekly. New model releases, updates, and accumulated votes cause fluctuations. This article is updated monthly. Always check the publication date.

Wrap-up

The AI model market in April 2026 is a three-way race. Claude, GPT, and Gemini trade the lead monthly. Open-source models are climbing fast from below. The question has shifted from “which is the best?” to “which is best for my use case?”

This ranking table will be updated monthly. New model releases and major shifts will be reflected as they happen. Bookmark this page for a quick monthly check.

This article was written on April 4, 2026, based on LMSYS Chatbot Arena (lmarena.ai) data. Rankings change frequently — check the latest numbers directly. Updated monthly.
