Anthropic Accidentally Leaked Claude Mythos — The “Most Powerful” AI Ever

March 30, 2026 · Trend

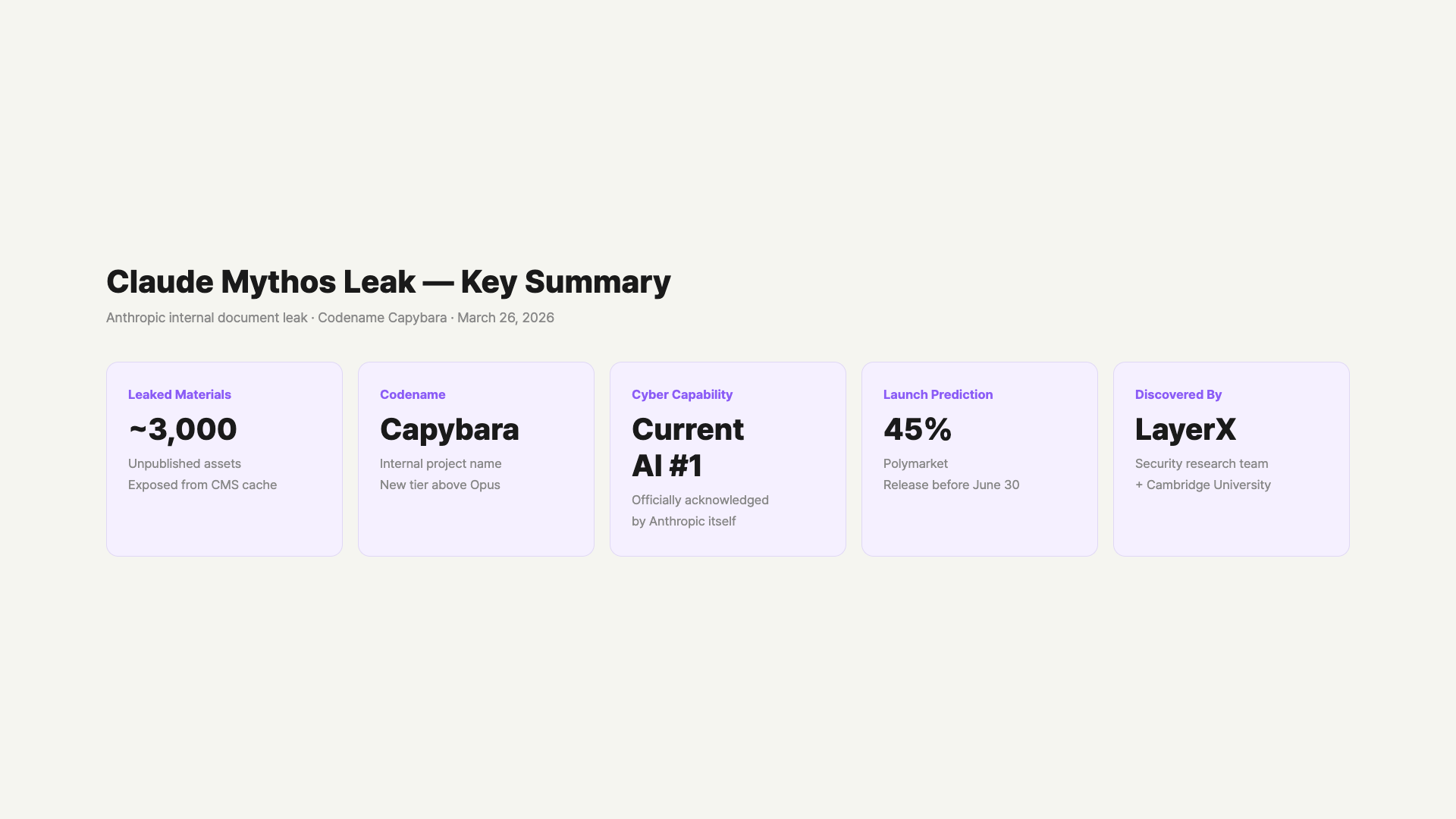

Last week saw the most talked-about incident in the AI industry. Anthropic accidentally exposed information about a new model that hadn’t even been announced yet. Unpublished blog drafts were sitting on a publicly searchable server, and Fortune discovered and reported on them. That happened on March 26.

The leaked content included the model name, performance figures, and internal warnings. The model is called Claude Mythos, with the internal codename Capybara. Anthropic stated “training is complete and it’s the most powerful AI we’ve ever built.” But they also said it carries “unprecedented cybersecurity risks.”

Within 24 hours of the leak, social media was buzzing. Cybersecurity stocks dropped across the board, and prediction markets opened on Polymarket. Assuming “the most powerful AI ever” is real, here is a breakdown of what changes and how.

– Claude Mythos = codename Capybara, a new tier above Opus

– ~3,000 unpublished assets exposed in an external data cache

– Coding, reasoning, and cybersecurity benchmarks all significantly improved vs Opus 4.6

– “Most advanced cyber capabilities of any AI” — Anthropic’s own warning

– Cybersecurity stocks dropped across the board, Bitcoin also fell

– Anthropic is directly briefing senior government officials on cyber risks

– Currently only limited early access for defensive cybersecurity researchers

How Did It Leak? — How 3,000 Unpublished Assets Got Exposed

The cause was a configuration error in Anthropic’s content management system (CMS). Unpublished blog drafts were stored in an externally searchable data cache. They weren’t publicly listed, but anyone with the URL could access them.
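The failure mode described above, drafts that are unlisted but still readable by anyone who has the URL, can be illustrated with a small audit sketch. The `Asset` fields, the example URLs, and the `find_exposed_drafts` helper are all hypothetical; nothing here reflects how Anthropic's CMS is actually built.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """Hypothetical CMS asset record (field names are assumptions)."""
    url: str
    published: bool    # has an editor marked it as live?
    listed: bool       # does it appear in public indexes or sitemaps?
    public_read: bool  # can an unauthenticated request fetch it?


def find_exposed_drafts(assets):
    """Return unpublished assets that an anonymous visitor could still fetch."""
    return [a for a in assets if not a.published and a.public_read]


# Made-up inventory: one live post, one exposed draft, one properly locked draft.
assets = [
    Asset("https://example.com/blog/launch-post", True, True, True),
    Asset("https://example.com/cache/draft-001", False, False, True),
    Asset("https://example.com/cache/draft-002", False, False, False),
]

exposed = find_exposed_drafts(assets)
for asset in exposed:
    print(f"exposed draft: {asset.url}")
```

The point of the sketch is that "not listed" and "not readable" are separate settings. An audit that only checks the first one would miss exactly the kind of exposure reported here.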

Two security researchers made the discovery. Roy Paz from LayerX Security and Alexandre Pauwels from Cambridge University found the exposed data cache. About 3,000 unpublished assets were accessible, including Claude Mythos-related drafts. Fortune contacted Anthropic, and access was blocked only after the report was published.

Anthropic officially acknowledged it as “human error.” Many found it ironic that a company that prioritizes security above all made such a mistake. After the leak, Anthropic confirmed the model’s existence, saying “it’s the most powerful AI we’ve developed to date, representing a step change in performance.”

What Is Claude Mythos?

The internal codename is Capybara. It’s a new tier above Opus, Claude’s current top model. According to leaked documents, Capybara was designed as a separate model layer from the Opus series — larger in size with a different reasoning approach. Some analysts consider Mythos to be effectively “Opus 5.”

Anthropic defined this model as a “general-purpose model.” It’s not just strong in one area — it performs consistently high across coding, reasoning, cybersecurity, and scientific analysis. The leaked documents included language calling Capybara “the most powerful AI ever.”

How Is It Different From Opus?

Claude’s current top model is Opus 4.6. Capybara is a tier above, and leaked documents mention it will be more expensive. Anthropic also directly stated “the cost is too high for general release at this time.” It’s not just better performance — the operating costs are on a different level entirely.

Actual Performance — How Much Better Is It?

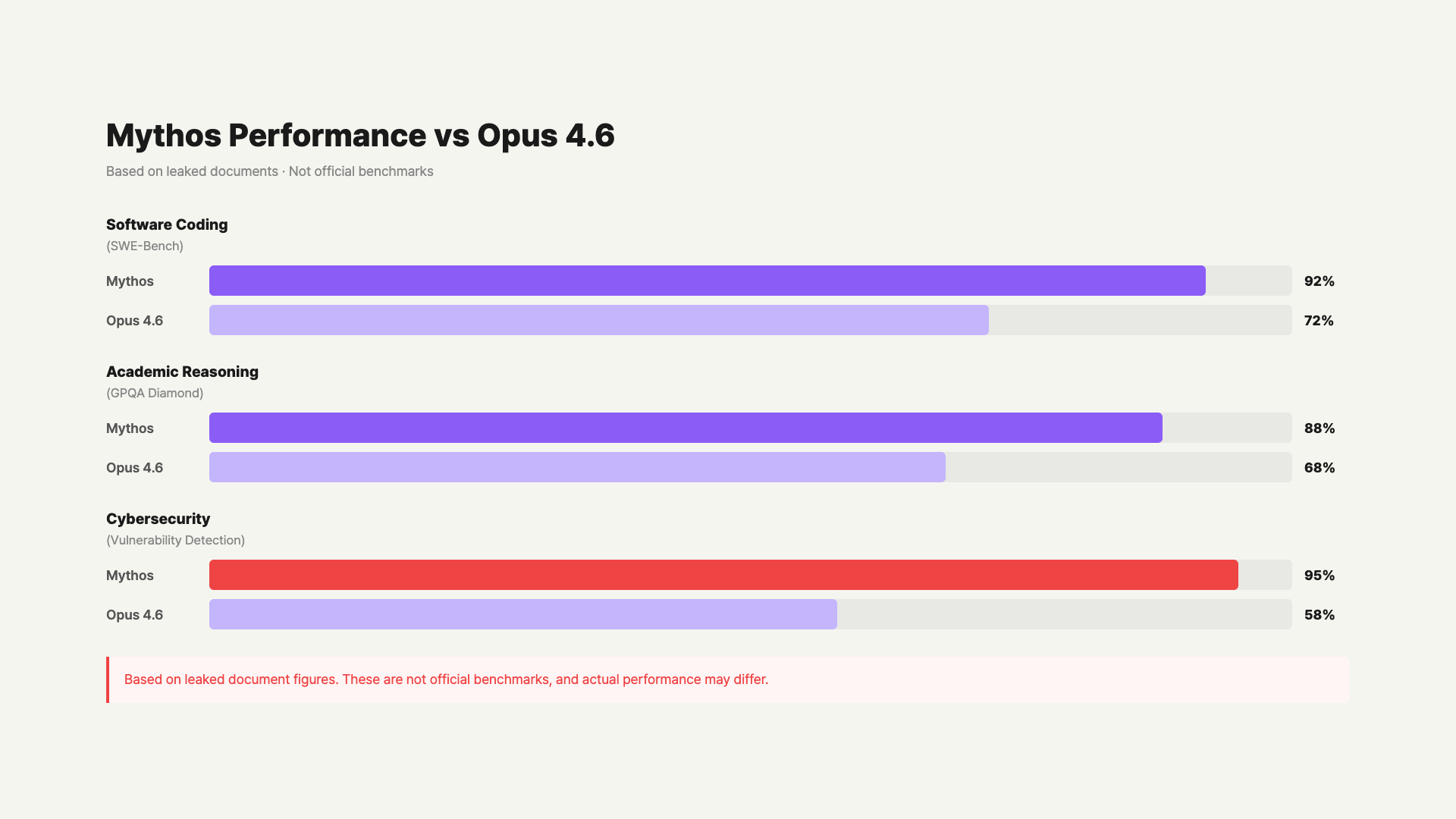

The benchmark results from the leaked document show a significant gap. Compared to Opus 4.6, Mythos scored much higher across all three areas: software coding, academic reasoning, and cybersecurity. Only partial figures were revealed in the leak, but there seems to be a good reason they used the term “step change.”

| Area | Claude Opus 4.6 | Claude Mythos (Capybara) |
|---|---|---|
| Software Coding (SWE-Bench) | Top tier | Significantly improved (“dramatically higher”) |
| Academic Reasoning (GPQA Diamond) | Top tier | Highest among existing public models |
| Cybersecurity Capabilities | Strong | “Most advanced of any existing AI” |
| Operating Cost | High | Higher (unconfirmed) |
| General Availability | Available | Early access (limited) |

Since these comparisons are qualitative and come from leaked material, they should be treated with caution. Still, Anthropic’s own use of the phrase “step change” suggests something beyond a typical incremental improvement.

Claude Mythos vs Opus 4.6 Performance Comparison (Based on Leaked Info) / GoCodeLab

Why Did They Call It Dangerous?

The most striking part of the leaked documents is the cybersecurity warning. Anthropic internally assessed that “it’s more advanced in cyber capabilities than any other AI.” Its ability to rapidly find and exploit software vulnerabilities has significantly increased. The documents used language like “it can exploit vulnerabilities in ways that far outpace current defenders.”

That’s why Anthropic decided not to release this model to the general public immediately. Instead, the first early access group is limited to defensive cybersecurity researchers. The logic is to help defense before offense, giving defenders “a head start” before the model is widely available.

Anthropic’s warning doesn’t mean Mythos is dangerous in itself; it means the model could be dangerous if misused. A company that recognizes a risk and adjusts its release plan accordingly is acting responsibly. Some view “a company that warns first” as more trustworthy.

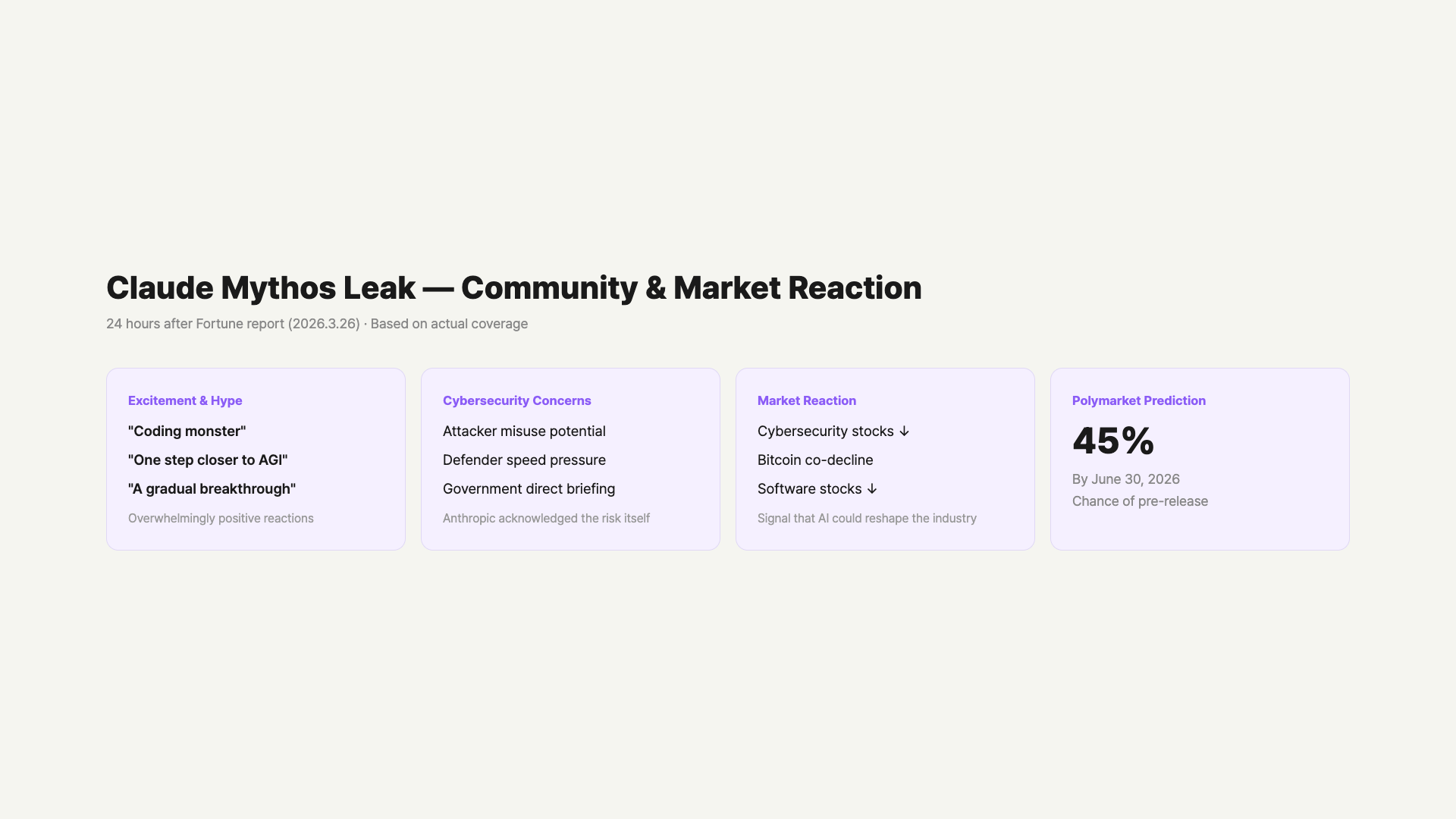

How Did the Community and Markets React?

Right after the leak, reactions exploded on X (Twitter) and Reddit. Excitement was overwhelming, with comments like “coding monster,” “one step closer to AGI,” and “the step change is real.” The exact phrase from Anthropic calling it “the most powerful” went viral, fueling the hype. Anticipation was especially high among engineers and researchers who actively use Claude for development.

On the other hand, cybersecurity concerns were also prominent. Many expressed anxiety pointing out that “Anthropic themselves said it’s the most dangerous.” Reports indicate that after the leak, Anthropic has been directly briefing senior government officials about this model’s cybersecurity risks. Some maintained skeptical views, saying “it’s an unreleased model, probably exaggerated” or “the leak itself might be intentional marketing.”

Market reaction was even more immediate. Cybersecurity stocks dropped across the board, and Bitcoin and software stocks fell alongside them. The market read the leak as a signal that “AI could render existing cybersecurity solutions obsolete.” On Polymarket (a crypto-based prediction market), a market opened on “Will Claude Mythos be publicly released by June 30, 2026?” At the time of writing it was trading at an implied probability of about 45%.
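For readers unfamiliar with prediction markets, the 45% figure can be unpacked with a little arithmetic. The sketch below assumes a standard binary market in which a "Yes" share pays 1.00 if the event happens and 0 otherwise, and it ignores fees and spreads. The `implied_odds` helper is hypothetical and does not use any Polymarket API.

```python
def implied_odds(price):
    """Interpret the price of a binary 'Yes' share that pays 1.00 on success.

    Assumes no fees or spread, so the price is read directly as a probability.
    """
    if not 0 < price < 1:
        raise ValueError("price must be strictly between 0 and 1")
    profit_if_yes = 1.0 - price
    return {
        "implied_probability": price,
        "profit_per_share_if_yes": profit_if_yes,
        "return_if_yes": profit_if_yes / price,
    }


odds = implied_odds(0.45)
print(f"implied probability: {odds['implied_probability']:.0%}")
print(f"profit per share if Yes: {odds['profit_per_share_if_yes']:.2f}")
print(f"return if Yes: {odds['return_if_yes']:.0%}")
```

In other words, a price of 0.45 means traders collectively put the odds of a public release by the deadline slightly below even.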

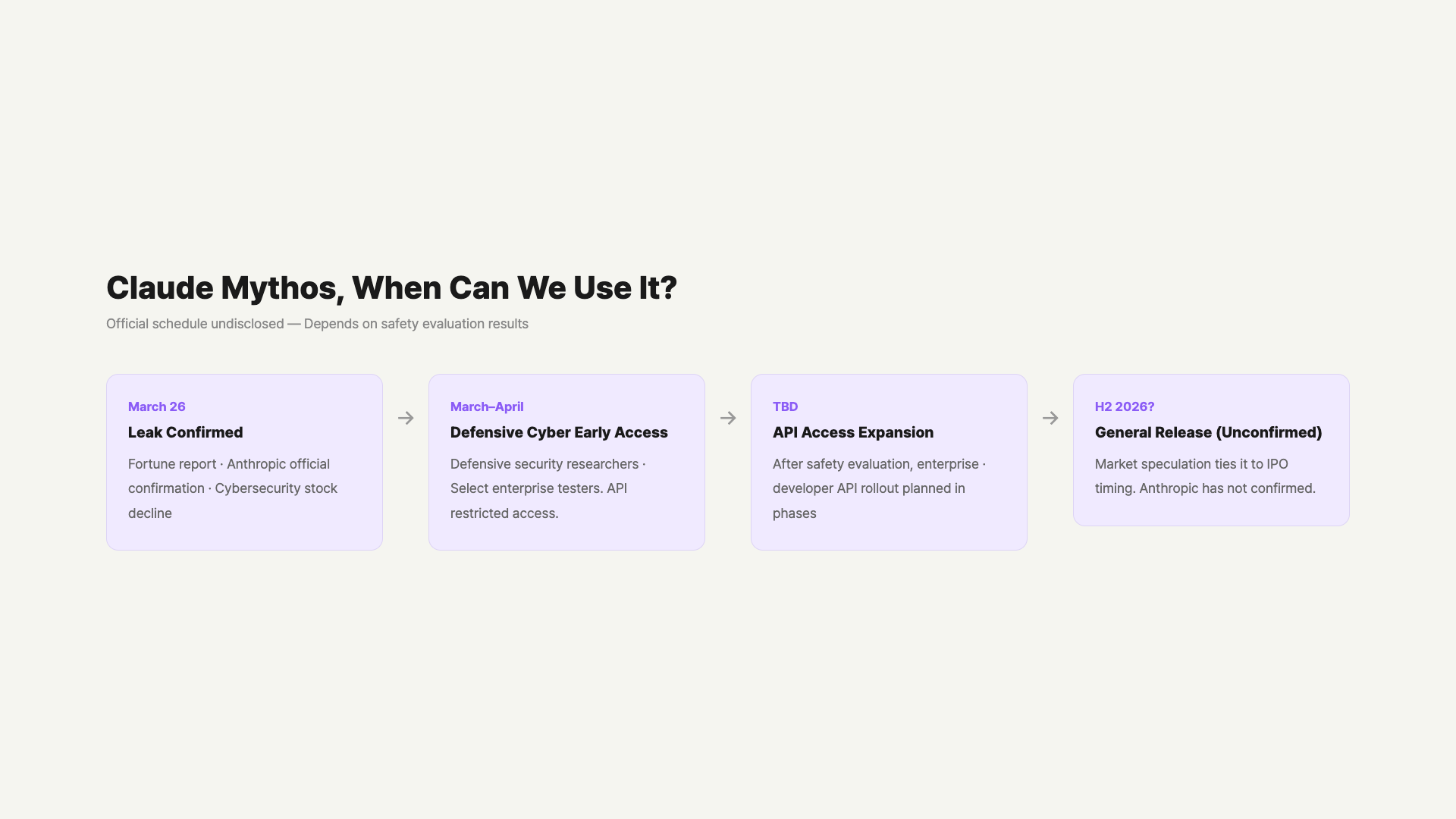

Can You Use It Now?

Currently, only a small group of early access customers can test it. Anthropic plans to gradually expand access through the Claude API. The first phase targets researchers and companies focused on defensive cybersecurity, with broader API access opening in later phases.

When general users will be able to use it on Claude.ai hasn’t been specified. Anthropic stated the release timeline “depends on safety evaluation results, not commercial plans.” Each phase requires passing safety evaluations before moving to the next.
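The phased rollout described above, where each phase has to pass a safety evaluation before the next one opens, can be sketched as a simple gate. The phase names and the `next_phase` helper are illustrative assumptions based on this article's description, not Anthropic's actual process or terminology.

```python
# Illustrative phase names, ordered from most restricted to least restricted.
PHASES = [
    "defensive_security_early_access",
    "broader_api_access",
    "general_availability",
]


def next_phase(current, passed_safety_eval):
    """Advance one phase only if the current phase's safety evaluation passed.

    Returns the current phase unchanged if the evaluation failed or if the
    rollout is already at the final phase.
    """
    idx = PHASES.index(current)  # raises ValueError for an unknown phase
    if not passed_safety_eval or idx == len(PHASES) - 1:
        return current
    return PHASES[idx + 1]


phase = "defensive_security_early_access"
phase = next_phase(phase, passed_safety_eval=False)  # stays put
print(phase)
phase = next_phase(phase, passed_safety_eval=True)   # moves forward one step
print(phase)
```

Note that there is no date anywhere in the gate. That matches Anthropic's statement that the timeline depends on evaluation results and not on a commercial schedule.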

Bloomberg reported that Anthropic is pursuing an October 2026 IPO with a projected valuation of around $380 billion. Publicly launching “the most powerful AI” around the IPO would likely support a higher valuation, so some analysts expect the Mythos release to align with the IPO timeline. However, Anthropic has not officially confirmed any release schedule.

What Does This Mean for the AI Industry?

The Mythos leak reveals two main things. First, the performance gap between AI models is widening faster than expected. Opus 4.6 came out not long ago, yet a model described as a “step change” above it already exists. Compared to competitors like GPT-5.4 and Gemini 3 Deep Think, you can feel how fast the AI performance race is moving.

Second, AI companies are increasingly disclosing safety concerns proactively. Where before they tried to hide risks, now speaking up first is becoming the way to build trust. Anthropic’s “defenders first” principle can be seen in this context. How the balance between offense and defense shifts in cybersecurity will be the biggest story going forward.

FAQ

Q. Are Claude Mythos and Capybara the same model?

Yes, they’re the same model. Capybara is the internal codename, and Mythos is the public name that appeared in the leaked draft. Which name Anthropic will officially launch with hasn’t been finalized. Some expect it to be “Claude Opus 5,” but Anthropic hasn’t made any official naming announcement.

Q. I’m using Opus 4.6 — should I switch when Mythos comes out?

No need to switch immediately. According to the leaked documents, Mythos is expected to be more expensive than Opus. For typical tasks, Sonnet 4.6 or Opus 4.6 is still plenty. Mythos makes sense for high-difficulty coding, security analysis, or complex academic research, where you are hitting the limits of current models.

Q. They mentioned cybersecurity risks — is it safe to use?

Anthropic’s warning is about the risk if the model is misused. It doesn’t mean it’s dangerous for regular users. A company recognizing risks and applying access restrictions, phased releases, and a defenders-first principle is actually a safety measure. Watching rather than worrying is the appropriate response.

Q. When will regular users be able to use it?

No official timeline. Anthropic only said the decision depends on safety evaluation results. Some speculate it could align with Anthropic’s IPO schedule (expected H2 2026), but this isn’t confirmed. Currently it’s in early access, with API availability expanding gradually.

Q. Will this leak hurt Anthropic?

In the short term, there’s some reputational damage. A security-focused company accidentally exposed ~3,000 unpublished assets. But as the model’s performance became known, expectations also rose. As seen in the cybersecurity stock drops, the industry is taking this model seriously. Without the leak, they would have prepared quietly — instead, they’re getting even more attention.

Q. What exactly is Anthropic’s safety evaluation?

Anthropic is well known for its AI safety research. Before releasing new models, they conduct risk assessments under their Responsible Scaling Policy. If a model exceeds thresholds in areas like cybersecurity, biological risks, or autonomy, they delay the release. Mythos triggered those thresholds in cybersecurity, which is why they adopted a defense-community-first access policy.

Wrap-Up

Claude Mythos isn’t a publicly released model yet. It became known through a leak, and while Anthropic confirmed its existence, there’s been no formal announcement. We’ll need official benchmarks to know exactly how different the performance is. For now, we can only sketch an outline based on leaked information.

Two important signals emerged from this leak. Anthropic using “step change” means this isn’t a simple performance improvement. And an AI company proactively acknowledging risks and adjusting its release approach is evidence that the industry is gradually maturing.

When Claude Mythos officially launches, GoCodeLab plans to be among the first to test it hands-on, compare it side by side with GPT-5.4 and Gemini 3 Deep Think, and share the findings.

This article was written on March 30, 2026. Claude Mythos information is based on leaked documents and Anthropic’s official comments. As pre-announcement information, actual specs may differ.
