Open Source AI Showdown — Llama 4 vs Gemma 4 vs DeepSeek V4 vs GLM-5.1

Four open-source LLMs compared by benchmarks, licensing, pricing, and local deployment. A 2026 selection guide.

Table of Contents (10)

- 1. Full Comparison — At a Glance

- 2. Llama 4 Maverick — The 1M-Token Giant

- 3. Gemma 4 — Frontier AI That Runs on a Laptop

- 4. DeepSeek V4 — 1 Trillion Parameters, 1/50 the Price

- 5. GLM-5.1 — The New #1 in Coding Benchmarks

- 6. Detailed Benchmark Comparison

- 7. License and Pricing Comparison

- 8. Recommendations by Use Case

- 9. FAQ

- 10. Conclusion

April 2026 · AI Trends

Open-source AI models have started beating paid ones. GLM-5.1 took the #1 spot on SWE-Bench Pro, surpassing Claude Opus 4.6 and GPT-5.4. And it is open source — free to use.

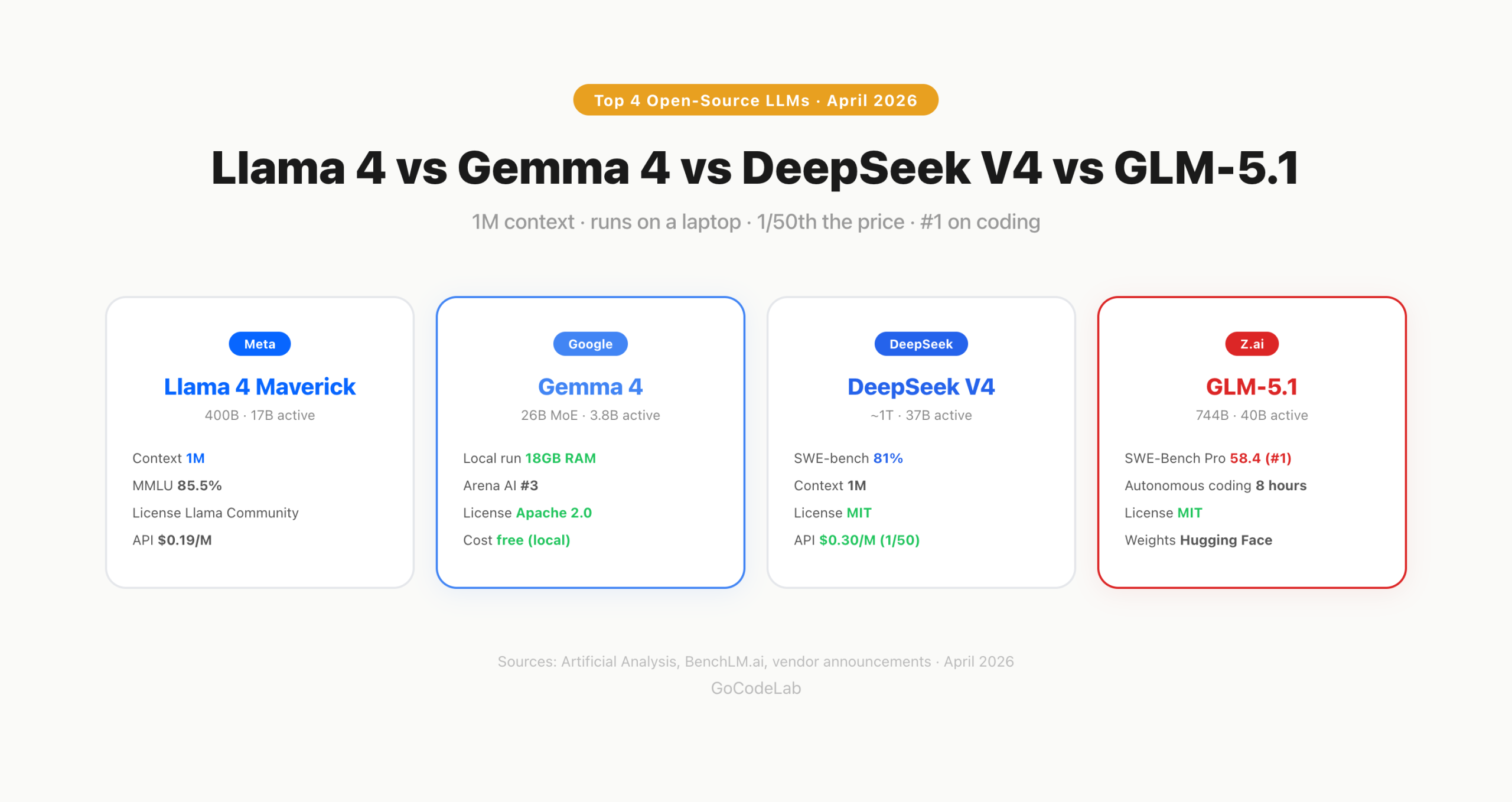

As of April 2026, the open-source LLM landscape has settled into a top four: Meta's Llama 4 Maverick, Google's Gemma 4, DeepSeek V4, and Z.ai's GLM-5.1. Each model takes a completely different approach.

One handles 1 million tokens. One runs on a laptop. One costs 1/50 the price of GPT-5.4. One tops the coding benchmark. Here is a comparison covering benchmarks, licenses, pricing, and local deployment.

Quick Summary

• Llama 4 Maverick — 400B parameters, 1M token context, MoE architecture

• Gemma 4 — 26B MoE, runs locally on 18GB RAM, fully open Apache 2.0

• DeepSeek V4 — ~1T parameters, SWE-bench Verified 81%, API at $0.30/M input tokens

• GLM-5.1 — SWE-Bench Pro 58.4 (#1), MIT license, 8-hour autonomous coding

• Best for coding: GLM-5.1 / Cost: DeepSeek / Local: Gemma / Long-context: Llama

1. Full Comparison — At a Glance

Here are the core specs of all four models.

| Spec | Llama 4 | Gemma 4 | DeepSeek V4 | GLM-5.1 |
|---|---|---|---|---|
| Developer | Meta | Google | DeepSeek | Z.ai (Zhipu) |
| Total Parameters | 400B | 26B / 31B | ~1T | 744B |
| Active Parameters | 17B | 3.8B (26B) | 37B | 40B |
| Context | 1M | 256K | 1M | — |
| SWE-bench | — | — | 81% (Verified) | 58.4 (Pro) |
| License | Llama License | Apache 2.0 | MIT | MIT |
| API Price (Input/M) | $0.19 | Free (local) | $0.30 | Subscription |
| Local Deployment | Difficult (400B) | Possible (18GB) | Difficult (~1T) | Difficult (744B) |

All four models use MoE (Mixture of Experts) architecture. Only a fraction of total parameters activate per query, which boosts efficiency. Like a buffet that prepares every dish but serves each guest only what they need.
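
The routing idea can be sketched in a few lines. This is a toy illustration of top-k expert routing, not the actual router of any of the four models: the eight "experts" are hypothetical scaling functions, the router scores are random, and `k=2` is an arbitrary choice.

```python
import math
import random


def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    weight_sum = sum(probs[i] for i in chosen)
    return {i: probs[i] / weight_sum for i in chosen}


def moe_forward(x, experts, router_scores, k=2):
    """Run only the selected experts and blend their outputs by weight."""
    routing = route_top_k(router_scores, k)
    return sum(weight * experts[i](x) for i, weight in routing.items())


# Toy setup (assumption): 8 experts, each scaling its input by a different factor.
experts = [lambda x, scale=s: x * scale for s in range(1, 9)]
random.seed(0)
router_scores = [random.uniform(-1, 1) for _ in experts]

routing = route_top_k(router_scores, k=2)
output = moe_forward(3.0, experts, router_scores, k=2)
print(f"experts used: {sorted(routing)} of {len(experts)}")
print(f"output: {output:.3f}")
```

Only two of the eight experts run for this input. The other six still have to sit in memory, which is why total parameter count drives hardware requirements while active parameter count drives per-token compute.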

2. Llama 4 Maverick — The 1M-Token Giant

Llama 4 Maverick is Meta's latest open model. It has 400B parameters with 128 experts in an MoE setup. Only 17B are active at inference time, keeping costs low.

Its biggest weapon is the 1 million token context window, tied with DeepSeek V4 for the widest among the four models here. You can feed in entire codebases or long documents in a single pass. It also scores 85.5% on MMLU.
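
Whether a codebase actually fits depends on its token count. Here is a rough sketch for estimating that before sending anything. The 4-characters-per-token ratio is a common rule of thumb and an assumption here, not the behavior of the Llama 4 tokenizer, and the function names and the 50,000-token reserve are hypothetical.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary
CONTEXT_WINDOWS = {"Llama 4 Maverick": 1_000_000, "Gemma 4": 256_000}  # from the spec table


def estimate_tokens(text):
    """Approximate the token count of a string from its length."""
    return len(text) // CHARS_PER_TOKEN


def estimate_repo_tokens(root, suffixes=(".py", ".md")):
    """Sum the estimated tokens of all matching files under a directory."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits(token_count, window, reserve_tokens=50_000):
    """Check the input fits while leaving room for instructions and the reply."""
    return token_count + reserve_tokens <= window


# Example with an in-memory string standing in for a 2 MB codebase.
sample_tokens = estimate_tokens("x" * 2_000_000)
for model, window in CONTEXT_WINDOWS.items():
    print(f"{model}: fits={fits(sample_tokens, window)}")
```

For a real project, `estimate_repo_tokens("path/to/repo")` gives the number to pass to `fits`. Treat the result as a first filter and count with the model's own tokenizer before relying on it.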

The weak point is the license. The Llama License is not fully open source. Services with over 700 million monthly active users need separate approval from Meta. Not an issue for startups, but a potential blocker for large-scale services.

Think of it as a team of specialists. When a question comes in, only the most relevant experts are activated. Out of the total 400B parameters, only 17B activate per query — so inference is fast and costs stay low.
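
To put numbers on that, the share of parameters active per query can be computed directly from the spec table. A small sketch; treating "~1T" as exactly 1000B is an approximation.

```python
# (total, active) parameter counts in billions, taken from the spec table.
MODELS = {
    "Llama 4 Maverick": (400, 17),
    "Gemma 4 (26B MoE)": (26, 3.8),
    "DeepSeek V4": (1000, 37),  # "~1T" treated as 1000B (approximation)
    "GLM-5.1": (744, 40),
}


def active_fraction(total_b, active_b):
    """Share of the model's parameters that run for a single query."""
    return active_b / total_b


for name, (total, active) in MODELS.items():
    print(f"{name}: {active_fraction(total, active):.1%} of parameters active")
```

Llama 4 Maverick activates 17 of 400 billion parameters, a little over 4% of the model, per query.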

3. Gemma 4 — Frontier AI That Runs on a Laptop

Gemma 4 is Google's model released under Apache 2.0. It comes in four sizes, and the 26B MoE version is the standout. Active parameters are just 3.8B. With 4-bit quantization, it runs locally in 18GB of RAM.

If you have an Apple Silicon MacBook (M1 or newer) with enough memory for the model, you can install it via Ollama in under 5 minutes. The key advantage is offline AI: your data never leaves the device.
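
Once Ollama is running, a local model can be called over its REST API on port 11434. A minimal sketch using only the standard library. The model tag `gemma4:26b` is a hypothetical placeholder, so check `ollama list` for the tag actually installed on your machine.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4:26b"  # hypothetical tag (assumption); use the one `ollama list` shows


def build_request(prompt, model=MODEL_TAG):
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model=MODEL_TAG, timeout=120):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("Summarize this file: ...")` sends the prompt to `localhost` only, which is what keeps the data on the device.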

Performance is impressive too. The 31B model hits 85.2% on MMLU Pro and ranks #3 among open models on Arena AI. The 26B MoE ranks #6. The performance-to-size ratio is outstanding. It supports over 140 languages, making it strong for multilingual tasks.

The license is among the most permissive here. Apache 2.0 means no MAU limits and no usage restrictions. Fully free for commercial use.

4. DeepSeek V4 — 1 Trillion Parameters, 1/50 the Price

DeepSeek V4 is a roughly 1 trillion (1T) parameter model. Active parameters are 37B, maximizing MoE efficiency. It scores 81% on SWE-bench Verified, approaching GPT-5.4 coding performance.

Pricing is the killer feature. The API costs $0.30 input and $0.50 output per million tokens. That is roughly 1/50 the price of GPT-5.4, while delivering about 90% of the performance. For cost-sensitive services, this is a decisive advantage.

It supports a 1 million token context window with 97% Needle-in-a-Haystack accuracy. It handles text, images, and video as a multimodal model. The MIT license imposes no usage restrictions beyond keeping the license notice.

The gap compounds at volume. If 1 million monthly requests cost hundreds of dollars on the GPT-5.4 API, the same workload at roughly 1/50 the price lands between a few dollars and about twenty dollars on DeepSeek V4. The performance gap is only about 10%. For startups and side projects, that difference is decisive.
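
The actual bill depends on how many tokens each request uses, which varies by application. A minimal sketch using the DeepSeek V4 prices quoted above; the three request sizes are illustrative assumptions, not measurements.

```python
# USD per million tokens, as quoted in this article.
DEEPSEEK_V4 = {"input": 0.30, "output": 0.50}


def monthly_cost(requests, in_tokens, out_tokens, price):
    """Total monthly API cost in USD for a fixed per-request token budget."""
    input_cost = requests * in_tokens / 1_000_000 * price["input"]
    output_cost = requests * out_tokens / 1_000_000 * price["output"]
    return input_cost + output_cost


# Assumed request sizes as (input tokens, output tokens); adjust to your workload.
WORKLOADS = {
    "short classification": (20, 10),
    "chat turn": (150, 50),
    "document Q&A": (500, 200),
}

for label, (in_tok, out_tok) in WORKLOADS.items():
    cost = monthly_cost(1_000_000, in_tok, out_tok, DEEPSEEK_V4)
    print(f"{label}: ${cost:,.2f} per 1M requests")
```

Output tokens cost more than input tokens, so response length moves the total faster than prompt length does.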

5. GLM-5.1 — The New #1 in Coding Benchmarks

GLM-5.1 was released by Z.ai (formerly Zhipu AI) on April 7, 2026. It has 744B parameters with 40B active in an MoE setup. MIT license.

It scored 58.4 on SWE-Bench Pro, taking the #1 spot. An open-source model that beat Claude Opus 4.6 and GPT-5.4. One of the first cases where open source surpassed paid models in coding tasks.

Its most unique feature is 8-hour autonomous coding. It can work on a single coding task for up to 8 hours on its own. The AI keeps writing code even after you leave for the day.

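It also recorded a 3.6x speedup on KernelBench Level 3. KernelBench measures how fast model-written GPU kernels run against a PyTorch baseline, so the figure means the kernels GLM-5.1 generated for full model workloads ran 3.6 times faster than the reference implementations. Model weights are available on Hugging Face.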

6. Detailed Benchmark Comparison

Here are the key benchmarks (think of them as standardized tests for AI performance) broken down by category.

| Benchmark | Llama 4 | Gemma 4 | DeepSeek V4 | GLM-5.1 |
|---|---|---|---|---|
| MMLU | 85.5% | 85.2% (MMLU Pro, 31B) | — | — |
| SWE-bench Verified | — | — | 81% | — |
| SWE-Bench Pro | — | — | — | 58.4 |
| Arena AI | 1,417 (Elo) | #3 rank (31B) | — | — |
| Context Window | 1M | 256K | 1M | — |
| Local Deployment (Min RAM) | — | 18GB | — | — |

No single model leads in every category. For coding, GLM-5.1 and DeepSeek V4 lead. For general knowledge, Llama 4 and Gemma 4. For local deployment, only Gemma 4 is practical. Each excels in its own domain.

7. License and Pricing Comparison

When choosing an open-source model, the license matters. Not all "open source" is the same.

| Item | Llama 4 | Gemma 4 | DeepSeek V4 | GLM-5.1 |
|---|---|---|---|---|
| License | Llama License | Apache 2.0 | MIT | MIT |
| Commercial Use | Conditional (700M MAU limit) | Fully open | Fully open | Fully open |
| API Price (Input/M) | $0.19 | Free (local) | $0.30 | Subscription |
| Fine-tuning | Supported | Supported | Supported | Supported |
| Weights Available | Hugging Face | Hugging Face | Hugging Face | Hugging Face |

In terms of license freedom alone, Gemma 4 (Apache 2.0), DeepSeek V4 (MIT), and GLM-5.1 (MIT) are fully permissive. Llama 4 has restrictions for large-scale services. For startups, all four are fine.
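
The license table can be encoded as a quick screening check. This is a simplified reading of the table above, not of the license texts themselves: it models only the 700 million MAU condition, and the names `LicenseTerms` and `usable_without_approval` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LicenseTerms:
    name: str
    mau_cap: Optional[int]  # None means no user-count condition in the table


LICENSES = {
    "Llama 4": LicenseTerms("Llama License", 700_000_000),
    "Gemma 4": LicenseTerms("Apache 2.0", None),
    "DeepSeek V4": LicenseTerms("MIT", None),
    "GLM-5.1": LicenseTerms("MIT", None),
}


def usable_without_approval(monthly_active_users):
    """List models whose table entry allows this scale without separate approval."""
    return [
        model
        for model, terms in LICENSES.items()
        if terms.mau_cap is None or monthly_active_users <= terms.mau_cap
    ]


print(usable_without_approval(50_000))         # startup scale
print(usable_without_approval(800_000_000))    # very large service
```

At startup scale the check returns all four models. Only above the 700 million MAU threshold does Llama 4 drop out.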

8. Recommendations by Use Case

| Use Case | Recommendation | Reason |
|---|---|---|
| Coding Automation | GLM-5.1 | SWE-Bench Pro #1, 8-hour autonomous coding |
| Running an API Service | DeepSeek V4 | 1/50 the cost of GPT-5.4, 90% performance |
| Local / Offline AI | Gemma 4 | 18GB RAM, 5-minute setup via Ollama |
| Processing Large Documents | Llama 4 Maverick | 1M token context, MMLU 85.5% |
| License Freedom | Gemma 4 / DeepSeek V4 / GLM-5.1 | Apache 2.0 / MIT, no restrictions |
| Data Privacy | Gemma 4 | Runs locally, data never leaves the device |

There is no single right answer. For coding, GLM-5.1. For cost, DeepSeek. For privacy, Gemma. For long context, Llama. All four are free or cheap, so mixing and matching multiple models is also a solid approach.
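
Mixing and matching can be as simple as a lookup keyed by task type. A sketch that mirrors the recommendation table; the task keys and the `pick_model` function are hypothetical names, and a real router would also need per-model API clients.

```python
# Task-to-model mapping taken from the recommendation table.
RECOMMENDATIONS = {
    "coding": "GLM-5.1",
    "api_service": "DeepSeek V4",
    "local": "Gemma 4",
    "long_documents": "Llama 4 Maverick",
    "privacy": "Gemma 4",
}


def pick_model(task, needs_offline=False):
    """Return the recommended model, forcing the local option when offline is required."""
    if needs_offline:
        return RECOMMENDATIONS["local"]
    if task not in RECOMMENDATIONS:
        raise ValueError(f"unknown task {task!r}; expected one of {sorted(RECOMMENDATIONS)}")
    return RECOMMENDATIONS[task]


print(pick_model("coding"))
print(pick_model("long_documents"))
print(pick_model("coding", needs_offline=True))
```

The `needs_offline` flag overrides the task because, per the table, Gemma 4 is the only one of the four that is practical to run locally.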

9. FAQ

Q. What is the best open-source LLM in 2026?

It depends on the use case. For coding, GLM-5.1. For cost-efficient API use, DeepSeek V4. For local deployment, Gemma 4. For long-context processing, Llama 4 Maverick. No single model leads in every category.

Q. Can Gemma 4 run on my laptop?

Yes. The 26B MoE model requires only 18GB RAM with 4-bit quantization. An Apple Silicon MacBook (M1 or newer) works as long as it has that much memory to spare. Install via Ollama and you can be running in under 5 minutes.
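
The role of quantization is easy to see with back-of-the-envelope arithmetic. The sketch below computes memory for the weights alone. That the gap between this figure and the quoted 18GB goes to the KV cache and runtime overhead is an assumption, not something the article states.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


# All 26B parameters must be loaded, even though only 3.8B are active per query.
for bits in (16, 8, 4):
    print(f"26B at {bits}-bit: {weight_memory_gb(26, bits):.1f} GB")
```

At 4 bits per weight the 26B model needs 13 GB for weights, against 52 GB at 16 bits, which is the difference between fitting on a laptop and not.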

Q. Who made GLM-5.1?

Z.ai (formerly Zhipu AI) from China. It is released under the MIT license. It is an open-source model that beat Claude Opus 4.6 and GPT-5.4 on SWE-Bench Pro.

Q. How much does the DeepSeek V4 API cost?

Input $0.30, output $0.50 per million tokens. That is roughly 1/50 the cost of GPT-5.4, while scoring 81% on SWE-bench Verified.

Q. Is Llama 4 Maverick truly open source?

Not entirely. It is released under the Llama License. Commercial use is allowed, but services with over 700 million monthly active users need separate approval from Meta. Gemma 4 (Apache 2.0) and GLM-5.1 (MIT) are more permissive.

10. Conclusion

The era of open source beating paid models is here. GLM-5.1 surpassed Claude and GPT in coding. DeepSeek V4 delivers comparable quality at 1/50 the price. Gemma 4 runs on a laptop.

The decision is straightforward. For coding, GLM-5.1. For cost, DeepSeek V4. For privacy, Gemma 4. For long context, Llama 4. You do not have to pick just one. All four are free or cheap.

• Meta — Llama 4 Models

• Google — Gemma 4

• DeepSeek — V4 Release

• VentureBeat — GLM-5.1 Beats Opus 4.6 on SWE-Bench Pro

Benchmark scores and pricing in this article are as of April 10, 2026. They may change as models are updated.

Benchmark sources: Artificial Analysis, BenchLM.ai, and official announcements from each company.