Microsoft Drops 3 AI Models at Once — MAI-Transcribe, MAI-Voice, MAI-Image Explained

Microsoft announced 3 in-house AI models on April 2: MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2. Speech recognition, voice synthesis, and image generation — all without OpenAI. Here's what it means.

April 4, 2026 · AI News

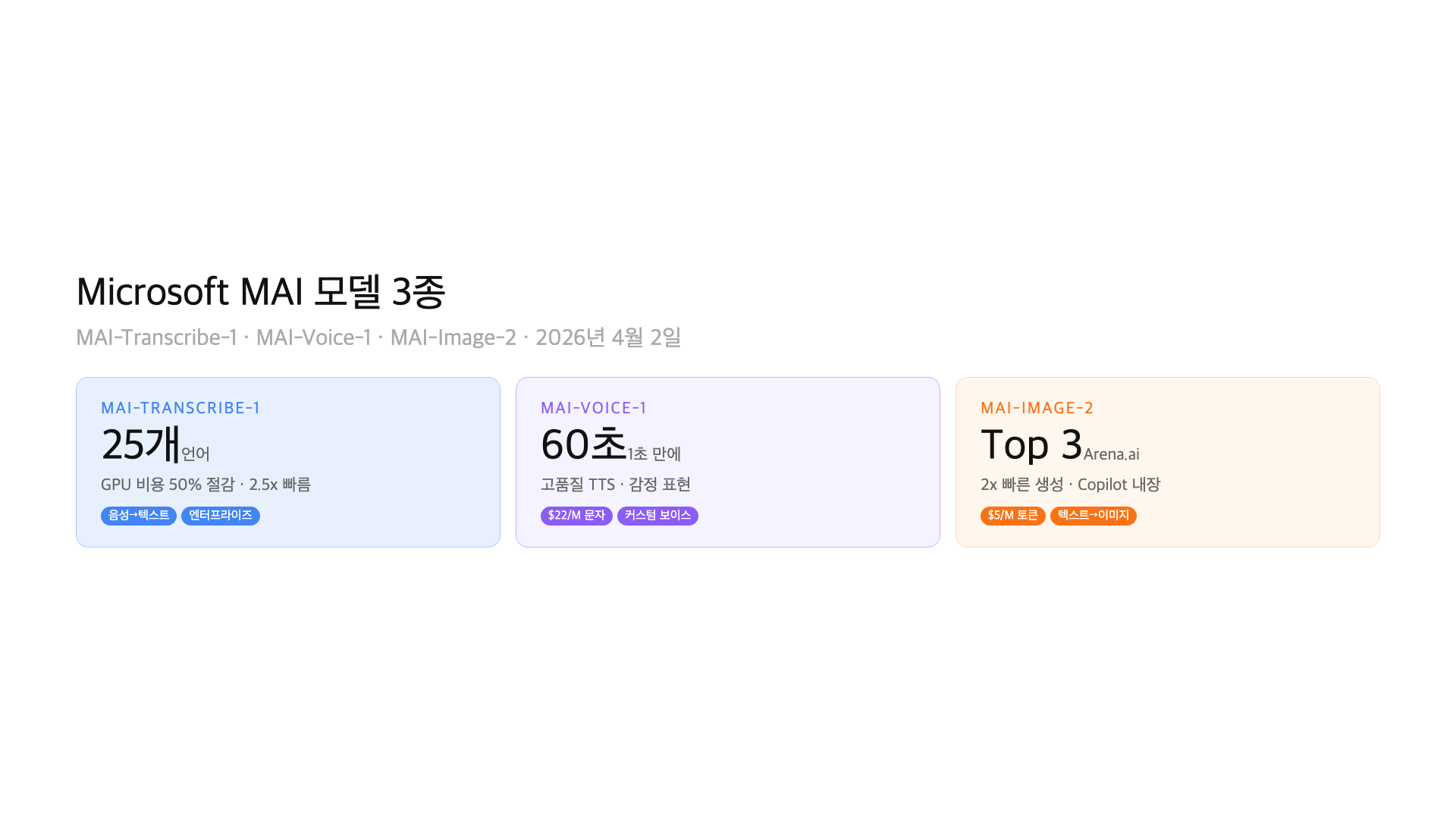

On April 2, Microsoft announced three in-house AI models simultaneously: MAI-Transcribe-1 (speech-to-text), MAI-Voice-1 (text-to-speech), and MAI-Image-2 (text-to-image). Releasing all three at once is unprecedented for Microsoft.

The notable part: these are Microsoft’s own models, not OpenAI technology. Microsoft has been using OpenAI models for Copilot and Bing, but now they’re building their own stack for voice and image. TechCrunch and VentureBeat both describe this as a strategy to “reduce OpenAI dependency.”

These models already power Copilot, Bing, PowerPoint, and Azure Speech. For a quiet announcement, the reach is substantial. Let’s break down each model.

– MAI-Transcribe-1: 25 languages, ~50% lower GPU cost, 2.5x faster than Azure Fast

– MAI-Voice-1: 60 seconds of audio in under 1 second on a single GPU, custom voices

– MAI-Image-2: Top 3 on Arena.ai, 2x faster generation, $5/million input tokens

Why Is Microsoft Building Its Own Models?

Microsoft’s partnership with OpenAI is well known. GPT-4 powers Copilot, and DALL-E runs Bing Image Creator. But depending entirely on OpenAI for speech recognition, voice synthesis, and image generation creates risk.

OpenAI is increasingly focused on its own business: ChatGPT as a consumer product, its own hardware plans, and changes to API pricing. Microsoft’s key AI partner is gradually becoming a competitor. GeekWire reported that “Microsoft is diversifying its AI supply chain.”

The MAI series is the response. Text generation still relies on OpenAI, but voice and image are now covered by Microsoft’s own technology. It’s a classic don’t-put-all-your-eggs-in-one-basket move.

MAI-Transcribe-1 — Speech Recognition at Half the Cost

MAI-Transcribe-1 is an enterprise-grade speech-to-text model. It supports 25 languages, cuts GPU costs by roughly 50%, and runs 2.5x faster than Azure’s Fast transcription mode.

Whisper (OpenAI) has been the de facto standard in speech recognition. Microsoft building its own model signals a deliberate move away from Whisper dependency. MAI-Transcribe-1 is already powering Copilot voice input and Teams meeting transcription.

For enterprises, cost is the key metric. A 50% GPU cost reduction is immediately tangible for call centers and media companies processing thousands of hours of audio daily. Accuracy is reported to match or exceed existing Azure models.

– Languages: 25 supported

– GPU cost: ~50% lower than current Azure models

– Speed: 2.5x faster than Azure Fast

– Use cases: call centers, meeting transcription, subtitles, voice commands
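To see what the quoted ratios would mean for a given workload, here is a minimal sketch that scales a baseline by the two headline figures. The baseline numbers are hypothetical, the function name is illustrative, and the ratios are vendor claims. Note that the 2.5x figure is measured against Azure Fast, while the 50% figure refers to GPU cost against current Azure models, which is not necessarily the price a customer pays.

```python
# Ratios quoted in the announcement coverage; treat them as vendor claims.
SPEEDUP_VS_AZURE_FAST = 2.5   # "2.5x faster than Azure Fast"
GPU_COST_RATIO = 0.5          # "~50% lower GPU cost"


def estimate_transcription(baseline_hours: float, baseline_gpu_cost: float) -> tuple[float, float]:
    """Scale a baseline processing time and GPU cost by the claimed ratios.

    Returns (estimated_hours, estimated_gpu_cost). The baseline values are
    whatever you measure on your current pipeline; nothing here is an
    official pricing formula.
    """
    if baseline_hours < 0 or baseline_gpu_cost < 0:
        raise ValueError("baseline values must be non-negative")
    return baseline_hours / SPEEDUP_VS_AZURE_FAST, baseline_gpu_cost * GPU_COST_RATIO


# Hypothetical workload: 10 hours of processing and 1000 units of GPU spend today.
hours, cost = estimate_transcription(baseline_hours=10.0, baseline_gpu_cost=1000.0)
print(f"estimated processing time: {hours:.1f} h, estimated GPU cost: {cost:.2f}")
```

Real savings depend on your language mix, audio quality, and how Azure passes GPU cost through to pricing, so treat the result as an upper-level estimate to check against a pilot run.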

MAI-Voice-1 — 60 Seconds of Audio in 1 Second

MAI-Voice-1 is a high-fidelity text-to-speech model. It generates 60 seconds of audio in under 1 second on a single GPU. That’s remarkably fast.

It supports emotional range — happy, sad, angry tones can be applied to the generated voice. Where traditional TTS sounds robotic, MAI-Voice-1 aims for podcast narration and audiobook quality.

Custom voice cloning is supported. A few seconds of sample audio are enough to replicate a voice for TTS use. Pricing is $22 per million characters, which is competitive against ElevenLabs and other premium TTS services.

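To put “60 seconds in under 1 second” in perspective: that is at least 60x real-time speed. With that much headroom, synthesis is unlikely to be the bottleneck in live streaming or real-time conversations, though end-to-end latency also depends on the network and on how quickly the first audio chunk arrives. If Azure’s existing TTS was “decent,” MAI-Voice-1 is positioning itself to deliver ElevenLabs-level quality at Azure pricing.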

– Speed: 60 seconds of audio in under 1 second on a single GPU

– Emotion: Happy, sad, angry tone control

– Custom voice: Clone from seconds of audio

– Pricing: $22 / million characters

– Deployed in: Copilot, Azure Speech
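The speed and pricing figures above reduce to simple arithmetic. The sketch below works through both, assuming the quoted $22 per million characters applies linearly with no minimums or tiers (the article does not say either way). The function names and the sample script length are illustrative.

```python
PRICE_PER_MILLION_CHARS_USD = 22.0  # figure quoted in the article


def tts_cost_usd(num_chars: int) -> float:
    """Estimated cost of synthesizing num_chars characters at the quoted rate."""
    if num_chars < 0:
        raise ValueError("num_chars must be non-negative")
    return num_chars / 1_000_000 * PRICE_PER_MILLION_CHARS_USD


def real_time_factor(audio_seconds: float, generation_seconds: float) -> float:
    """How many seconds of audio are produced per second of compute."""
    if generation_seconds <= 0:
        raise ValueError("generation_seconds must be positive")
    return audio_seconds / generation_seconds


# Hypothetical audiobook chapter of 500,000 characters.
print(f"cost: ${tts_cost_usd(500_000):.2f}")
# "60 seconds of audio in under 1 second" means the factor is at least 60.
print(f"real-time factor: at least {real_time_factor(60, 1):.0f}x")
```

Because the claim is "under 1 second", 60x is a lower bound on throughput for that hardware, not a measured latency.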

MAI-Image-2 — Top 3 on Arena.ai

MAI-Image-2 is a text-to-image generation model. It ranks in the top 3 on Arena.ai’s leaderboard. Generation speed is 2x faster than previous models, with pricing at $5 per million input tokens.

This is significant because Bing Image Creator has been running on DALL-E (now GPT Image). If MAI-Image-2 replaces it, Microsoft eliminates its dependency on OpenAI for image generation entirely.

Direct comparison with GPT Image 1.5 or Midjourney v7 is difficult at this stage, but the pricing and speed are competitive. Expect to see it powering automated slide images in PowerPoint and search result visuals in Bing.

– Quality: Top 3 on Arena.ai leaderboard

– Speed: 2x faster generation

– Pricing: $5 / million input tokens

– Deployed in: Copilot, Bing, PowerPoint
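For a rough budget, the input-token price can be turned into a per-workload estimate. This is a sketch under stated assumptions: the article quotes only the $5 per million input tokens figure, so any per-image or output-side charge is left out, and the prompt length of 200 tokens is a hypothetical placeholder rather than a measured value.

```python
PRICE_PER_MILLION_INPUT_TOKENS_USD = 5.0  # figure quoted in the article


def image_prompt_cost_usd(num_requests: int, tokens_per_prompt: int) -> float:
    """Estimated input-token cost for a batch of image generation requests.

    Covers only the input-token price quoted in the article; any other
    charges are unknown here and therefore not modeled.
    """
    if num_requests < 0 or tokens_per_prompt < 0:
        raise ValueError("inputs must be non-negative")
    total_tokens = num_requests * tokens_per_prompt
    return total_tokens / 1_000_000 * PRICE_PER_MILLION_INPUT_TOKENS_USD


# Hypothetical workload: 10,000 requests with prompts of about 200 tokens each.
print(f"input-token cost: ${image_prompt_cost_usd(10_000, 200):.2f}")
```

Swap in token counts from your own prompts before comparing against other image APIs, since pricing units differ between providers.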

Where Can You Use Them?

All three models are available through Microsoft Foundry and MAI Playground. Azure customers can integrate directly with existing infrastructure. Foundry also provides standalone API access.

Several Microsoft products already use these models. Copilot (voice input, image generation), Bing (image search), PowerPoint (slide images), and Azure Speech (enterprise voice services) all have MAI models integrated.

For individual developers, the barrier to entry is still relatively high. Foundry access is required, and pricing is enterprise-oriented. However, some testing may be available through Azure free tiers. If you’re interested, sign up for the Foundry waitlist.

| Model | Domain | Key Strength | Products |
|---|---|---|---|
| MAI-Transcribe-1 | Speech → Text | ~50% lower GPU cost, 2.5x faster than Azure Fast | Teams, Copilot |
| MAI-Voice-1 | Text → Speech | 60 s of audio in under 1 s, emotional range | Azure Speech, Copilot |
| MAI-Image-2 | Text → Image | Arena.ai top 3, 2x faster generation | Bing, PowerPoint |

Reducing OpenAI Dependency — The Big Picture

Microsoft’s strategy in one sentence: “Text stays with OpenAI, everything else is ours.” GPT handles text generation, but voice and image are now in-house.

The reasoning is clear. OpenAI is evolving into an independent product company. ChatGPT directly serves consumers, API prices are rising, and there’s talk of OpenAI building its own hardware. Microsoft’s most important AI partner could become a competitor.

The MAI series isn’t just a product announcement. It’s part of a long-term strategy to take control of Microsoft’s AI supply chain. If Microsoft eventually builds in-house text generation models too, the OpenAI relationship could fundamentally change.

What This Means for Developers

You don’t need to change anything immediately. But there are a few things worth noting.

First, if you’re on Azure, speech-related costs could drop. MAI-Transcribe-1 claims 50% GPU cost reduction. If you have an existing Azure Speech pipeline, it’s worth evaluating a switch.

Second, if your project needs TTS, check MAI-Voice-1’s value proposition. At $22 per million characters, it could undercut ElevenLabs. Custom voice cloning from just seconds of audio is a strong feature for branded voice experiences.

Third, if you use image generation APIs, MAI-Image-2 is a new option. Cost comparisons against GPT Image or FLUX will require real testing, but a top-3 Arena.ai ranking is a strong signal of quality.

FAQ

Q. Are MAI models only available on Azure?

They’re available through Microsoft Foundry and MAI Playground. Azure customers can integrate directly with their existing infrastructure, and Foundry provides standalone API access. Some features may require waitlist registration as availability is still rolling out.

Q. Does MAI-Transcribe-1 support languages other than English?

Yes, it supports 25 languages. Accuracy may vary compared to English performance, so testing with your specific target language before production deployment is recommended.

Q. Can MAI-Voice-1 clone my voice?

Yes, it can create custom voices from just a few seconds of sample audio. This feature is available through the enterprise API and requires compliance with Microsoft’s ethical use guidelines, including consent procedures for voice cloning.

Q. Is Microsoft breaking up with OpenAI?

No, the partnership continues. GPT models still power Copilot’s core text capabilities. But Microsoft is clearly diversifying by building in-house models for voice and image. TechCrunch, VentureBeat, and GeekWire have all documented this strategic shift.

Q. Is MAI-Image-2 better than DALL-E?

It ranks in the top 3 on Arena.ai, which is a strong signal of quality, but a direct comparison with GPT Image 1.5 depends on the specific use case. MAI-Image-2 is 2x faster than previous models and priced at $5 per million input tokens, which could give it a cost advantage in high-volume scenarios.

Wrap-up

What Microsoft demonstrated with the MAI series is simple: “We can build this ourselves.” Covering speech recognition, voice synthesis, and image generation with in-house technology in a single announcement is a statement.

The immediate impact on most developers is limited. But if you’re on Azure, need TTS, or use image generation APIs, the MAI models are worth evaluating. The bigger signal is that the era of depending on a single AI provider is ending. Even Microsoft has acknowledged that.


This article was written on April 4, 2026, based on Microsoft’s official announcement of April 2, 2026. Features and pricing may change.
