Free AI Transcription Is Here — Cohere Transcribe vs Whisper vs ElevenLabs Accuracy Comparison

April 1, 2026 · Comparison

When it comes to converting meeting recordings to text, there’s now a new option besides Whisper. On March 26, Cohere released Cohere Transcribe, and it hit #1 on the Hugging Face Open ASR Leaderboard. With 2B parameters and a free API, the numbers are quite impressive.

OpenAI Whisper already holds a dominant position in much of the AI transcription market. ElevenLabs challenged it with Scribe v2, and models like NVIDIA Canary and IBM Granite have also joined the competition. Now Cohere, previously known for enterprise LLMs, has entered the STT space for the first time. We compared how the three models differ and which works best in different situations.

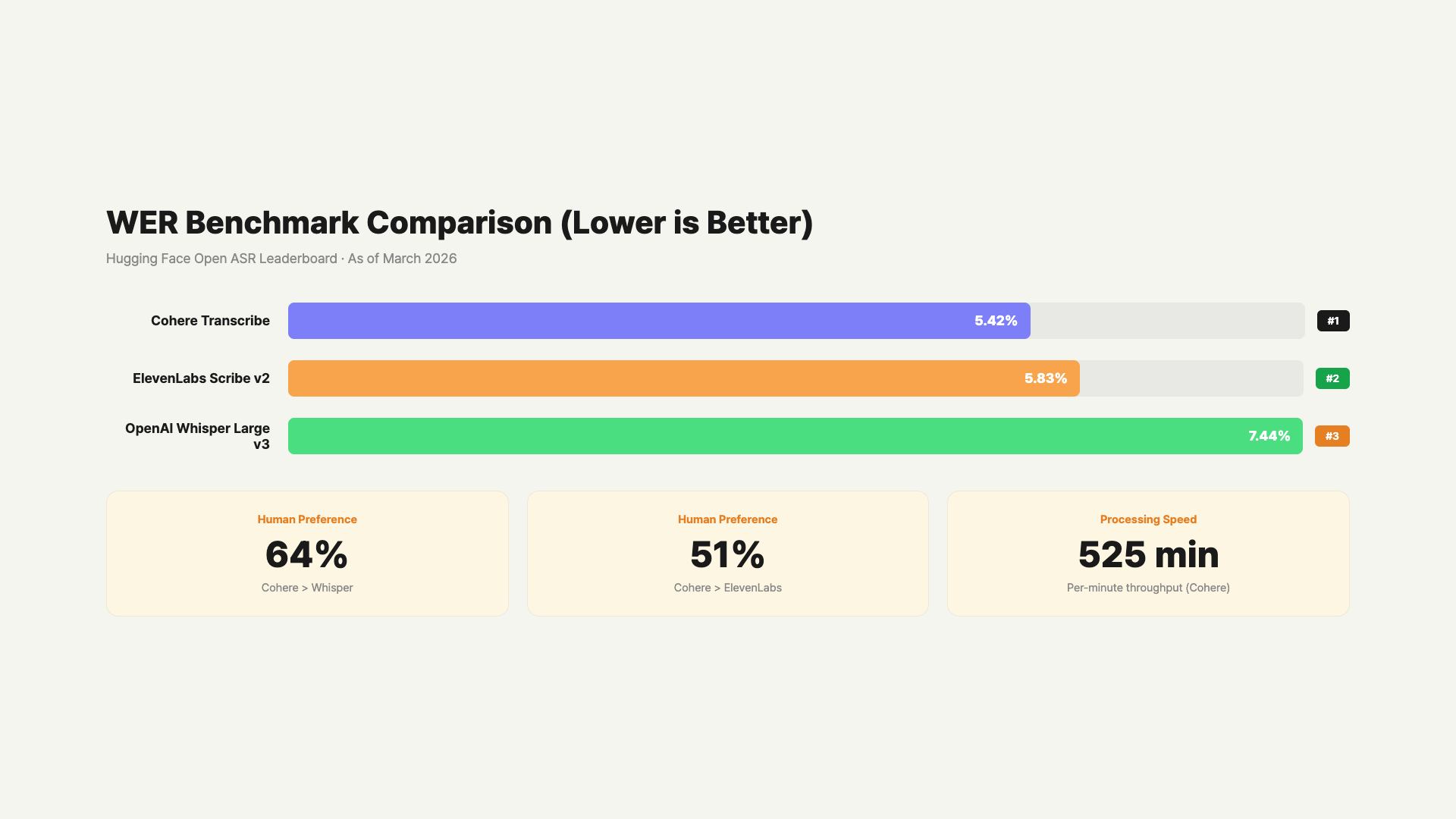

STT (Speech-to-Text) models measure accuracy using WER (Word Error Rate). The lower the number, the more accurate the transcription. Ranking the three models by this standard, the results are quite clear.

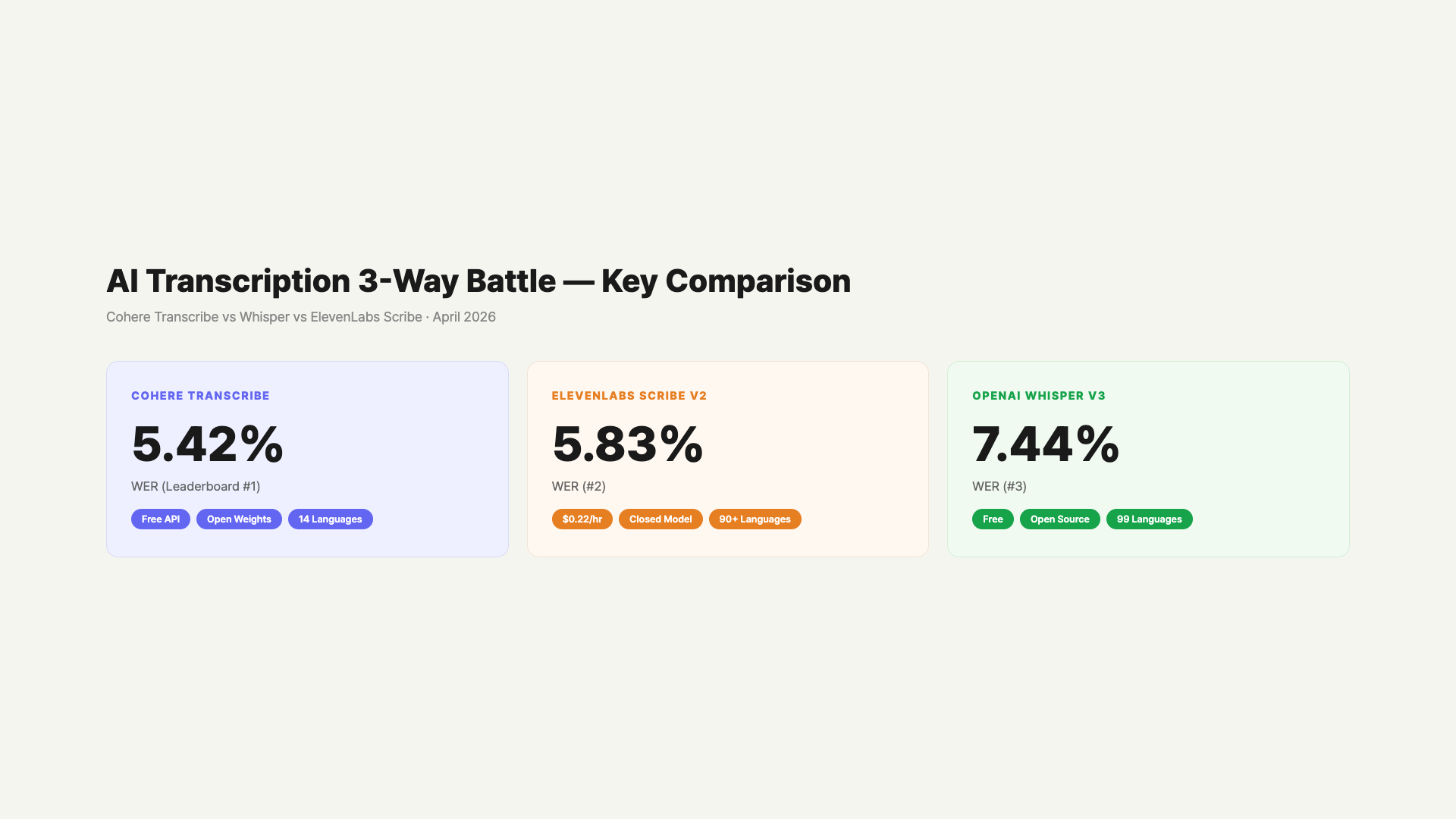

– Cohere Transcribe: WER 5.42%, free open-source (Apache 2.0), 14 languages (incl. Korean), 2B parameters

– ElevenLabs Scribe v2: WER 5.83%, paid ($0.22/hour), 90+ languages, closed model

– OpenAI Whisper Large v3: WER 7.44%, fully open-source, 99 languages, 1.55B parameters

– In human evaluations, Cohere was preferred over Whisper by 64% and over ElevenLabs by 51% (English)

– Cohere trails in human preference for German (44%), Spanish (48%), and Portuguese (48%)

– Korean accuracy trails English; Whisper’s Korean CER is around 11%, and the other two publish no Korean-specific figures

- Where Did Cohere Transcribe Come From?

- Accuracy Comparison by WER Numbers

- Performance by Language — Where Is It Strong and Weak?

- Speed and Cost — What’s It Like in Practice?

- How Good Is Korean Support Really?

- Recommendations — Which One Should You Pick?

- Practical Uses — Meeting Notes, Subtitles, and Content Creation

- FAQ

- Wrap-Up

Where Did Cohere Transcribe Come From?

Cohere is a Toronto-based AI company founded in 2019. They’re known for enterprise text AI including the Command series LLMs, embedding models (Embed), and re-ranking models (Rerank). In 2025, their annual recurring revenue (ARR) exceeded $240 million, with an IPO expected in 2026. Their first entry into STT came as part of expanding their enterprise agent platform, North.

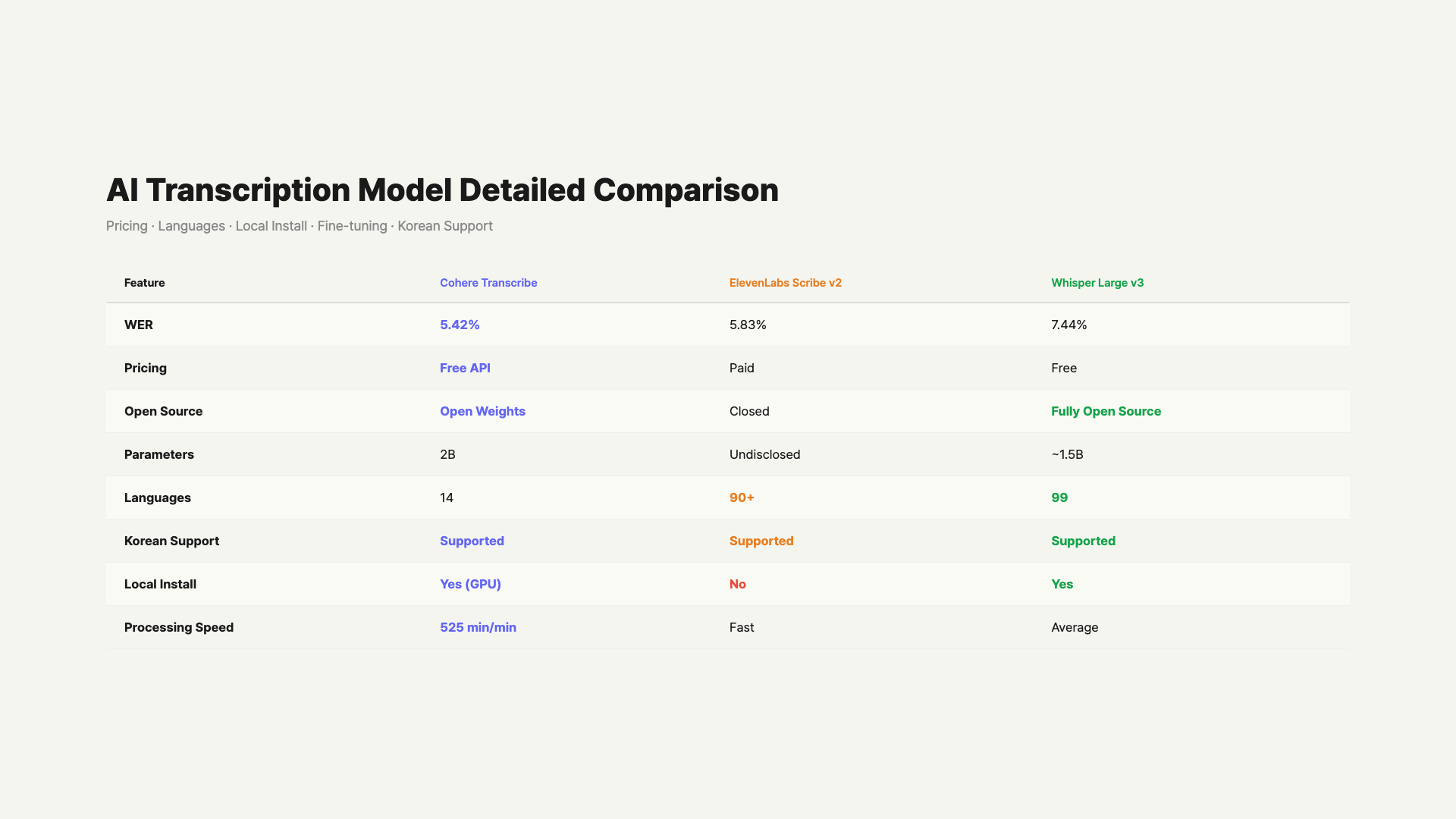

Transcribe is a Conformer-based ASR (Automatic Speech Recognition) model. Conformer is an architecture that combines CNN and Transformer, widely used in speech recognition. The model size is 2B (2 billion) parameters — about 30% larger than Whisper Large v3 at 1.55B. It’s a dedicated model that takes audio input and outputs text, with no text generation or conversation capabilities. It focuses purely on transcription.

The model weights are published on Hugging Face under the Apache 2.0 license, meaning commercial use is allowed. The API is currently free on Cohere’s official page, and it’s also available through their managed inference platform, Model Vault. It will soon be integrated into North, Cohere’s enterprise agent orchestration platform launched in August 2025, which lets companies run AI agents behind their own firewalls.

Local installation is also possible. With quantization (INT4, etc.), it can run on less than 8GB VRAM, making it compatible with consumer GPUs like the RTX 3070. There’s even a WebGPU demo on Hugging Face where you can test it directly in your browser.
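
To see why quantization matters for a 2B-parameter model, here is a rough back-of-the-envelope sketch. It only estimates the memory taken by the weights themselves. Activations, audio buffers, and runtime overhead are not included, so real VRAM use will be higher. The function name and the list of precisions are illustrative assumptions, not figures from Cohere.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS = 2e9  # 2B parameters, as stated for Cohere Transcribe

# Illustrative precisions; which ones a given runtime supports is an assumption.
for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{label}: ~{weight_memory_gb(PARAMS, bits):.1f} GB for weights")
```

Treat the output as a lower bound when checking whether a particular GPU has enough headroom.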

Accuracy Comparison by WER Numbers

WER (Word Error Rate) is the standard performance metric for transcription models. It counts substitution, deletion, and insertion errors and divides that total by the number of words in the reference transcript. A WER of 5.42% means roughly 5 to 6 errors per 100 reference words.
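
If you want to check WER on your own recordings, the metric is simple enough to compute by hand. The sketch below is a minimal version using word-level edit distance. It assumes plain whitespace tokenization and does no text normalization (lowercasing, punctuation removal), which benchmarks typically apply before scoring, so its numbers will not match leaderboard values exactly.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] = edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "the cat sat on the mat"
hypothesis = "the cat sat on mat"
print(f"WER: {word_error_rate(reference, hypothesis):.2%}")  # one deletion out of six words
```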

The numbers below are all from the Hugging Face Open ASR Leaderboard (as of March 26, 2026). Since they’re measured under the same benchmark conditions, it’s a fair comparison.

| Model | WER (lower is better) | Price | Open source | Languages |
|---|---|---|---|---|
| Cohere Transcribe | 5.42% | Free API | Open weights (Apache 2.0) | 14 |
| ElevenLabs Scribe v2 | 5.83% | Paid ($0.22/hour) | Closed | 90+ |
| OpenAI Whisper Large v3 | 7.44% | Free | Fully open-source | 99 |

Looking purely at benchmark numbers, Cohere leads. The WER gap between Cohere and Whisper represents about a 27% relative improvement. However, WER can vary significantly depending on test datasets and language composition. Cohere’s 5.42% is the leaderboard average, and the variance is significant when you look at individual languages.
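
The 27% figure is a relative reduction, not a difference in percentage points. The gap in points is about 2. A quick sketch of the arithmetic, using the leaderboard numbers quoted above (the function name is just for illustration):

```python
def relative_improvement(baseline_wer: float, new_wer: float) -> float:
    """Fraction by which new_wer reduces the error rate compared to baseline_wer."""
    return (baseline_wer - new_wer) / baseline_wer


whisper_wer = 7.44  # percent, from the leaderboard figures in this article
cohere_wer = 5.42   # percent

print(f"Absolute gap: {whisper_wer - cohere_wer:.2f} points")
print(f"Relative improvement: {relative_improvement(whisper_wer, cohere_wer):.1%}")
```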

There are also human evaluation results. Evaluators compared the models head to head on accuracy, consistency, and usability. For English, Cohere Transcribe was preferred over Whisper Large v3 in 64% of comparisons and over ElevenLabs Scribe v2 in 51%. Against IBM Granite 4.0 1B, the preference rate reached 78%. The average win rate against other models was 61%. Keep in mind, though, that these evaluations were conducted by Cohere themselves.

The leaderboard also features other strong models like NVIDIA Canary Qwen 2.5B (WER 5.63%), IBM Granite Speech 3.3 8B (WER 5.85%), and Qwen3-ASR-1.7B (WER 5.76%). Cohere posted a lower WER than all three, and it did so with fewer parameters (2B) than Canary Qwen 2.5B and Granite Speech 3.3 8B, although Qwen3-ASR-1.7B is smaller still.

Performance by Language — Where Is It Strong and Weak?

Cohere Transcribe supports 14 languages: English, French, German, Italian, Spanish, Portuguese, Greek, Dutch, Polish, Chinese, Japanese, Korean, Vietnamese, and Arabic. Compared to Whisper’s 99 languages, it’s definitely fewer, but it covers most major languages.

The issue is that it’s not #1 across all 14 languages. Cohere acknowledged weaknesses in their official announcement. In human evaluations, German scored 44%, Spanish and Portuguese each scored 48%. Below 50% means it actually lost to competing models. It fell behind in three European languages.

On the other hand, it’s clearly strong in some languages. In Japanese, it showed 66% preference over Whisper and 70% over Qwen3-ASR. Italian was also high at 60%. On the published figures, Cohere leads in English and Japanese. Korean and Chinese are also supported, but no per-language preference numbers are cited here for them.

If you plan to use it primarily for German, Spanish, or Portuguese, Whisper Large v3 might be a better choice. If you’re focused on English or Japanese, Cohere is the stronger option on the published numbers.

ElevenLabs Scribe v2 supports over 90 languages. In terms of language coverage alone, it rivals Whisper (99). However, since it’s a closed model, per-language WER numbers haven’t been published. ElevenLabs reported achieving 93.5% accuracy on the FLEURS multilingual benchmark in their own tests.

Speed and Cost — What’s It Like in Practice?

Cohere announced that Transcribe processes at 525 minutes of audio per minute. That means uploading a 30-minute meeting recording would produce text in about 3-4 seconds. However, this figure was self-reported by Cohere and hasn’t been independently verified. Actual processing speed may vary depending on server hardware and batch settings.
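
The conversion from "525 minutes of audio per minute" to seconds per file is simple division. The sketch below takes Cohere’s self-reported speed as its default. That default is an assumption to replace with whatever you measure yourself.

```python
def estimated_seconds(audio_minutes: float, speed_factor: float = 525.0) -> float:
    """Wall-clock seconds to transcribe audio_minutes at speed_factor x real time."""
    return audio_minutes / speed_factor * 60


for minutes in (30, 60, 180):
    print(f"{minutes} min of audio: ~{estimated_seconds(minutes):.1f} s")
```

Upload time and queueing are not part of this estimate, so end-to-end latency through an API will be longer.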

The cost structure is where the three models differ the most. Here’s the current situation.

| Model | API Cost | Local Install | Notes |
|---|---|---|---|
| Cohere Transcribe | Free | Possible (under 8GB VRAM) | May become paid later |
| ElevenLabs Scribe v2 | $0.22/hr (batch), $0.39/hr (real-time) | Not possible | Real-time processing (150ms) billed separately |
| OpenAI Whisper Large v3 | Free (self-hosted) | Possible | GPU costs separate |

Cohere’s API is currently free. Sign up for the Cohere API and you can use the Transcribe endpoint right away. However, there’s a possibility that Cohere may charge for premium features after integrating it into their enterprise platform North. For now, the free tier is generous.

ElevenLabs is definitely paid. Batch processing costs $0.22/hour, and real-time processing is $0.39/hour. Not a big deal for small-scale use, but costs add up with large volumes. There’s a free tier, but quotas are limited.

Whisper is fully open-source, so there’s no API cost. However, running your own server requires GPU costs — either renting cloud GPUs or using local GPUs. Third-party hosting services like Groq or Together AI offer affordable API access.

On cost alone, Cohere has the biggest advantage. They offer a free API plus open weights. It’s accuracy and free pricing in one package. That said, free may not last forever, so it’s worth testing while you can.
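
To get a feel for how the paid option scales, here is a small sketch that multiplies audio hours by the per-hour ElevenLabs rates quoted above. It deliberately ignores the free tier, plan bundles, and any volume discounts, none of which are detailed in this article, so treat the output as a rough ceiling.

```python
# Per-hour rates as quoted in this article (USD); check current pricing before relying on them.
RATES_USD_PER_HOUR = {"batch": 0.22, "realtime": 0.39}


def scribe_cost_usd(audio_hours: float, mode: str = "batch") -> float:
    """Estimated cost for audio_hours of transcription at a flat per-hour rate."""
    return audio_hours * RATES_USD_PER_HOUR[mode]


for hours in (10, 1_000, 10_000):
    batch = scribe_cost_usd(hours, "batch")
    realtime = scribe_cost_usd(hours, "realtime")
    print(f"{hours:>6} h: batch ${batch:,.2f}, real-time ${realtime:,.2f}")
```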

How Good Is Korean Support Really?

Korean is included in Cohere Transcribe’s list of 14 supported languages. The official announcement explicitly mentions Korean. However, they haven’t published Korean-specific WER numbers. Given the high preference rate of 66-70% for Japanese, Korean might perform similarly, but that’s just speculation.

Whisper supports 99 languages and has supported Korean since its earliest versions. For Korean speech recognition, CER (Character Error Rate) is used more commonly than WER, because Korean has irregular spacing that makes word-level comparison less accurate. Whisper Large v3’s Korean CER is reportedly about 11.13% on the KsponSpeech benchmark — roughly 3x higher than English (CER 3.91%).
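
The spacing problem is easy to demonstrate. In the sketch below, the hypothesis differs from the reference only by one extra space, yet word-level scoring counts two errors. The CER function here strips whitespace before comparing, which is one common convention but an assumption on our part. Individual benchmarks may handle spaces differently.

```python
from typing import Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance between two sequences (lists of words or strings of characters)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (r != h)))
        prev = curr
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    ref_words = reference.split()
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    # Assumption: whitespace is removed so spacing differences are not counted as errors.
    ref_chars = "".join(reference.split())
    hyp_chars = "".join(hypothesis.split())
    return edit_distance(ref_chars, hyp_chars) / len(ref_chars)


reference = "회의를 시작하겠습니다"
hypothesis = "회의를 시작 하겠습니다"  # same syllables, one extra space

print(f"WER: {wer(reference, hypothesis):.2f}")  # 1.00
print(f"CER: {cer(reference, hypothesis):.2f}")  # 0.00
```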

ElevenLabs Scribe v2 supports over 90 languages, including Korean. However, individual Korean performance metrics haven’t been published separately.

All three models support Korean, but accuracy is generally lower than for English, as the Whisper figures above suggest. Errors increase especially with colloquial speech, fast speaking, and conversations that mix in English words. There are no direct head-to-head Korean comparisons yet, so if Korean performance matters to you, testing all three models on your own audio is the most reliable approach.

One more thing to note about Korean STT: in domains with heavy specialized terminology like medical, legal, or financial, general-purpose STT models tend to have higher error rates. In these environments, models that support fine-tuning like Whisper or Cohere Transcribe have the advantage. ElevenLabs’s model is closed, so fine-tuning isn’t possible.

Recommendations — Which One Should You Pick?

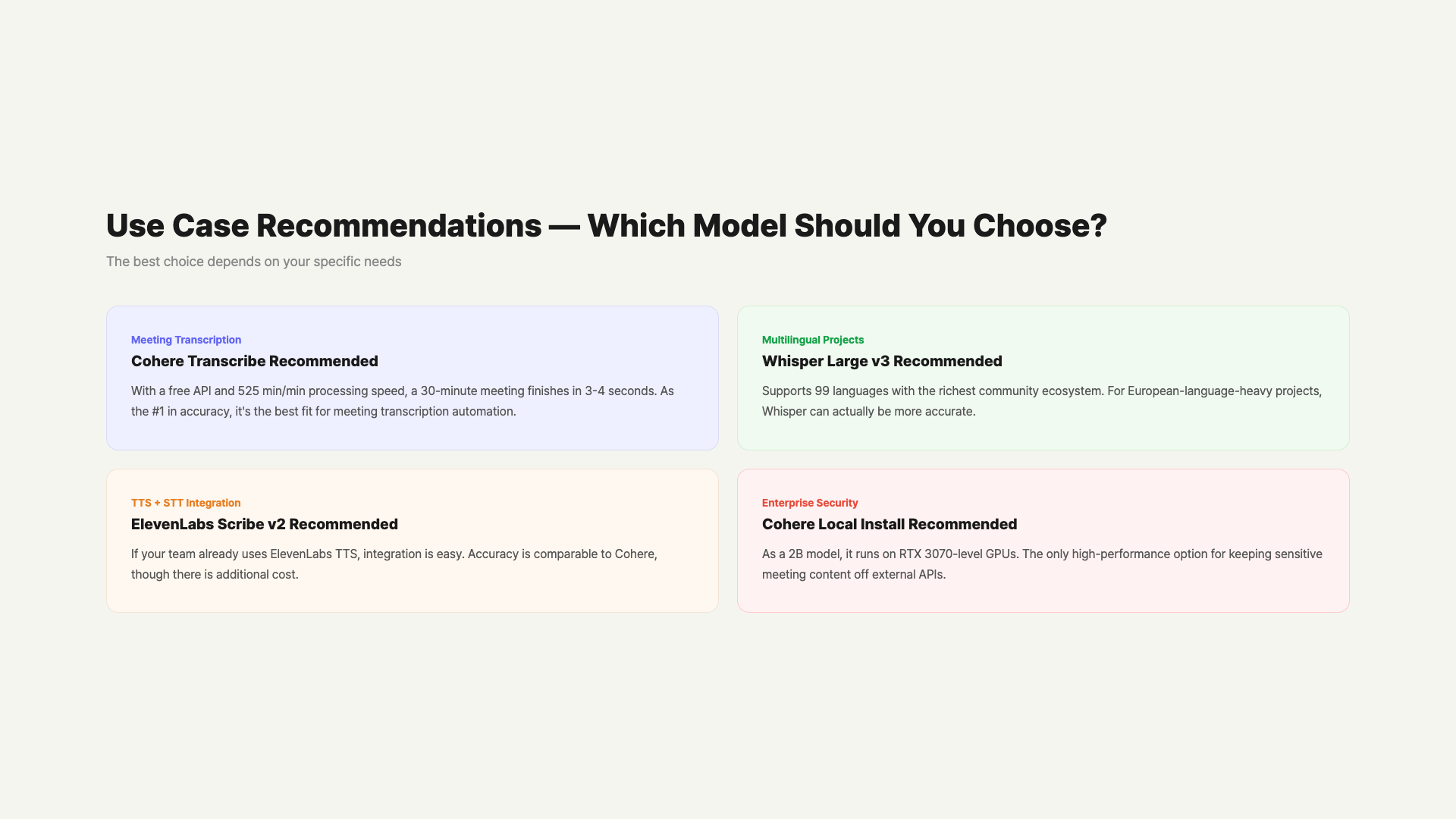

Each of the three models fits different situations. Your choice changes depending on the context.

If accuracy is your top priority and you want to start without cost burden, Cohere Transcribe is currently the most reasonable choice. It’s a free API with leaderboard-leading numbers. Just keep in mind that your target language should be one where Cohere is strong, such as English or Japanese. Korean and Chinese are supported, but no per-language figures are cited for them. For German, Spanish, and Portuguese, it’s actually weaker.

If you need one of 99 languages, or need a battle-tested model in a fully local environment, Whisper Large v3 is the stable choice. It has the highest WER of the three, but its community support and fine-tuning references are the most abundant. There are hundreds of Whisper-based fine-tuned models, making it easy to boost performance for specific domains.

If your team already uses ElevenLabs for TTS, using Scribe v2 alongside it isn’t a bad idea. Workflow integration is easy, and accuracy isn’t far off from Cohere. If you need real-time processing, Scribe v2 Realtime (150ms latency) is a strong advantage — just be prepared for the additional cost.

– English/Korean focus, zero cost to start: Cohere Transcribe

– Multilingual (incl. European), established community, fine-tuning: Whisper Large v3

– ElevenLabs TTS integration workflow, real-time needed: ElevenLabs Scribe v2

– Enterprise security, offline required: Cohere local install or Whisper local install

Practical Uses — Meeting Notes, Subtitles, and Content Creation

STT models are most commonly used for meeting transcription automation. You upload a meeting recording, get text back, and connect it to an LLM like ChatGPT or Claude for summary extraction or action item generation. This pipeline is widely used. Cohere Transcribe delivers both accuracy and speed for this use case. Being a free API means you can build a prototype and test it right away, which is another plus.

YouTube subtitle creation is another major use case. Extract audio from a video file, run STT, and convert to SRT format — done. If Cohere’s processing speed (525 min/min, self-reported) holds true, even a 1-hour video would be transcribed in seconds. This could significantly cut subtitle creation time for YouTube creators.
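
The last step of that pipeline, turning timed segments into SRT, can be sketched in a few lines. This assumes your STT output already comes as `(start_seconds, end_seconds, text)` tuples. Whether and how each model returns timestamps is not covered here, so the input shape is a hypothetical one to adapt.

```python
from typing import Iterable, Tuple

Segment = Tuple[float, float, str]  # (start_seconds, end_seconds, text), an assumed shape


def format_timestamp(seconds: float) -> str:
    """Format seconds as the SRT timestamp HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def segments_to_srt(segments: Iterable[Segment]) -> str:
    """Build SRT text: numbered cues, a timing line, the caption, then a blank line."""
    blocks = []
    for index, (start, end, text) in enumerate(segments, start=1):
        timing = f"{format_timestamp(start)} --> {format_timestamp(end)}"
        blocks.append(f"{index}\n{timing}\n{text.strip()}\n")
    return "\n".join(blocks)


demo = [(0.0, 2.5, "Welcome to the channel."), (2.5, 5.0, "Today we compare three STT models.")]
print(segments_to_srt(demo))
```

Write the returned string to a `.srt` file with UTF-8 encoding and most video players and upload tools should accept it.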

It’s also used for repurposing podcast content into blog posts or newsletters. There’s a growing workflow of transcribing entire podcast episodes with STT, then using LLMs to summarize and edit them into articles. In this case, processing speed might matter more than accuracy — and Cohere Transcribe handles both well.

For environments where enterprise security is critical, the local installation option is decisive. You don’t have to send sensitive meeting content to external APIs. Cohere Transcribe can run on under 8GB VRAM when quantized, meaning it works right on a developer’s PC without a separate server. Whisper also supports local installation, and smaller variants (Turbo, Distil) run even lighter.

There are also environments that process large volumes of audio regularly, like call center voice analysis or medical transcription. API costs can pile up quickly in these settings, and Cohere’s free API significantly reduces the initial cost burden. As scale grows, switching to local deployment is also a viable strategy.

FAQ

Q. Is Cohere Transcribe really free?

Yes, it’s currently free. Sign up for the Cohere API and you can use the Transcribe endpoint at no cost. Model weights are also available on Hugging Face under Apache 2.0, so you can run it locally and use it commercially. However, Cohere may introduce paid premium features as it integrates with the North platform. Best to test while it’s still free.

Q. How much better is it compared to Whisper Large v3?

On the Hugging Face Open ASR Leaderboard, Cohere has WER 5.42% vs Whisper’s 7.44% — about a 27% relative improvement. Human evaluations showed 64% preference for Cohere over Whisper in English, rising to 66% for Japanese. However, Cohere falls behind in German (44%), Spanish (48%), and Portuguese (48%). The accurate understanding is “clearly ahead in certain languages,” not “better than Whisper in every way.”

Q. Is Cohere Transcribe the best for Korean?

Hard to say definitively since there are no official Korean-only comparison numbers. Note that Korean speech recognition commonly uses CER (Character Error Rate) instead of WER. Whisper Large v3’s Korean CER is about 11% on KsponSpeech. Cohere showed high preference for Japanese, so Korean might be similar — but that’s speculation. All three support Korean, so testing with the same audio yourself is the most accurate approach.

Q. Does ElevenLabs Scribe have any advantages?

Yes. First, it supports 90+ languages — much broader than Cohere’s 14. For real-time processing, Scribe v2 Realtime operates at 150ms latency, making it strong for live captioning or real-time interpretation. If your team already uses ElevenLabs TTS, managing STT on the same platform is convenient. WER is 5.83%, not far from Cohere. If you can handle the cost ($0.22-$0.39/hour), it’s a perfectly good choice.

Q. Are there audio file format restrictions?

According to Cohere’s official docs, major audio formats (MP3, WAV, M4A, FLAC, etc.) are supported. Same goes for Whisper. ElevenLabs Scribe also supports most common audio formats. For the exact list, check each service’s official documentation. If you have an unsupported format, convert it to WAV or MP3 with ffmpeg before uploading.
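
For batch jobs, the ffmpeg conversion is easy to script. The sketch below builds the command as a list and runs it with `subprocess`. Converting to 16 kHz mono WAV is our assumption, a common choice for speech models rather than a documented requirement of any of the three services. It also assumes `ffmpeg` is installed and on your PATH.

```python
import subprocess


def build_ffmpeg_command(src: str, dst: str, sample_rate: int = 16000) -> list[str]:
    """Command that converts src to mono audio at sample_rate, dropping any video stream."""
    return [
        "ffmpeg",
        "-y",                     # overwrite dst if it already exists
        "-i", src,                # input file
        "-vn",                    # ignore video streams
        "-ac", "1",               # downmix to one channel
        "-ar", str(sample_rate),  # resample
        dst,
    ]


def convert_audio(src: str, dst: str) -> None:
    """Run ffmpeg and raise CalledProcessError if the conversion fails."""
    subprocess.run(build_ffmpeg_command(src, dst), check=True)


print(" ".join(build_ffmpeg_command("meeting.m4a", "meeting.wav")))
```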

Q. Is the 525 min/min processing speed really accurate?

That’s the figure Cohere stated in their official announcement. However, this speed is based on Cohere’s own infrastructure and hasn’t been independently verified. Actual processing speed may vary depending on server hardware, batch size, audio length, and concurrent requests. Running on a local GPU could be significantly slower. Treat it as a reference number and benchmark in your own environment.
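
Benchmarking in your own environment can be as simple as timing one call. The sketch below measures how many seconds of audio are processed per wall-clock second, which is the same unit as the "525 min/min" claim. The `transcribe` argument is a placeholder for whatever function you use to call a model. The `fake_transcribe` stand-in and the file name are hypothetical and only there so the example runs on its own.

```python
import time
from typing import Callable


def measure_speed_factor(
    transcribe: Callable[[str], str], audio_path: str, audio_seconds: float
) -> float:
    """Audio seconds processed per wall-clock second for a single transcription call."""
    start = time.perf_counter()
    transcribe(audio_path)
    elapsed = time.perf_counter() - start
    return audio_seconds / elapsed


def fake_transcribe(path: str) -> str:
    """Hypothetical stand-in for a real API or local model call."""
    time.sleep(0.05)
    return "placeholder transcript"


factor = measure_speed_factor(fake_transcribe, "meeting.wav", audio_seconds=60.0)
print(f"{factor:.0f}x real time")
```

A single run is noisy. Repeating the measurement over several files of different lengths gives a more reliable picture.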

Q. What is the Conformer architecture?

Conformer is a speech recognition-specific architecture that combines convolution with a Transformer. The convolutional layers capture local audio patterns (pronunciation, syllables), while the Transformer’s attention handles long-range context (sentence flow). Google published it in 2020, and many high-performance ASR models since then have built on it. Whisper uses a Transformer-based structure, while Cohere adopted Conformer, which may offer better speech recognition efficiency per parameter.

Wrap-Up

Cohere Transcribe’s combination of free, open weights (Apache 2.0), and #1 leaderboard position is quite attractive. If you focus on English, Korean, or Japanese, it’s worth testing right now. Just keep in mind it’s weaker in German, Spanish, and Portuguese, and the processing speed claims haven’t been independently verified.

Whisper Large v3 has the highest WER of the three, but its 99-language support and rich community ecosystem remain major advantages. With hundreds of fine-tuning references, it’s the easiest model to customize for specific domains. If you need a “safe choice,” Whisper is still the answer.

ElevenLabs Scribe v2’s strengths are 90+ language support, 150ms real-time processing, and TTS ecosystem integration. If you can handle the cost, it’s the most convenient commercial service.

Korean colloquial performance still isn’t as good as English across all three models. If you’re using them for meeting note automation or subtitle generation, a review step is still needed. That said, the STT market has been advancing rapidly in 2026, and Cohere’s entry has raised the competition another level. It’ll be interesting to see how Whisper and ElevenLabs respond.

Curious about AI transcription? You can test the Cohere Transcribe API for free right now.

This article was written on April 1, 2026. Numbers are based on the Hugging Face Open ASR Leaderboard (as of March 26, 2026) and official announcements from each company. Cohere Transcribe is a newly launched model, and performance and pricing may change.
