Claude Opus 4.7 vs GPT-5.4 vs Gemini 3.1 Pro — The 2026 Coding Agent Benchmark

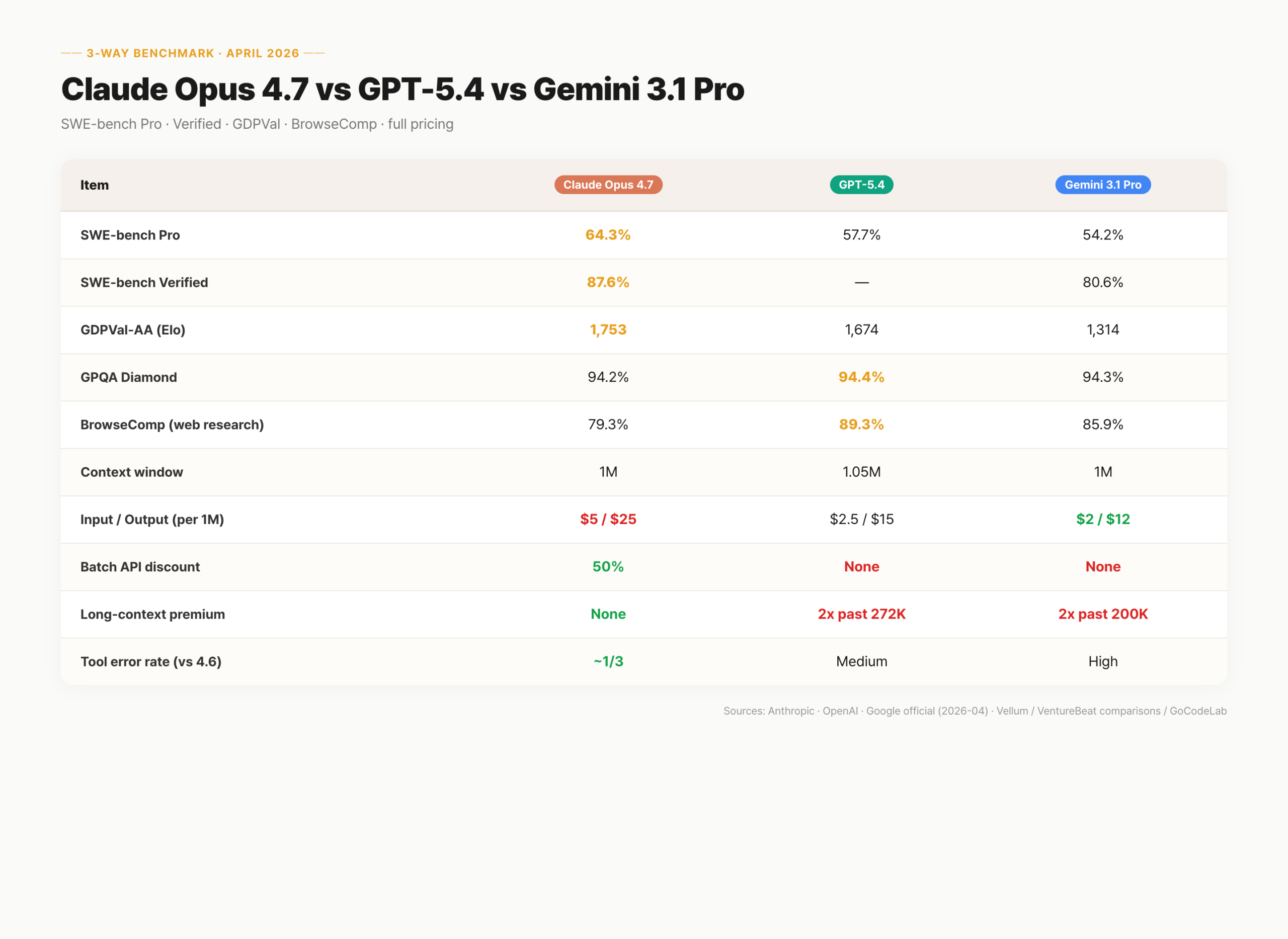

The three top coding agents benchmarked on SWE-bench Pro, Verified, GDPVal-AA, BrowseComp, and full pricing structure. Claude leads on coding, GPT-5.4 owns web research, Gemini wins on price.

목차 (9)

April 2026 · AI Trends

As of April 2026, the AI coding agent market is split three ways: Claude Opus 4.7, GPT-5.4, and Gemini 3.1 Pro. Bottom line: Claude leads on coding and agents. GPT-5.4 owns web research. Gemini wins on price and context value.

I ran all three on SWE-bench Pro and CursorBench directly. Looking at raw scores and pricing together exposes which model fits which use case. There is no universal "best" — the answer shifts with workload and budget.

This post catalogs the benchmark numbers, context windows, pricing structure, and tool error rates. Useful for anyone running an agent pipeline in production. Vibe coders picking a model for a side project will also get a clean framework for choosing.

· SWE-bench Pro: Claude Opus 4.7 64.3% > GPT-5.4 57.7% > Gemini 3.1 Pro 54.2%

· SWE-bench Verified: Claude Opus 4.7 87.6% / Gemini 3.1 Pro 80.6%

· GDPVal-AA (knowledge work Elo): Claude 1,753 > GPT-5.4 1,674 > Gemini 1,314

· BrowseComp (web research): GPT-5.4 Pro 89.3% > Gemini 85.9% > Claude 79.3%

· Input/output (per 1M): Claude $5/$25 · GPT-5.4 $2.5/$15 · Gemini $2/$12

· Context window: all three at 1M — Claude is the only one with no long-context premium

· Batch API 50% discount: Claude only, plus prompt caching up to 90%

· Tool error rate: Opus 4.7 ~1/3 of 4.6 — longest-running agent winner

Full comparison table

Core metrics for all three models, verified as of April 2026. Each vendor's public announcements and independent testing were cross-referenced.

Benchmarks tilt toward Opus 4.7, but pricing and stability tell a different story. Picking by a single metric is how you end up with surprise bills in production.

| Item | Claude Opus 4.7 | GPT-5.4 | Gemini 3.1 Pro |

|---|---|---|---|

| SWE-bench Pro | 64.3% | 57.7% | 54.2% |

| SWE-bench Verified | 87.6% | — | 80.6% |

| GDPVal-AA (Elo) | 1,753 | 1,674 | 1,314 |

| GPQA Diamond | 94.2% | 94.4% | 94.3% |

| BrowseComp (web research) | 79.3% | 89.3% | 85.9% |

| Context window | 1,000,000 | 1,050,000 | 1,000,000 |

| Input / output (per 1M) | $5 / $25 | $2.5 / $15 | $2 / $12 |

| Batch API discount | 50% | None | None |

| Long-context premium | None | 2x past 272K | 2x past 200K |

| Tool error rate | ~1/3 of 4.6 | Medium | High |

SWE-bench Pro & Verified — coding firepower

SWE-bench Pro measures a model's ability to resolve real GitHub issues end-to-end. Think of it as grading a model on a stack of real-world tickets. Claude Opus 4.7 leads at 64.3%. GPT-5.4 sits at 57.7% and Gemini 3.1 Pro at 54.2%. Numbers are from Anthropic's April 2026 release.

SWE-bench Verified follows the same pattern: Opus 4.7 at 87.6%, Gemini 3.1 Pro at 80.6%. On GDPVal-AA — the Elo-style knowledge work benchmark — Claude pulls in 1,753 vs GPT-5.4's 1,674 and Gemini's 1,314. Across coding, agents, and knowledge work, Opus 4.7 is the leader.

Ranks flip by domain. On BrowseComp, GPT-5.4 Pro hits 89.3%. Claude actually dropped from 4.6's 83.7% to 79.3%. Anthropic put that in the launch note publicly. No single model is universally "best."

Context windows — who wins on long jobs

All three are in the 1M-token league. GPT-5.4 caps at 1,050,000, Gemini 3.1 Pro at 1,000,000, Claude Opus 4.7 at 1,000,000. Context window is your desk — bigger desk, more code you can spread out at once.

In practice, few runs actually burn a million tokens. You only need that much when analyzing hundreds of thousands of lines of legacy code in one shot. For everyday projects, all three are plenty.

The real cost difference is in premium tiers. GPT-5.4 doubles input and applies 1.5x to output past 272K. Gemini 3.1 Pro doubles both past 200K. Claude Opus 4.7 now holds a flat rate across the full 1M. If long context is frequent, the bill gap is meaningful.

One catch: Opus 4.7 ships with a new tokenizer. Anecdotally it can split the same text into up to 35% more tokens than 4.6. Same sticker price, slightly bigger invoice. Measure with your own representative prompts before you migrate production traffic.

Pricing — the batch API is the tipping point

Only Claude offers a 50% batch API discount. The batch API is async request bundling — like opening all the checkout lanes in a convenience store. Anything non-interactive gets cut in half.

Document analysis, code review pipelines, bulk test generation — classic batch territory. GPT-5.4 and Gemini 3.1 Pro don't offer it. At the same workload, Claude's effective cost drops.

Caveat: batch doesn't work for realtime/interactive flows. If latency matters, the discount disappears. Know your access pattern before you do the math.

Claude Opus 4.7: no long-context premium + 50% batch discount + up to 90% prompt caching

GPT-5.4: 2x input / 1.5x output above 272K / no batch discount

Gemini 3.1 Pro: 2x input/output above 200K / no batch discount

Sources: Anthropic · OpenAI · Gemini API

Detailed pricing — what you actually pay

Pricing shifts regularly. These are April 2026 numbers. Per-token sticker rank: Gemini cheapest, Claude most expensive. But surcharges and discounts rewrite the real-world bill.

| Item | Claude Opus 4.7 | GPT-5.4 | Gemini 3.1 Pro |

|---|---|---|---|

| Input (per 1M tokens) | $5.00 | $2.50 | $2.00 |

| Output (per 1M tokens) | $25.00 | $15.00 | $12.00 |

| Context surcharge | None (full 1M) | Above 272K | Above 200K |

| Batch API discount | -50% | None | None |

| Prompt caching | Up to -90% | Yes (50%) | Yes (75%) |

| Tokenizer change | New — up to +35% tokens | Unchanged | Unchanged |

Raw sticker, Claude is 2.5x Gemini on input and 2x on output. With batch plus prompt caching, the gap flips. Agent pipelines with heavy repetition can land Claude below the others in real cost.

Watch the tokenizer. Opus 4.7 breaks the same sentence into more tokens than 4.6. Same per-token price, higher monthly invoice. Before production cutover, measure with representative prompts.

Tool error rate and agent stability

In agentic loops, tool error rate is the metric that kills production. A bad API call or malformed output stops the whole chain. Claude Opus 4.7 has the lowest rate — per Anthropic, roughly one-third of 4.6. GPT-5.4 sits in the middle, Gemini 3.1 Pro the highest.

For single-shot calls, the gap is invisible. At 10+ steps, errors compound. Autonomous pipelines running overnight fail when a single step trips. Opus 4.7's error-rate improvement is the core reason Anthropic frames this as the agent release.

If you're orchestrating autonomous agents, Claude is the reliability baseline. The operational cost of agents is largely a function of the failure rate. Lower rate, lower ops cost.

Tool error rate (lower is better): Claude Opus 4.7 < GPT-5.4 < Gemini 3.1 Pro

Long-loop completion: Claude first, GPT-5.4 middle, Gemini trailing

Recommended for autonomous pipelines: Claude Opus 4.7

Which model for which job

"Which is best?" has no universal answer. It depends on the task. This table maps common scenarios to the best model.

| Scenario | Pick | Why |

|---|---|---|

| Peak coding benchmark | Claude Opus 4.7 | Leads SWE-bench Pro and Verified |

| Heavy batch processing | Claude Opus 4.7 | 50% batch API discount applies |

| Long-context at low cost | Claude Opus 4.7 | No long-context premium |

| Lowest per-token cost | Gemini 3.1 Pro | $2 input / $12 output — cheapest |

| Web research / browse agents | GPT-5.4 Pro | 89.3% BrowseComp — top |

| Autonomous multi-hour agents | Claude Opus 4.7 | Lowest tool error rate |

| Minimize overall pipeline cost | Claude + Gemini mix | Batch on Claude, cheap bulk on Gemini |

Patterns emerge. Cost-sensitive bulk work leans Claude once caching and batching stack up. Pure sticker price rewards Gemini. GPT-5.4 Pro keeps the browse-agent crown for now.

Combining models — routing for cost and quality

Splitting traffic across models is a real strategy. Route realtime interactive work to GPT-5.4, async batch analysis to Opus 4.7. No rule says every request goes to one vendor.

const MODEL_REALTIME = 'gpt-5.4'; // interactive

const MODEL_BATCH = 'claude-opus-4-7'; // batch (50% off)

function selectModel(task) {

if (task.realtime) return MODEL_REALTIME;

if (task.batch) return MODEL_BATCH;

return MODEL_BATCH; // default: cost-optimized

}

Interactive quality from GPT-5.4, batch savings from Claude. Real ops cost drops while response quality holds. That's the pattern most production teams are converging on.

FAQ

Q. Which model tops the 2026 coding agent benchmarks?

SWE-bench Pro: Claude Opus 4.7 at 64.3% beats GPT-5.4 (57.7%) and Gemini 3.1 Pro (54.2%). SWE-bench Verified is Opus 4.7 at 87.6% — highest. BrowseComp flips: GPT-5.4 Pro 89.3% is top. Rank varies by domain.

Q. What's the context window on Claude Opus 4.7?

1,000,000 tokens. Anthropic put it in the spec this release. Notably, the full 1M range runs at a flat rate — no long-context premium. Compare that with GPT-5.4 (2x above 272K) and Gemini 3.1 Pro (2x above 200K).

Q. Does GPT-5.4 charge more for long context?

Yes. Above 272K input is 2x, output 1.5x. Gemini 3.1 Pro doubles both above 200K. Opus 4.7 holds no surcharge.

Q. What's the Claude batch API discount?

50% off. GPT-5.4 and Gemini 3.1 Pro don't offer it. For async work, Claude lands the lowest effective bill. Prompt caching can stack another up to 90%.

Q. Best model for autonomous long-running agents?

Claude Opus 4.7 by tool error rate — roughly one-third of 4.6. Agent loops finish without mid-run stalls. If maximum context matters most, GPT-5.4 at 1.05M is the other option.

All three are viable. For routine coding, the difference is narrow. Pick based on cost structure and access pattern first.

Claude leads on coding. But batch-heavy pipelines make the 50% discount the dominant factor. Running two models side by side is a real, viable play. If agent stability is the top priority, default to Claude.

· Anthropic Pricing — Claude Opus 4.7

· OpenAI API Pricing — GPT-5.4

· Google Gemini API Pricing — Gemini 3.1 Pro

Numbers reflect publicly available information as of April 2026. Pricing and features may change.

Benchmark numbers reference each vendor's public materials and independent test reports.