OpenAI Foundation — What Will They Do with $1 Billion?

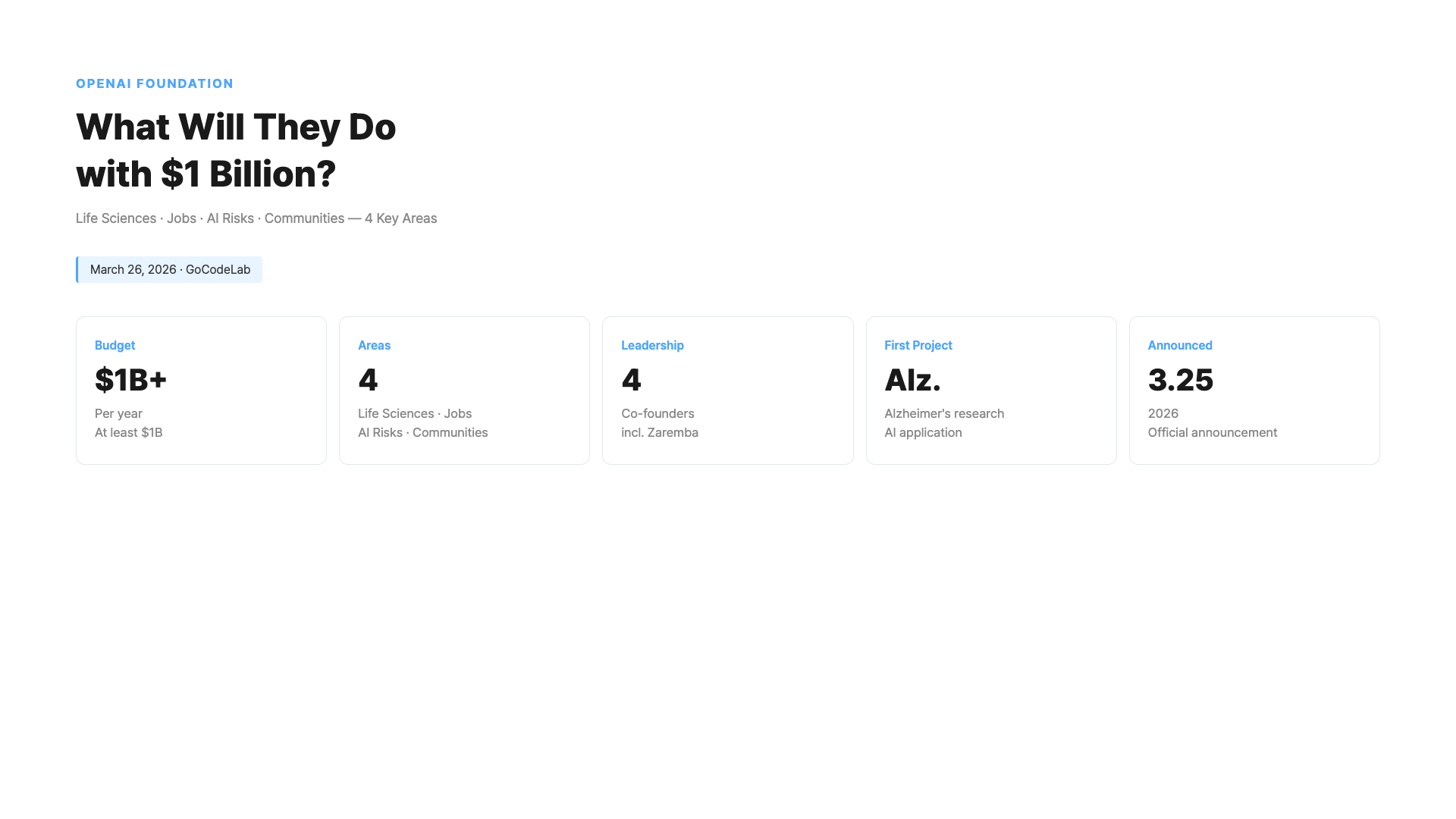

OpenAI announced a $1 billion investment through its nonprofit foundation. From job displacement and Alzheimer's research to AI risk response — here's why Sam Altman personally warned about AI threats and what the foundation plans to do.

March 26, 2026 · Trends

On March 25, OpenAI unexpectedly announced it would commit $1 billion. This isn’t a simple donation: the company is funding a nonprofit foundation whose job is to take responsibility for the damage AI could cause.

What stands out is Sam Altman’s statement. In the official announcement he wrote that “AI will bring new threats to society.” It’s rare for the CEO of a leading AI company to issue this kind of warning.

There’s another reason the foundation is getting attention. OpenAI recently converted from a nonprofit to a public benefit corporation (a for-profit structure). During that process, critics charged that it had “become just a money-making company.” This $1 billion foundation announcement is partly a response to that criticism.

- What Is the OpenAI Foundation?

- $1 Billion Across 4 Focus Areas

- Using AI for Alzheimer’s Research?

- “No Company Can Solve AI Threats Alone” — Sam Altman’s Warning

- From Nonprofit to For-Profit, and Back to a Foundation?

- Is $1 Billion Enough? — Critics Say No

- Jobs Lost to AI — How Will They Respond?

- FAQ

- Wrap-Up

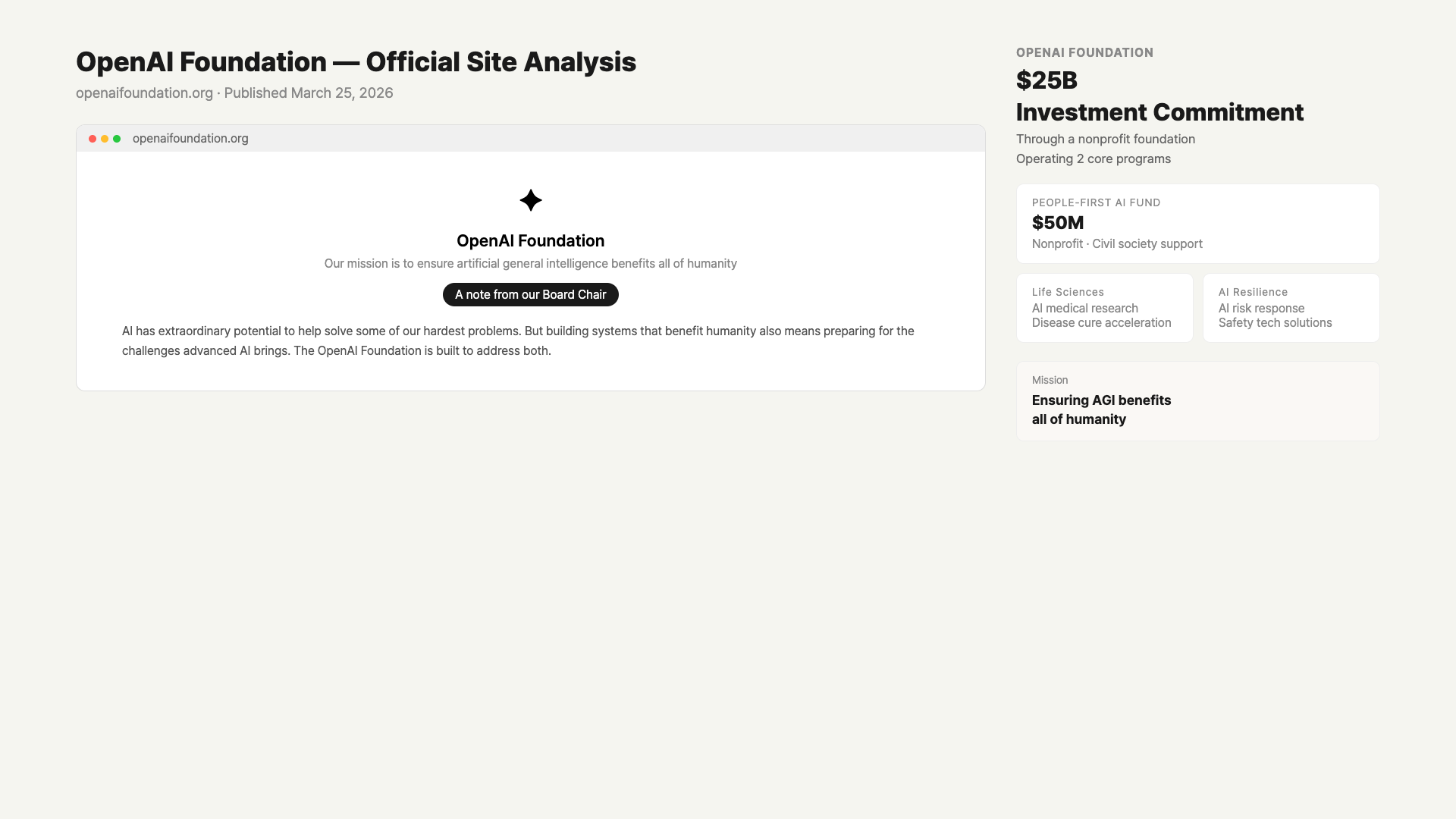

What Is the OpenAI Foundation?

The OpenAI Foundation is OpenAI’s nonprofit arm, but this announcement marks a change. Until now, the foundation operated quietly in the background. This is the first time it has officially appointed independent leadership and disclosed an annual budget.

The foundation is a nonprofit organization that remains separate from OpenAI even after the company’s transition to a public benefit corporation. It is structured so that a portion of headquarters revenue flows to the foundation to fund its social-responsibility work. In simple terms, a “money-making OpenAI” and a “problem-solving OpenAI Foundation” now coexist.

Four leaders were appointed: Jacob Trefethen for life sciences, Anna Makanju for civil society and philanthropy, Wojciech Zaremba for AI risk, and Jeff Arnold for operations. Zaremba is an OpenAI co-founder, and it’s highly unusual for a co-founder to leave the for-profit side to join a foundation.

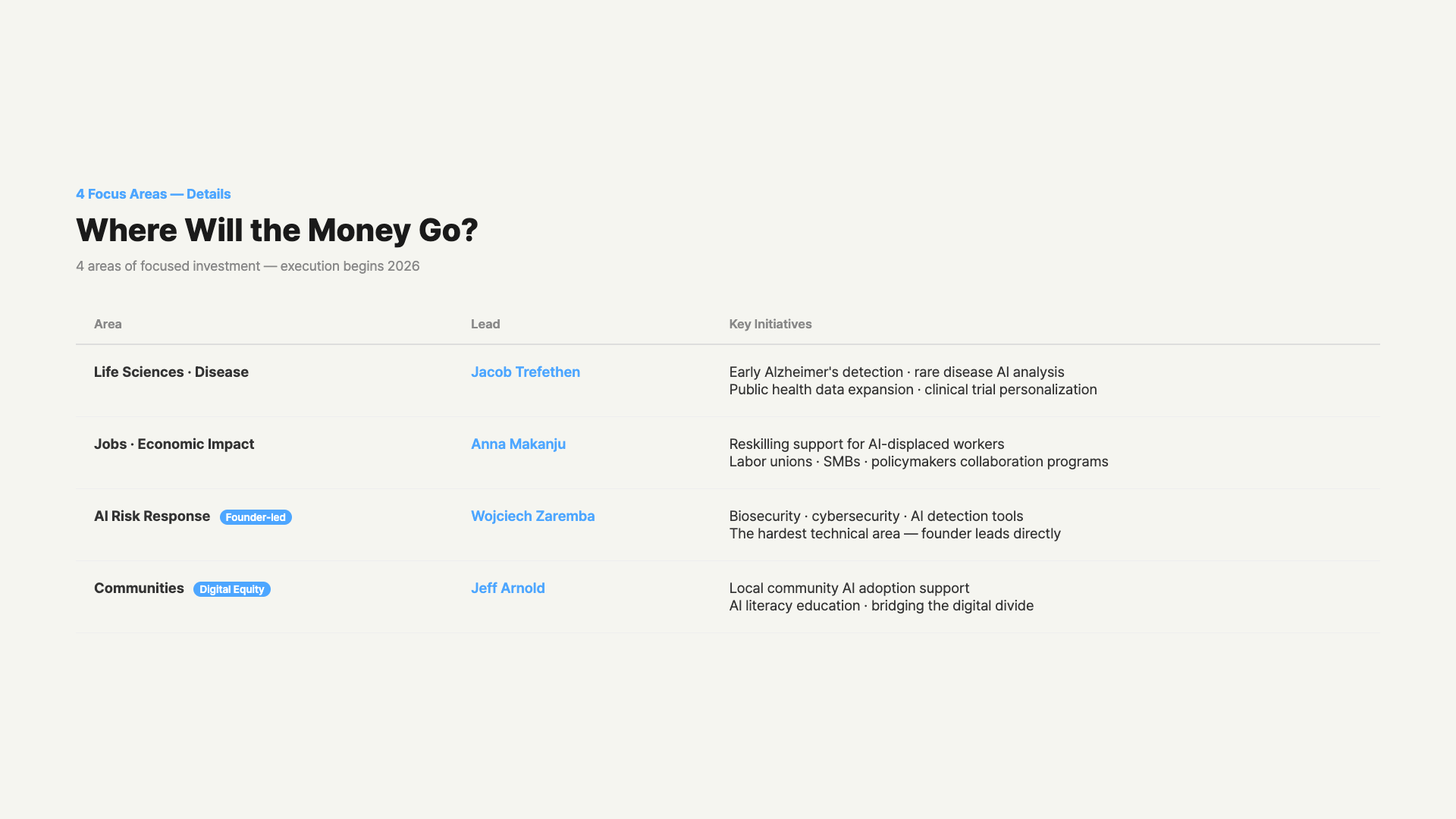

$1 Billion Across 4 Focus Areas

The foundation disclosed four spending categories. Here’s where the money goes and why each area matters.

First, life sciences and disease research. Key priorities include Alzheimer’s, high-mortality diseases, and expanding public health data. The idea is to leverage AI’s ability to analyze complex medical data quickly.

Second, responding to job and economic impacts. Funding goes to programs that soften the social shock of AI rapidly replacing jobs. Specific methods haven’t been fully disclosed yet, but job training support is a likely component.

Third, AI risk response. This covers biological threats, cybersecurity, and complex social problems that AI could create. Zaremba personally leads this area, the most technically demanding of the four.

Fourth, community programs. This includes support to help local communities adapt to AI-driven change. It will likely focus on narrowing the digital divide and improving AI literacy.

Using AI for Alzheimer’s Research?

Among the foundation’s life sciences work, Alzheimer’s research stands out, and there’s a reason the foundation listed it first. Alzheimer’s has intricately tangled causes: protein accumulation, genetic factors, and lifestyle habits are all connected. Researchers have been accumulating data for decades, but finding patterns in it hasn’t been easy.

This is where AI’s strengths come in. AI can find patterns in vast clinical datasets that humans might miss. It’s already being used for biomarker detection, disease pathway mapping, and personalizing clinical trials. The foundation plans to work with partner research labs to develop AI tools for Alzheimer’s. A cure is still far off, but improving the accuracy of early detection is a realistic goal, and earlier detection means a better quality of life for patients.

“No Company Can Solve AI Threats Alone” — Sam Altman’s Warning

The most striking part of this announcement was Sam Altman’s statement. In the official announcement, he wrote directly that “AI will bring new threats to society,” and he named three specific concerns. First, novel biological threats: as AI advances, new types of dangerous biological threats could become possible. Second, the speed of economic change: if AI transforms the economy too quickly, society can’t keep up. Third, complex emergent effects: as AI models become more powerful, unpredictable social changes can emerge.

Altman stated that “no single company can solve this problem alone” and called for a society-wide response. It’s unusual for an AI company CEO to put this kind of warning in a public announcement, and it signals that OpenAI views the situation seriously.

From Nonprofit to For-Profit, and Back to a Foundation?

OpenAI started as a nonprofit in 2015 but recently converted to a public benefit corporation. During this process, several figures, including Elon Musk, pushed back hard, arguing that “it ultimately became a money-making company.” The foundation needs to be understood in this context. OpenAI decided to keep its nonprofit arm after the for-profit transition, allocated $1 billion to it, and gave it independent leadership.

Viewed critically, there’s an element of image management here. Whether the $1 billion is spent meaningfully, and whether the results are disclosed transparently, remains to be seen. Still, co-founder Zaremba personally stepping up to lead AI risk work can’t be taken lightly: a genuine technical leader is taking on that responsibility.

| Area | Leader | Key Priorities |
|---|---|---|
| Life Sciences / Disease Research | Jacob Trefethen | Alzheimer’s, high-mortality diseases, public health data |
| Jobs / Economic Impact | Anna Makanju | Career transition support, community programs |
| AI Risk Response | Wojciech Zaremba | Biological threats, cybersecurity, AI emergent effects |
| Operations | Jeff Arnold | Foundation operations with CFO Robert Kaiden |

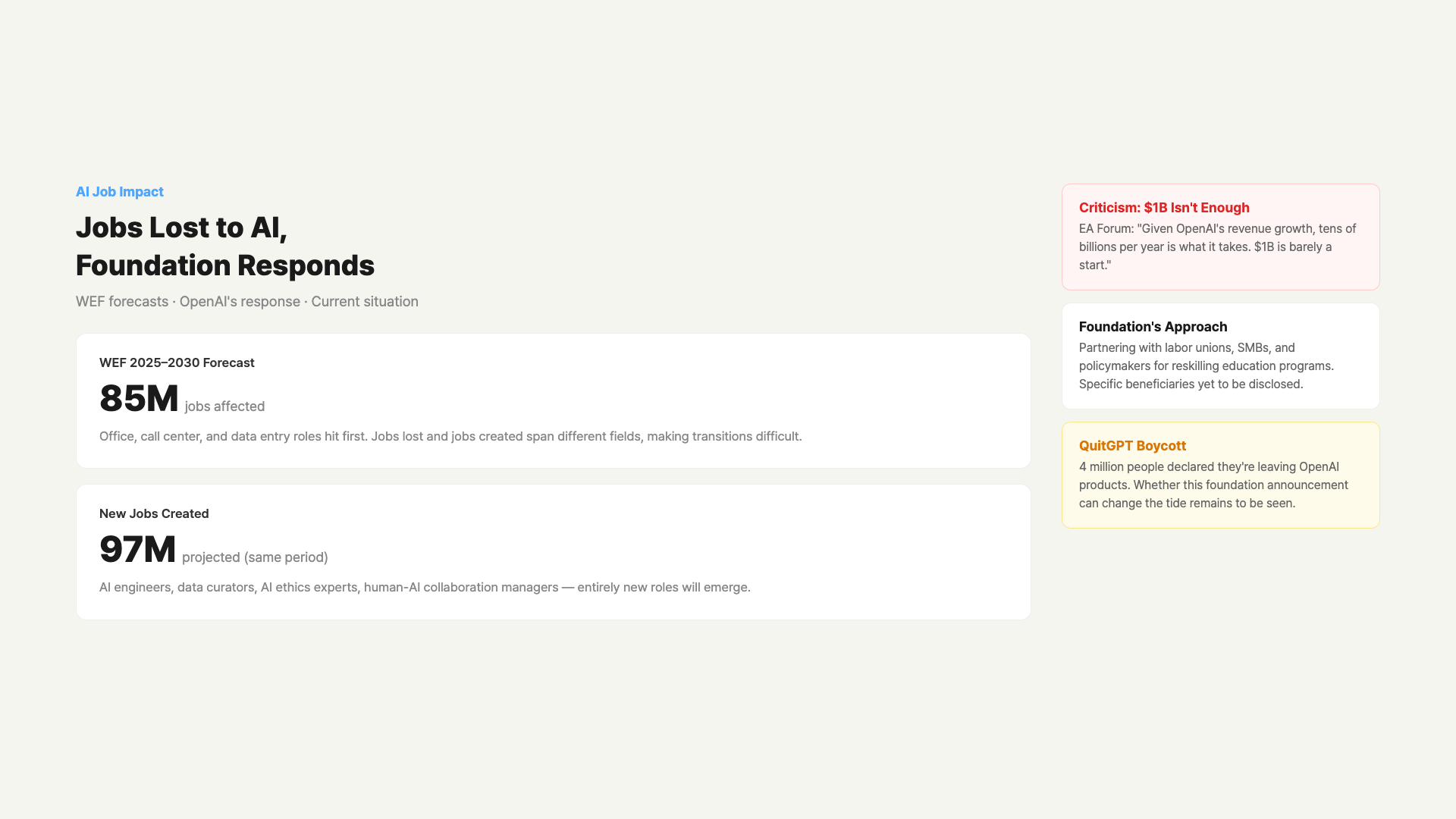

Is $1 Billion Enough? — Critics Say No

The foundation announcement wasn’t met with praise alone. Criticism that “it’s too little” emerged quickly. On the Effective Altruism Forum (EA Forum), a post arguing that “$1B isn’t even a starting point” drew attention. The argument goes like this: OpenAI’s revenue is growing rapidly, roughly 3x, and the foundation’s budget is too small relative to the company’s social impact. “If they really believe AI is that dangerous, they should be spending tens of billions per year.” $1 billion is less than 5% of OpenAI’s annual revenue.

Fortune framed the announcement as “OpenAI pledges $1 billion to mitigate the jobs it will destroy.” The framing is sharp: OpenAI profits from AI while “managing” the damage AI causes. From this perspective, the foundation is risk management, not accountability.

Jobs Lost to AI — How Will They Respond?

Of the foundation’s four focus areas, “jobs and economic impact” is the one that affects most people directly. Specific execution plans haven’t been disclosed yet, but the direction is clear. The foundation said it would bring together labor unions, small businesses, economists, civil society organizations, and policymakers to address “the job impact created by AI.” Job training support, reskilling programs, and efforts to strengthen local economic resilience are expected.

In the US, the sectors hit first and hardest are data entry, call centers, and routine office work. What about the rest of the world? Many countries are already seeing rapid AI automation, with AI adoption in service industries quickly following robotization in manufacturing. It may take time for the OpenAI Foundation’s support to reach people outside the US, but the pace of job restructuring won’t wait. If you work in a field where the AI transition is moving fast, it makes sense to start planning your response now.

FAQ

Q. Why announce the foundation now?

This comes right after OpenAI’s transition from a nonprofit to a public benefit corporation (PBC). During that transition, critics charged that it had “ultimately become just a money-making company,” and the foundation announcement is effectively a response to that criticism. It also coincides with the growing QuitGPT boycott (4 million participants). The timing clearly has a public relations element, which is one reason some view the announcement skeptically.

Q. Is the OpenAI Foundation different from OpenAI headquarters?

Strictly speaking, they’re different organizations. OpenAI headquarters (the PBC) runs commercial operations like ChatGPT and the API. The foundation operates as a nonprofit and receives a portion of headquarters revenue to invest in social challenges. When headquarters makes money, the foundation channels some of it back to society. The two organizations coexist with separate roles.

Q. Will the full $1 billion actually be spent?

The official goal is to spend “at least” $1 billion over one year. The words “at least” matter: more could be allocated depending on circumstances. How transparently the foundation will disclose its spending remains to be seen; nonprofits typically publish spending records in annual reports.

Q. Does AI actually help with Alzheimer’s research?

A cure is still a long way off, but AI’s ability to analyze large-scale medical data is already recognized in numerous studies. Real results are emerging in areas like drug candidate screening, clinical trial participant matching, and biomarker detection. If the foundation concentrates its investment here, meaningful progress is possible.

Q. Can people outside the US benefit from this foundation?

The current plans disclose nothing about international programs. Initial investments will likely focus on US-based research labs and organizations. However, AI job displacement and biological threats are global issues, so international cooperation could expand over the long term.

Q. Why did Wojciech Zaremba, an OpenAI co-founder, join the foundation?

The official announcement didn’t detail his reasons. However, Zaremba is known for his strong interest in AI safety and risk. Choosing to lead AI risk work at the foundation rather than on the commercial side signals that he considers the problem highly important. A founder taking on a social role instead of running the for-profit business directly is rare, even in Silicon Valley.

Wrap-Up

OpenAI committing $1 billion is certainly a big number. But whether it leads to real change or remains a declaration meant to ease criticism, only time will tell. The real test is checking where and how the $1 billion was spent a year from now.

One thing is clear. In March 2026, the leading company in the AI industry officially acknowledged that “AI can be dangerous” and stepped up to take organizational responsibility. OpenAI itself has admitted that AI is no longer just a technology tool but a force that is changing society as a whole. The foundation is expected to disclose its results going forward. Whether Alzheimer’s research yields meaningful progress, and whether the job impact programs actually work, there’s now good reason to keep watching.

At GoCodeLab, we verify AI industry news firsthand and share honest summaries. Subscribe so you don’t miss out.

This article was written on March 26, 2026. Details of the OpenAI Foundation’s actual spending may be updated later. Base any financial or investment decisions on official sources.