Meta Muse Spark vs GPT-5.4 vs Gemini 3.1 Pro — 2026 Big Tech AI Showdown

Meta has launched Muse Spark. We compare it with GPT-5.4 and Gemini 3.1 Pro on benchmarks, pricing, and context window.

April 2026 · AI Trends

Meta has entered the race with Muse Spark. Rather than extending the Llama series, it built an entirely new model, the first product from Meta Superintelligence Labs (MSL). And this time, it isn't open source.

Its rivals are OpenAI's GPT-5.4 and Google's Gemini 3.1 Pro. As of April 2026, these three are the top AI models from big tech, and each heads in a different direction: one is free, one leads in coding, and one offers a 2-million-token context window.

We compared benchmark scores, pricing, context windows (the amount of text an AI can read at once), and unique features head to head. The bottom line: "It depends on what you need."

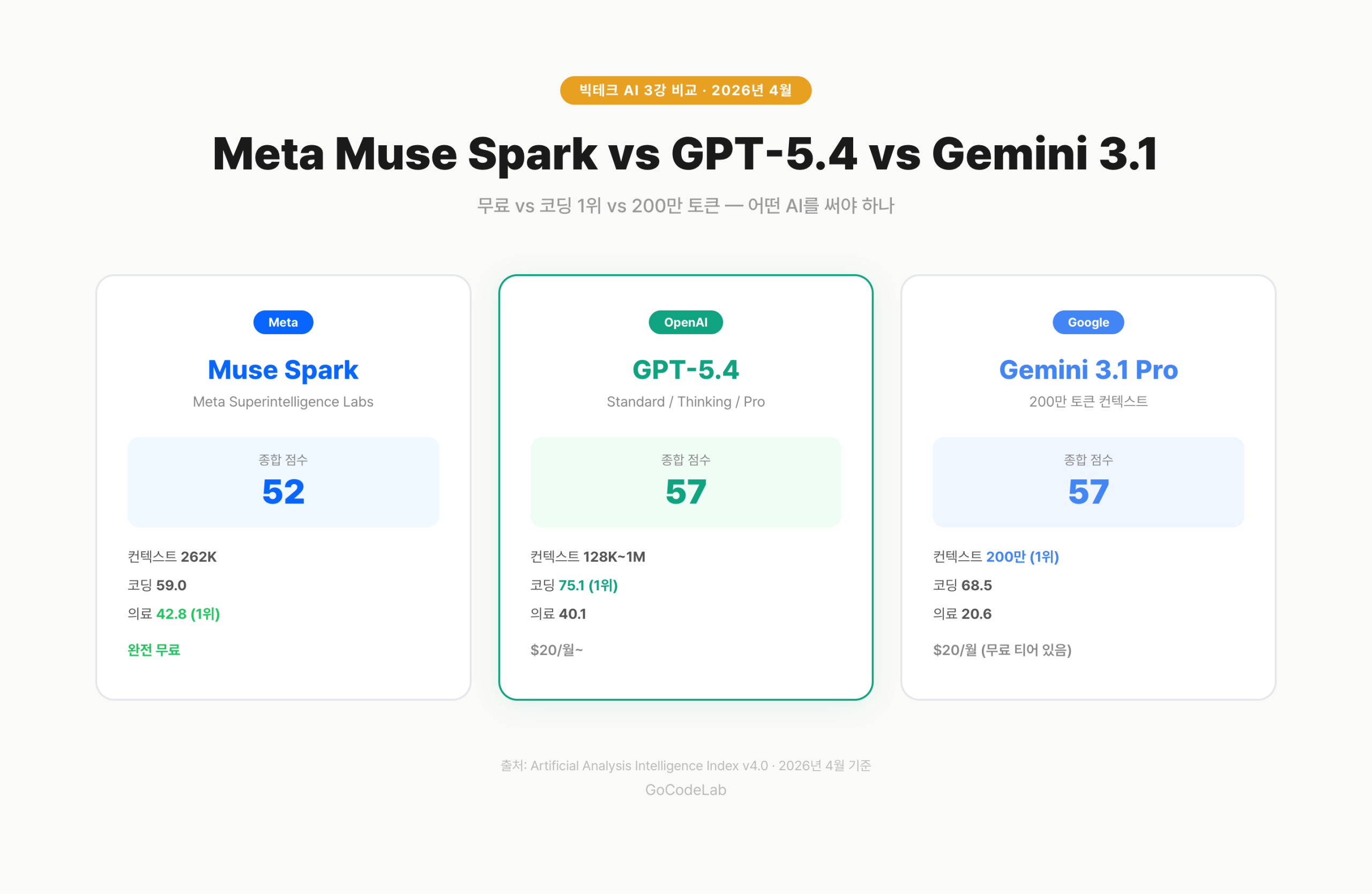

Quick Summary

• Meta Muse Spark — Free, multimodal, Contemplating mode (parallel reasoning), #1 in healthcare

• GPT-5.4 — Tied for #1 overall (57 pts), dominant #1 in coding benchmarks

• Gemini 3.1 Pro — Tied for #1 overall (57 pts), 2M token context, built-in code execution

• Overall: GPT-5.4 = Gemini 3.1 Pro (57) > Claude Opus 4.6 (53) > Muse Spark (52)

• For free use, Muse Spark. For coding, GPT-5.4. For large-scale analysis, Gemini.

1. Full Comparison — At a Glance

Here are the core specs of all three models.

| Category | Muse Spark | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|
| Developer | Meta (MSL) | OpenAI | Google |
| Overall Score | 52 pts | 57 pts | 57 pts |
| Context Window (tokens) | 262K | 128K–1M | 2M |
| Multimodal | Text, Image, Voice | Text, Image, Voice | Text, Image, Voice, Video |
| Coding (Terminal-Bench) | 59.0 | 75.1 | 68.5 |
| Free Access | Completely free | Limited free tier (Plus $20/mo) | Free tier available |
| Open Source | Closed | Closed | Closed |
| Unique Feature | Contemplating mode | Thinking/Pro mode | Built-in code execution |

By the numbers, GPT-5.4 and Gemini 3.1 Pro are tied for first. Muse Spark trails in overall score. But the "free" card is powerful. Let's break each one down below.

2. Meta Muse Spark — Free with Contemplating

Muse Spark is the first model from Meta Superintelligence Labs (MSL), which is led by Alexandr Wang (former CEO of Scale AI). Its architecture is completely different from the Llama series.

The biggest draw is that it's completely free. Anyone can use it on meta.ai and the Meta AI app. No credit card required. It will soon be available on Facebook, Instagram, and WhatsApp as well.

There are three reasoning modes: Instant (fast responses), Thinking (deep analysis), and Contemplating (parallel agents). In Contemplating mode, multiple reasoning agents tackle the same problem simultaneously, each trying a different approach, and the best answer is selected. Think of it as a panel of experts debating.

Contemplating is Meta's counterpart to deep reasoning features such as Google's Gemini Deep Think and OpenAI's GPT Pro. Muse Spark scored 50.2% on Humanity's Last Exam (HLE).
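To make the "many agents, pick the best" idea concrete, here is a minimal best-of-N sketch. Meta hasn't published how Contemplating mode actually selects answers, so everything here is an assumption: each "agent" is just a Python function (in a real system it would be a separate model call), the problem is a toy square-root approximation, and `score`, `contemplate`, and the three strategies are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Agent = Callable[[float], float]

# Three hypothetical "agents", each a different strategy for one toy problem:
# approximating the square root of x.
def newton(x: float) -> float:
    guess = 1.0
    for _ in range(20):
        guess = 0.5 * (guess + x / guess)
    return guess

def bisection(x: float) -> float:
    lo, hi = 0.0, max(1.0, x)
    for _ in range(20):
        mid = (lo + hi) / 2
        if mid * mid < x:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def halve(x: float) -> float:
    return x / 2  # deliberately weak strategy

def score(x: float, answer: float) -> float:
    # Hypothetical verifier: higher is better, so negate the error of answer**2.
    return -abs(answer * answer - x)

def contemplate(x: float, agents: list[Agent]) -> tuple[float, list[float]]:
    # Run every agent on the same problem in parallel...
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        answers = list(pool.map(lambda agent: agent(x), agents))
    # ...then keep the answer the verifier scores highest.
    best = max(answers, key=lambda a: score(x, a))
    return best, answers

best, candidates = contemplate(2.0, [newton, bisection, halve])
print(f"candidates: {candidates}")
print(f"best: {best:.6f}")
```

The weak `halve` strategy still runs, but the verifier discards it. The quality of the whole approach hinges on the scoring step, which is why deep reasoning modes spend extra compute on it.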

Its weaknesses are clear. It falls well behind GPT-5.4 and Gemini in coding (Terminal-Bench 59.0) and abstract reasoning (ARC AGI 2: 42.5). On the other hand, it ranked #1 of the three in healthcare (HealthBench Hard 42.8). Its strengths simply lie in a different direction.

3. GPT-5.4 — Current #1 in Coding and Reasoning

GPT-5.4 is OpenAI's latest flagship. It has three modes: Standard, Thinking, and Pro. It scored 57 on the Artificial Analysis Intelligence Index, tied with Gemini 3.1 Pro for first place.

It dominates coding. Its Terminal-Bench score of 75.1 leads second-place Gemini (68.5) by 6.6 points. It also ranked #1 on real-world work tasks (GDPval-AA) with 1,672 ELO, ahead of Muse Spark's 1,444. Of the three, it's the strongest choice for writing code and fixing bugs.

The downside is price. ChatGPT Plus costs $20/month, the free tier is limited, and Pro mode costs extra. That's a sharp contrast with Muse Spark, which is completely free.

The context window ranges from 128K to 1M tokens depending on the plan. That falls short of Gemini's 2 million tokens, but 128K is enough for most tasks.

4. Gemini 3.1 Pro — 2M Tokens and Code Execution

Gemini 3.1 Pro is Google's latest model. It scored 57 overall, tied with GPT-5.4, but its strengths are different.

Its biggest weapon is the 2-million-token context window, roughly 15 books in a single prompt. That makes it very strong for large document analysis and full codebase reviews, and it's far ahead of GPT-5.4 (128K–1M) and Muse Spark (262K).
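Whether a document fits depends on its token count, which you can roughly estimate before picking a model. The sketch below assumes the common rule of thumb of about 4 characters per token for English text (real tokenizers vary by model and language), treats "K" as 1,000, and reserves a hypothetical 8,000 tokens for the model's reply. The window sizes are the ones listed in this article.

```python
# Context windows as listed in this article (approximate; "K" taken as 1,000).
CONTEXT_WINDOWS = {
    "Muse Spark": 262_000,
    "GPT-5.4 (128K plan)": 128_000,
    "GPT-5.4 (1M plan)": 1_000_000,
    "Gemini 3.1 Pro": 2_000_000,
}

CHARS_PER_TOKEN = 4  # rough rule of thumb for English; real tokenizers vary

def estimate_tokens(text: str) -> int:
    # Ceiling division so a partial token still counts as one.
    return -(-len(text) // CHARS_PER_TOKEN)

def models_that_fit(text: str, reserve_for_output: int = 8_000) -> list[str]:
    # Leave room for the answer as well as the prompt (hypothetical reserve).
    needed = estimate_tokens(text) + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]

# Stand-in for a ~200-page paper at ~3,000 characters per page (assumption).
paper = "x" * (200 * 3_000)
print(estimate_tokens(paper), models_that_fit(paper))
```

Under these assumptions a 200-page paper comes to about 150K tokens: too much for a 128K window, but comfortable for the other three options.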

Its multimodal coverage is also the widest. It natively handles text, images, audio, and video. Gemini is the only one of the three that can analyze video directly.

Gemini 3.1 Pro has a Sandboxed Code Execution tool built in. The AI can write, run, and verify code results during a conversation. Instead of using a separate calculator, the AI runs the code itself to produce accurate answers.
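The write, run, and verify loop can be sketched in a few lines. This is only an illustration of the idea, not Google's implementation: the "generated" code is hard-coded, and `run_snippet` is a hypothetical helper that runs it in a separate Python process. A separate process is not a real sandbox; production systems add filesystem, network, and resource isolation that this sketch lacks.

```python
import subprocess
import sys

def run_snippet(code: str, timeout_s: float = 5.0) -> str:
    """Run Python code in a separate interpreter process and return its stdout.

    NOTE: a separate process is NOT a security sandbox; real sandboxed
    execution adds filesystem/network isolation and resource limits.
    """
    result = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True,
        text=True,
        timeout=timeout_s,
    )
    if result.returncode != 0:
        raise RuntimeError(result.stderr.strip())
    return result.stdout.strip()

# The model "writes" code for an arithmetic question (sum of squares 1..100),
# runs it, and uses the printed result instead of guessing the number.
generated = "print(sum(i * i for i in range(1, 101)))"
answer = run_snippet(generated)
print(answer)
```

The point is that the number comes from actually executing code rather than from the model's next-token guess, which is why code execution helps on arithmetic-heavy questions.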

In abstract reasoning (ARC AGI 2), it scored 76.5, narrowly ahead of GPT-5.4 (76.1). In coding, it trailed at 68.5 vs. GPT-5.4's 75.1. Pricing is $20/month for Gemini Advanced, and a free tier exists.

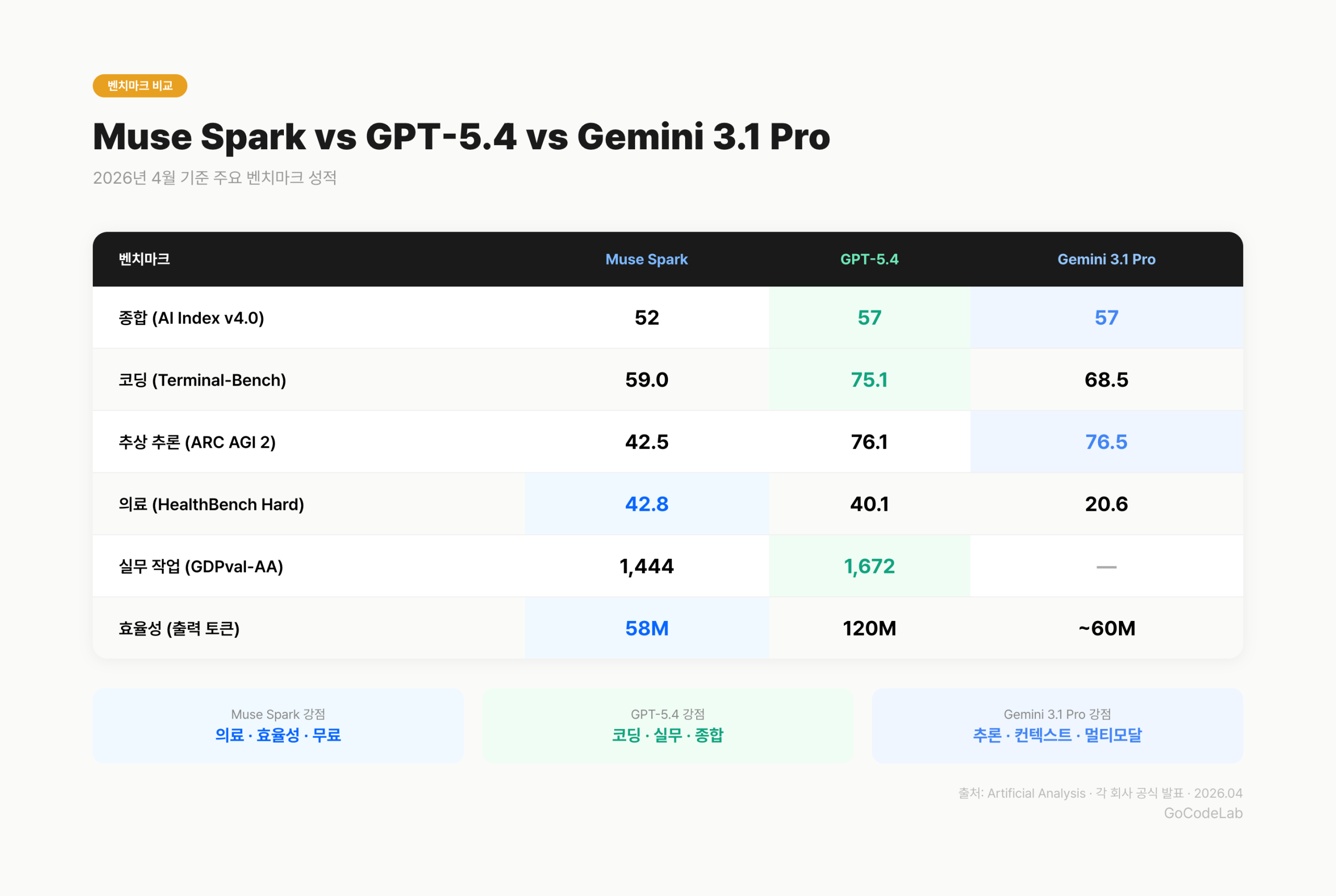

5. Detailed Benchmark Comparison

Here are the benchmarks (AI performance tests — think of them like standardized exam scores) broken down by category. Sources are Artificial Analysis and each company's official announcements.

| Benchmark | Muse Spark | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|
| Overall (AI Index v4.0) | 52 | 57 | 57 |
| Coding (Terminal-Bench) | 59.0 | 75.1 | 68.5 |
| Abstract Reasoning (ARC AGI 2) | 42.5 | 76.1 | 76.5 |
| Healthcare (HealthBench Hard) | 42.8 | 40.1 | 20.6 |
| Deep Reasoning (HLE) | 50.2% | — | — |
| Real-world Tasks (GDPval-AA) | 1,444 ELO | 1,672 ELO | — |

Muse Spark leads in healthcare and posts 50.2% on HLE, though no HLE scores are listed here for the other two. GPT-5.4 leads in coding and real-world tasks. Gemini 3.1 Pro has a slight edge in abstract reasoning. No single model dominates every category.

Efficiency is also worth noting. Muse Spark used 58M output tokens across the full evaluation, less than half of GPT-5.4 (120M) and Claude Opus 4.6 (157M). In other words, it reaches its score while generating far fewer tokens.
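The "less than half" claim is easy to check with the output-token figures above. This short calculation uses only the numbers from this article; `relative_usage` is just an illustrative helper name.

```python
# Output tokens used across the full evaluation, in millions (figures from this article).
OUTPUT_TOKENS_M = {"Muse Spark": 58, "GPT-5.4": 120, "Claude Opus 4.6": 157}

def relative_usage(baseline: str, tokens: dict[str, float]) -> dict[str, float]:
    """Baseline model's token usage as a fraction of each other model's usage."""
    base = tokens[baseline]
    return {name: base / used for name, used in tokens.items() if name != baseline}

ratios = relative_usage("Muse Spark", OUTPUT_TOKENS_M)
for name, ratio in ratios.items():
    print(f"Muse Spark used {ratio:.0%} as many output tokens as {name}")
```

This prints roughly 48% for GPT-5.4 and 37% for Claude Opus 4.6, so the claim holds against both.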

6. Pricing Comparison

The price difference is the key to choosing.

| Category | Muse Spark | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|
| Free Plan | Completely Free | Limited free tier | Free tier available |
| Base Paid Plan | — | $20/mo (Plus) | $20/mo (Advanced) |
| API | Partner preview | Pay-as-you-go | Pay-as-you-go |
| Free Context | 262K | Limited | 1M (Gemini Advanced) |

Muse Spark costs nothing, and that's its biggest advantage. GPT-5.4 and Gemini 3.1 Pro both require $20/month or more for full access. That's why Muse Spark is attractive to students and individual users.

However, its API is still in partner preview, so developers who want to integrate a model into their apps need to use the GPT-5.4 or Gemini APIs for now. Muse Spark API pricing hasn't been announced yet.

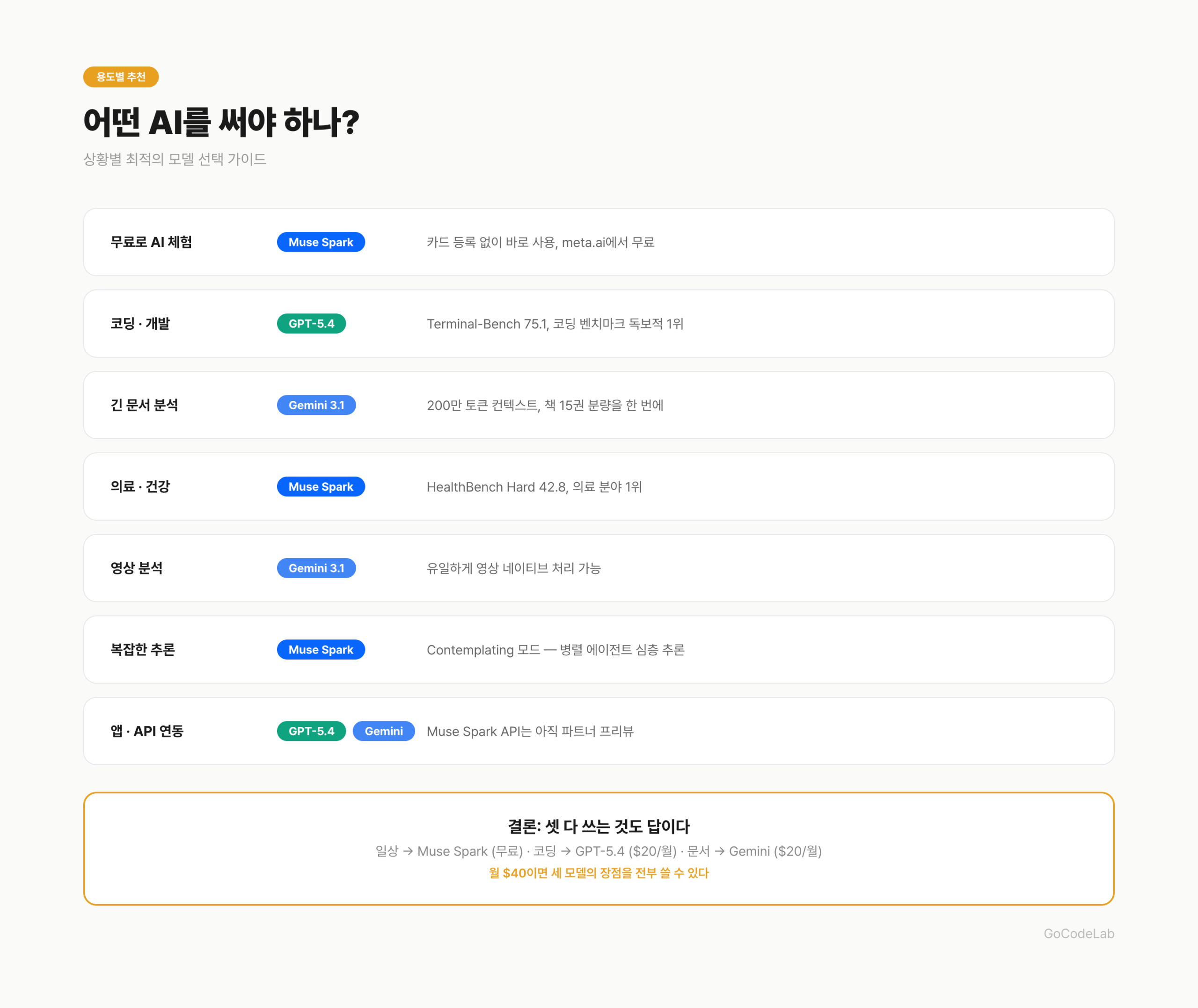

7. Recommendations by Use Case

| Scenario | Pick | Why |
|---|---|---|
| Want to try AI for free | Muse Spark | No credit card, instant access |
| Coding & development | GPT-5.4 | Terminal-Bench 75.1, dominant #1 |
| Long documents & papers | Gemini 3.1 Pro | 2M tokens, about 15 books at once |
| Healthcare & medical questions | Muse Spark | HealthBench Hard 42.8, #1 |
| Video analysis | Gemini 3.1 Pro | Only one of the three with native video input |
| Complex reasoning & math | Muse Spark | Contemplating mode for deep reasoning (HLE 50.2%) |
| Integrating AI into apps | GPT-5.4 / Gemini | Muse Spark API not yet public |

"If I had to pick just one?" — that's a hard question to answer. For coding, GPT-5.4. For large-scale work, Gemini. For free, Muse Spark. When the purpose is clear, the choice is easy.

8. How to Use All Three Together

Using all three is also an option. In practice, many users combine them.

Everyday questions

→ Muse Spark (free)

→ "What's the weather today?" "What does this word mean?"

Coding & development

→ GPT-5.4 (Plus $20/mo)

→ "Refactor this code" "Fix this bug"

Documents & research

→ Gemini 3.1 Pro (Advanced $20/mo)

→ "Summarize this 200-page paper" "Analyze this video"

With this approach, $40/month (GPT Plus + Gemini Advanced) gives you the strengths of all three. Everyday tasks go to Muse Spark for free — you only pull out the paid tools for specialized work.

Developers can combine APIs too. Use Gemini Flash (cheap) for lightweight tasks, GPT-5.4 API for coding, and Gemini 3.1 Pro API for large-scale processing. That's how you optimize costs.
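A simple way to apply this is a router that picks a model per request. The sketch below is hypothetical throughout: the task categories, the 128K cutoff, and the model strings are illustrative placeholders, not real API model identifiers (check each provider's documentation for those), and no API is actually called.

```python
from enum import Enum

class Task(Enum):
    LIGHTWEIGHT = "lightweight"
    CODING = "coding"
    LONG_CONTEXT = "long_context"

# Placeholder labels, NOT real API model identifiers.
ROUTES = {
    Task.LIGHTWEIGHT: "gemini-flash",
    Task.CODING: "gpt-5.4",
    Task.LONG_CONTEXT: "gemini-3.1-pro",
}

LONG_CONTEXT_THRESHOLD = 128_000  # hypothetical cutoff in estimated tokens

def route(task: Task, estimated_tokens: int) -> str:
    # Anything too large for a 128K window goes to the long-context model,
    # regardless of task type; otherwise route by task.
    if estimated_tokens > LONG_CONTEXT_THRESHOLD:
        return ROUTES[Task.LONG_CONTEXT]
    return ROUTES[task]

print(route(Task.CODING, 5_000))
print(route(Task.CODING, 400_000))
print(route(Task.LIGHTWEIGHT, 800))
```

Routing on size first keeps oversized inputs from failing on a smaller window; everything else goes to the cheapest model that suits the task.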

9. FAQ

Q. Is Meta Muse Spark free?

Yes. It's available for free on meta.ai and the Meta AI app. The API is currently in partner preview, so general developers can't use it yet. Pricing hasn't been announced.

Q. What is Muse Spark's Contemplating mode?

Multiple AI agents solve the same problem simultaneously and pick the best answer. Think of it as several experts debating. It's a deep reasoning feature that competes with Google's Gemini Deep Think and OpenAI's GPT Pro.

Q. Which is better — GPT-5.4 or Gemini 3.1 Pro?

Both scored 57 on the overall benchmark, a tie. GPT-5.4 leads in coding, while Gemini has a slight edge in abstract reasoning and a far larger 2M-token context window. It depends on your use case.

Q. Why did Meta abandon open source?

Muse Spark is the first model from MSL, and Meta shifted strategy after the controversy around the Llama 4 launch. However, Meta has said that "future versions might be open-sourced," so this may not be a permanent departure.

Q. Can I use all three models at the same time?

Absolutely. Using Muse Spark (free) for everyday questions, GPT-5.4 for coding, and Gemini 3.1 Pro for large document analysis is the most efficient combination. $40/month covers it.

10. Wrap-up

Meta created a three-way race with Muse Spark. The "free" card hits hard. Performance still trails GPT-5.4 and Gemini, but the Contemplating mode and healthcare benchmarks showed real potential.

The conclusion is simple. If you don't want to spend money, Muse Spark. If coding is your job, GPT-5.4. If you deal with long documents, Gemini 3.1 Pro. Using all three is also a perfectly valid answer. No need to pick just one.

Sources

• Meta AI — Introducing Muse Spark

• Artificial Analysis — Muse Spark Performance

• Lushbinary — Muse Spark vs GPT-5.4 vs Claude vs Gemini

• LLM Stats — Muse Spark Pricing & Benchmarks


Benchmark scores and pricing in this article are as of April 10, 2026. They may change with model updates.

Benchmark sources: Artificial Analysis Intelligence Index v4.0, official announcements from each company.