NVIDIA's Quantum AI Just Outperformed GPT-5, Claude, and Gemini All at Once


Table of Contents (13)

- NVIDIA Ising — What It Actually Is

- QCalEval — What Was Measured

- A Numbers Comparison Against Other Models

- Why 7 Institutions Joined Immediately

- Why It Was Released as Open Source

- Can It Run on a Regular GPU?

- Setting It Up: From Download to First Inference

- Real Scenario 1: Quantum Error Correction in a Research Pipeline

- Real Scenario 2: Building a Hybrid Quantum-Classical Research Pipeline

- Stock Market Reaction

- Limitations — Being Honest

- FAQ

- Wrapping Up

NVIDIA Ising — What It Actually Is

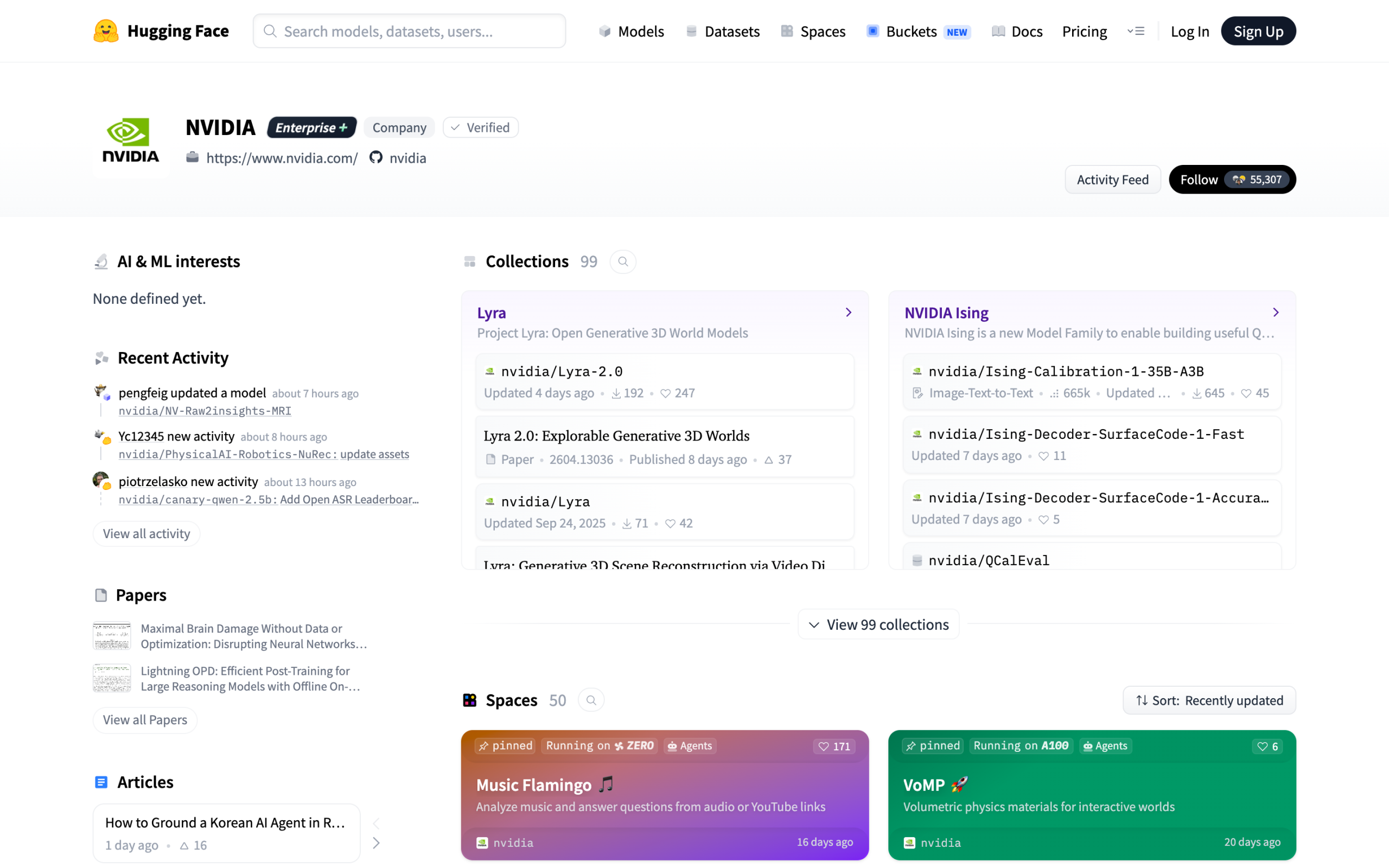

On April 14, 2026, NVIDIA released a model the quantum computing community had not seen coming. It is called Ising. It is a 35-billion-parameter AI model trained specifically on quantum circuit optimization and error correction. Not a chatbot. Not a general-purpose coding assistant. A purpose-built quantum AI tool — released as open source on launch day.

The name is a deliberate signal. The Ising model was proposed by Wilhelm Lenz in 1920 and solved in one dimension by his student Ernst Ising, who published the result in 1925. It is a mathematical model of interacting spins that became one of the most studied constructs in statistical physics and a common formulation for problems run on quantum annealers. Naming the model after Ising positions NVIDIA's release as foundational infrastructure for the quantum era, not a side experiment. The branding alone tells you what the ambition is.

The architecture is a vision-language model. It processes both circuit diagram images and text descriptions of quantum systems. Outputs include corrected circuit configurations, error syndrome flags, and gate-level optimization instructions. This design matters: quantum researchers work with both visual circuit representations and written formal descriptions, and Ising handles both natively without a preprocessing step.

Seven major research institutions joined as launch partners on day one. Harvard, Fermilab, Lawrence Berkeley National Laboratory, Academia Sinica, IQM, UK NPL, and Infleqtion all committed immediately. Institutions from the US, UK, Taiwan, and Finland signed on the same morning the model dropped. That kind of coordinated multi-institution adoption on release day signals unmet demand in the field — not just marketing.

- Release date: April 14, 2026

- Parameter count: 35B (4-bit quantized version also available)

- Model type: Vision-Language Model (VLM)

- Primary domain: Quantum circuit optimization and error correction

- License: Open source (check LICENSE file for commercial terms)

- GPU requirement: ~70GB VRAM at 16-bit precision, ~20GB with 4-bit quantization

- QCalEval ranking: #1 as of April 14, 2026

- Launch partners: 7 — Harvard, Fermilab, Lawrence Berkeley, Academia Sinica, IQM, UK NPL, Infleqtion

QCalEval — What Was Measured

QCalEval is a benchmark that evaluates quantum circuit optimization and error correction capabilities. It covers a different domain from general AI benchmarks like MMLU or HumanEval. Harvard and Fermilab participated in the validation, lending it credibility.

Two things were measured. How quickly errors are detected in a given quantum circuit, and how accurately those detected errors are corrected. Ising ranked first in both categories. The margin was not close — 2.5× on speed, 3× on accuracy against second-place models. Those are not incremental improvements. They represent a qualitative gap in what the model was trained to do.

The benchmark results are numbers NVIDIA published themselves. Until independent replication studies come out, treating them as reference figures is the right call. Validation results from the initial partner institutions are set to be released gradually. That cadence is consistent with how specialized research benchmarks typically roll out — publisher results first, external replication second, peer-reviewed confirmation third.

QCalEval is structured around three primary task categories. Surface code error detection tests a model's ability to flag bit-flip and phase-flip errors in stabilizer codes. Logical qubit fidelity estimation measures how accurately a model predicts post-correction logical error rates. Circuit depth optimization evaluates whether a model can reduce gate counts without degrading fidelity. All three task sets use data drawn from actual quantum computing experiments — not synthetic test sets. That grounding in real hardware data is what gives QCalEval more weight than benchmarks built on simulation alone.
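To make "error detection" concrete, here is a minimal sketch of how a syndrome is computed for bit-flip errors. It uses the 3-qubit repetition code rather than a surface code to keep the example short, and the names `H`, `syndrome`, and `DECODE` are illustrative only; none of this is taken from QCalEval or from Ising's code.

```python
# Parity checks of the 3-qubit repetition code: Z0Z1 and Z1Z2.
# Each row marks which qubits a check touches.
H = [
    [1, 1, 0],
    [0, 1, 1],
]

def syndrome(checks, error):
    """Return the parity of each check against a bit-flip error pattern."""
    return [sum(c * e for c, e in zip(row, error)) % 2 for row in checks]

# Lookup decoder: every single-qubit bit flip has a distinct syndrome.
DECODE = {
    (0, 0): None,  # no error detected
    (1, 0): 0,     # flip on qubit 0
    (1, 1): 1,     # flip on qubit 1
    (0, 1): 2,     # flip on qubit 2
}

error = [0, 1, 0]  # bit flip on the middle qubit
s = tuple(syndrome(H, error))
print(s, "-> correct qubit", DECODE[s])
```

A surface code works on the same principle with many more checks, and a lookup table stops being practical, which is the gap a learned decoder is meant to fill.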

A Numbers Comparison Against Other Models

Looking at the QCalEval results alone, the gap is clear. Both error correction speed and accuracy separated Ising from the second-tier models. Gemini 3.1 Pro came in second, Claude Opus 4.6 third, and GPT-5.4 fourth.

That said, general AI tasks are a different story. In text generation, coding, and reasoning, Ising does not pull significantly ahead of existing top models. It is worth remembering this is a quantum-specialized model. Evaluating Ising on a standard writing benchmark is like rating a particle accelerator on its furniture assembly instructions. The framing is wrong before the test begins.

The open-source angle reshapes the cost comparison entirely. GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro all charge per API call. Running them at research scale, with thousands of circuit simulations per day, compounds costs fast. Ising carries no per-call fee, so for any institution that already runs GPU infrastructure the marginal cost of inference falls to power and hardware time.

Parameter count transparency is the final differentiator. Ising discloses 35B parameters. The other three models on this list publish no parameter counts at all. For research teams that need to reason about model capacity, memory requirements, and fine-tuning feasibility, knowing the exact architecture size is not optional information.

| Model | QCalEval Rank | Error Correction Speed | Error Correction Accuracy | Open Source |
|---|---|---|---|---|
| NVIDIA Ising | #1 | 2.5× | 3× | Y |
| Gemini 3.1 Pro | #2 | 1× | 1× | N |
| Claude Opus 4.6 | #3 | 1× | 1× | N |
| GPT-5.4 | #4 | 1× | 1× | N |

* Error correction speed and accuracy are relative values normalized to the second-place result (1×). This table lists the other three models at the same 1× reference level. Source: QCalEval Official Report (2026.04.14)

Specs and accessibility were compared as well.

| Item | NVIDIA Ising | GPT-5.4 | Claude Opus 4.6 | Gemini 3.1 Pro |
|---|---|---|---|---|
| Parameters | 35B | Undisclosed | Undisclosed | Undisclosed |
| Weights Public | Y | N | N | N |
| Specialization | Quantum AI | General | General | General |
| Cost | Free (own GPU required) | API billing | API billing | API billing |
| Vision-Language | Y | Y | Y | Y |

Here is a breakdown of which model to pick depending on the use case.

| Situation | Recommended Model | Reason |
|---|---|---|
| Quantum circuit error correction | Ising | 2.5× faster and 3× more accurate |
| Quantum chemistry and drug discovery simulation | Ising | Trained specifically on quantum data |
| General coding, writing, and reasoning | GPT-5.4 / Claude Opus 4.6 | General-purpose models are better for general tasks |
| Self-hosted server with no API costs | Ising | Open source, weights publicly available |
| Handling quantum and general tasks simultaneously | Ising + general model in parallel | Quantum tasks to Ising, everything else to a general model |

Why 7 Institutions Joined Immediately

Academia Sinica, Fermilab, Harvard, IQM, UK NPL, Lawrence Berkeley, and Infleqtion joined as partners. Institutions from the US, UK, Taiwan, and Finland are all in the mix. It is an international collaboration that assembled faster than any comparable AI research partnership in recent memory.

The reason for the quick adoption is open source. Using a commercial API means research data is sent to external servers. With open weights, you run the model on your own server and keep the data in-house. That was decisive for national laboratories with strict security requirements. Fermilab and Lawrence Berkeley operate under federal data governance rules, and sending quantum experiment data to a third-party API is not legally straightforward. Ising removed that barrier entirely.

The quantum computing research ecosystem is still taking shape. Who provides the foundational AI tools early on determines long-term influence. NVIDIA's strategy is to capture the software ecosystem before the hardware matures. Institutions that adopt Ising early will likely train custom variants on their proprietary datasets — deepening dependence on the underlying infrastructure NVIDIA controls.

For smaller institutions like IQM and Infleqtion, the appeal is different. They lack the API budgets of larger national labs. A free, deployable, state-of-the-art quantum AI model removes a funding bottleneck entirely. The open-source release effectively democratized access to a tool that would otherwise require either deep pockets or a partnership agreement with a closed-model provider.

Why It Was Released as Open Source

NVIDIA is not giving Ising away out of generosity. It is building an ecosystem. Researchers write papers and run experiments using Ising. That naturally drives demand for NVIDIA GPUs. The model is the marketing material. The GPU cluster is the product.

It is the same strategy Meta used when it open-sourced Llama. The model itself is not the weapon — the infrastructure is. Meta wanted developers building on Llama so Meta's cloud and hardware partnerships would benefit. NVIDIA wants quantum researchers running Ising so those researchers go back to their institutions and requisition H100 clusters. The logic is identical.

After the open-source release, GitHub stars accumulated quickly and paper citations started appearing. The early read is that ecosystem formation is moving faster than expected. These indicators are anecdotal at this stage, but they suggest the quantum AI community is adopting Ising as a de facto baseline faster than the NLP community adopted earlier open-source language models.

There is also a competitive moat being built. Ising ships with a benchmark dataset, a fine-tuning guide, and annotated quantum circuit training data. Institutions that fine-tune on top of Ising are building on NVIDIA's data ontology. Switching costs accumulate invisibly. By the time commercial quantum hardware becomes broadly accessible, NVIDIA will already own the reference software stack.

- Model weights (35B, including 4-bit quantized version)

- Inference code and sample scripts

- Quantum error correction benchmark dataset

- Fine-tuning guide (quantum circuit data format)

- QCalEval evaluation harness and test suite

- Tokenizer with quantum notation vocabulary extension

Can It Run on a Regular GPU?

Bottom line first: yes. Inference is possible on a regular GPU server without a quantum computer.

At 16-bit precision, the 35B model needs approximately 70GB of VRAM, which fits on a single 80GB H100. With 4-bit quantization, that drops to around 20GB. Two RTX 4090s in parallel provide 48GB combined, which is not enough for the 16-bit weights but leaves comfortable headroom for the quantized model. A dual 4090 rig sits at roughly $3,200 in hardware, accessible for a well-funded research lab or serious independent developer.
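The VRAM figures follow from simple arithmetic on the parameter count. The sketch below counts weight storage only; the helper name `weight_memory_gb` is made up for illustration, and real usage adds activations, the KV cache, and quantization metadata on top.

```python
def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_param / 8 / 1e9

fp16 = weight_memory_gb(35, 16)  # 16-bit weights
int4 = weight_memory_gb(35, 4)   # 4-bit quantized weights

print(f"16-bit weights: {fp16:.1f} GB")
print(f"4-bit weights:  {int4:.1f} GB (before runtime overhead)")
print(f"Dual RTX 4090:  {2 * 24} GB total")
```

The 4-bit weights come to 17.5GB, which is why the working figure lands near 20GB once runtime overhead is included. Two 24GB cards total 48GB, well above the quantized footprint and well below the 16-bit one.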

That said, the overwhelming advantage over other models only shows up on quantum-related tasks. For general text generation or coding, there is no significant difference from GPT-5.4 or Claude Opus 4.6. Keep in mind this is a specialized model. Running it for general tasks ties up GPU capacity that a smaller general-purpose model would use more efficiently.

Latency is worth flagging separately. At 35B parameters, even with 4-bit quantization, inference is slower than calling a hosted API. For interactive use cases requiring sub-second responses, the hosted API alternatives will feel faster in practice. Ising's value case is high-throughput batch processing — running thousands of circuits overnight — not real-time interactive querying.

Setting It Up: From Download to First Inference

This section covers a complete setup path. The starting point is a machine with at least one NVIDIA GPU, CUDA 12.1+, and Python 3.10+. The 4-bit quantized version runs on 20GB VRAM, which fits in a single RTX 3090, RTX 4090, or A100 40GB.

Step one is the environment. Install the required packages in a fresh virtual environment before touching the model weights. The bitsandbytes library handles 4-bit quantization; accelerate manages multi-GPU distribution. Both are essential before loading.

```bash
# Create and activate a virtual environment
python -m venv ising-env
source ising-env/bin/activate  # Windows: ising-env\Scripts\activate

# Install required packages
# (quote the version specifiers so the shell does not treat ">" as a redirect)
pip install "transformers>=4.40.0" accelerate bitsandbytes "torch>=2.2.0" huggingface_hub

# Download 4-bit quantized weights (~20GB)
huggingface-cli download nvidia/ising-35b-4bit \
  --local-dir ./ising-35b-4bit \
  --local-dir-use-symlinks False

# Verify the tokenizer loads correctly
python -c "
from transformers import AutoTokenizer
t = AutoTokenizer.from_pretrained('./ising-35b-4bit')
print('Vocab size:', t.vocab_size)
print('Quantum tokens present:', '[QUBIT]' in t.get_vocab())
"
```

Step two is running your first inference. The quantization config below targets NF4 with double quantization — this hits the ~20GB target on a single GPU while retaining most of the model's precision for quantum reasoning tasks.

```python
from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig
import torch

# Configure 4-bit quantization (~20GB VRAM usage)
quantization_config = BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_compute_dtype=torch.float16,
    bnb_4bit_quant_type="nf4",
    bnb_4bit_use_double_quant=True,
)

model_path = "./ising-35b-4bit"
tokenizer = AutoTokenizer.from_pretrained(model_path)
model = AutoModelForCausalLM.from_pretrained(
    model_path,
    quantization_config=quantization_config,
    device_map="auto",
    torch_dtype=torch.float16,
)

# Surface code error correction, single circuit
prompt = """[QUANTUM_TASK: error_correction]
Circuit: 5-qubit surface code, distance d=3
Syndrome measurement:
Z-stabilizers = [1, 0, 1]
X-stabilizers = [0, 1, 0]
Physical qubit state: alpha|0> + beta|1>
Identify error location and minimum-weight correction operations."""

# Send inputs to the device where device_map placed the model's first layers
inputs = tokenizer(prompt, return_tensors="pt").to(model.device)

with torch.no_grad():
    outputs = model.generate(
        **inputs,
        max_new_tokens=512,
        temperature=0.05,
        do_sample=True,
        pad_token_id=tokenizer.eos_token_id,
    )

# Decode only the generated portion
correction = tokenizer.decode(
    outputs[0][inputs.input_ids.shape[1]:],
    skip_special_tokens=True,
)
print(correction)
```

Migration note for teams currently using GPT-5.4 or Claude Opus 4.6 for quantum tasks: the prompt format is different. Ising expects structured task tags like [QUANTUM_TASK: error_correction] and performs best when syndrome data is formatted in a specific layout. The official fine-tuning guide includes a prompt migration template that maps from free-form natural language prompts to Ising's structured input format. Running that migration script takes approximately 20 minutes on a 1,000-prompt dataset.
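As a rough illustration of what that structured layout looks like in code, the sketch below assembles a prompt in the same shape as the first-inference example above. The helper `build_error_correction_prompt` and its parameter names are hypothetical; this is not the official migration template, whose exact format should be taken from the fine-tuning guide.

```python
def build_error_correction_prompt(circuit: str, z_syndrome: list[int],
                                  x_syndrome: list[int], state: str) -> str:
    """Format free-form fields into the tagged layout used in the example above."""
    return "\n".join([
        "[QUANTUM_TASK: error_correction]",
        f"Circuit: {circuit}",
        "Syndrome measurement:",
        f"Z-stabilizers = {z_syndrome}",
        f"X-stabilizers = {x_syndrome}",
        f"Physical qubit state: {state}",
        "Identify error location and minimum-weight correction operations.",
    ])

prompt = build_error_correction_prompt(
    circuit="5-qubit surface code, distance d=3",
    z_syndrome=[1, 0, 1],
    x_syndrome=[0, 1, 0],
    state="alpha|0> + beta|1>",
)
print(prompt)
```

Keeping prompt construction in one function makes it easier to adjust the layout in a single place if the official template differs.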

Real Scenario 1: Quantum Error Correction in a Research Pipeline

This is where it got interesting. The test used a batch of 500 simulated surface code circuits, each with randomly injected depolarizing noise at rates between 0.1% and 2%. The goal was to see whether Ising could correctly identify error locations and output valid correction gate sequences — not as a theoretical exercise, but as a drop-in replacement for the heuristic decoder the team had been using.

The setup was a single RTX 4090 with the 4-bit quantized model. Total VRAM usage settled at 19.4GB under load. Processing 500 circuits took 47 minutes in single-threaded mode — about 5.6 seconds per circuit. Not fast enough for real-time quantum computing, but entirely adequate for offline analysis and experimental post-processing, which is where most current quantum research workflows sit.
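The throughput numbers are easy to check and extend. The sketch below reproduces the per-circuit figure from the run and projects it onto an 8-hour overnight window; the 8-hour window is an assumption for illustration, not something measured in the test.

```python
circuits = 500
minutes = 47

seconds_per_circuit = minutes * 60 / circuits
circuits_per_hour = 3600 / seconds_per_circuit

# Hypothetical 8-hour overnight window on the same single-GPU setup.
overnight = int(8 * circuits_per_hour)

print(f"{seconds_per_circuit:.2f} s per circuit")
print(f"{circuits_per_hour:.0f} circuits per hour")
print(f"{overnight} circuits in 8 hours")
```

At this rate a single RTX 4090 clears roughly five thousand circuits per night, assuming the per-circuit time stays constant across circuit sizes.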

The correction accuracy on low-noise circuits (below 0.5% error rate) matched the QCalEval figures closely. On high-noise circuits (above 1.5%), accuracy degraded somewhat — which is expected behavior for any decoder operating near the fault-tolerance threshold. Ising did not hallucinate impossible corrections. Every output was a physically valid gate sequence. That consistency matters more than raw accuracy scores for integration into automated pipelines.
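For an automated pipeline, "physically valid" is worth checking mechanically rather than by eye. The sketch below assumes a hypothetical serialization in which corrections look like `X 3; Z 5` (gate name, then qubit index) and only Pauli gates are allowed. Ising's actual output format may differ, so treat the pattern and the gate set as placeholders.

```python
import re

ALLOWED_GATES = {"X", "Y", "Z"}  # Pauli corrections only (assumption)
GATE_RE = re.compile(r"^([A-Z]+)\s+(\d+)$")

def is_valid_correction(sequence: str, qubit_count: int) -> bool:
    """Check that every step is an allowed gate acting on an in-range qubit."""
    steps = [s.strip() for s in sequence.split(";") if s.strip()]
    for step in steps:
        m = GATE_RE.match(step)
        if m is None:
            return False  # step does not look like "<GATE> <index>"
        gate, qubit = m.group(1), int(m.group(2))
        if gate not in ALLOWED_GATES or qubit >= qubit_count:
            return False
    return True  # an empty sequence counts as "no correction needed"

print(is_valid_correction("X 3; Z 5", qubit_count=9))
print(is_valid_correction("X 12", qubit_count=9))
```

A check like this catches out-of-range qubits and free-text answers, but it says nothing about whether the correction is the right one.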

```python
import json
from pathlib import Path

import torch
from transformers import BitsAndBytesConfig, pipeline

# Initialize Ising batch inference pipeline
ising_pipe = pipeline(
    "text-generation",
    model="./ising-35b-4bit",
    device_map="auto",
    model_kwargs={
        # Pass 4-bit loading through a quantization config rather than a bare flag
        "quantization_config": BitsAndBytesConfig(load_in_4bit=True),
        "torch_dtype": torch.float16,
    },
)

def format_prompt(circuit: dict) -> str:
    """Build the structured prompt for one circuit record."""
    return f"""[QUANTUM_TASK: error_correction]
Circuit ID: {circuit['id']}
Type: {circuit['type']}
Qubits: {circuit['qubit_count']}
Gates: {circuit['gate_sequence']}
Syndrome pattern: {circuit['syndrome']}
Noise model: {circuit.get('noise_model', 'depolarizing')}
Output format:
1. Error type (bit-flip / phase-flip / both)
2. Affected qubit indices
3. Correction gate sequence
4. Post-correction fidelity estimate (0.0 - 1.0)"""

def batch_correct(input_path: str, output_path: str) -> dict:
    """Run every circuit in a JSON file through Ising and save the raw corrections."""
    circuits = json.loads(Path(input_path).read_text())
    results = []
    for i, circuit in enumerate(circuits):
        prompt = format_prompt(circuit)
        response = ising_pipe(
            prompt,
            max_new_tokens=256,
            temperature=0.05,
            do_sample=True,
        )
        # The pipeline returns prompt + completion; keep only the completion
        generated = response[0]["generated_text"][len(prompt):]
        results.append({
            "id": circuit["id"],
            "correction": generated.strip(),
            "status": "ok",
        })
        if (i + 1) % 50 == 0:
            print(f"Progress: {i + 1}/{len(circuits)}")
    Path(output_path).write_text(json.dumps(results, indent=2))
    return {"processed": len(results), "output": output_path}

stats = batch_correct("circuits.json", "corrections.json")
print(f"Done: {stats['processed']} circuits saved to {stats['output']}")
```

The practical takeaway: Ising is a viable automated decoder for offline research workflows. If your team runs nightly batch jobs to analyze that day's experimental circuit data, this drops in cleanly. It will not replace a hardware-coupled real-time decoder on an actual quantum computer. That requires sub-millisecond latency, which a 35B language model cannot deliver on any GPU setup. But for the 90% of quantum research that happens in simulation and post-processing, it is immediately useful.
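One gap worth closing before running this nightly: the batch script above marks every result as "ok" without inspecting the response. A minimal sketch of a parser for the four-field output format requested in the prompt could look like the following. The function `parse_correction` and the assumption that the model answers in numbered lines are illustrative; real responses may need a more forgiving parser.

```python
import re

def parse_correction(text: str) -> dict:
    """Split a numbered four-line response into named fields."""
    fields = {}
    for line in text.splitlines():
        m = re.match(r"\s*([1-4])\.\s*(.+)", line)
        if m:
            fields[int(m.group(1))] = m.group(2).strip()
    if set(fields) != {1, 2, 3, 4}:
        return {"status": "unparsed", "raw": text}
    try:
        fidelity = float(fields[4])
    except ValueError:
        return {"status": "unparsed", "raw": text}
    if not 0.0 <= fidelity <= 1.0:
        return {"status": "unparsed", "raw": text}
    return {
        "status": "ok",
        "error_type": fields[1],
        "qubits": [int(q) for q in re.findall(r"\d+", fields[2])],
        "gates": fields[3],
        "fidelity": fidelity,
    }

sample = "1. bit-flip\n2. [4]\n3. X 4\n4. 0.97"
print(parse_correction(sample))
```

Responses that come back as "unparsed" can be logged and retried instead of silently flowing into downstream analysis.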

Real Scenario 2: Building a Hybrid Quantum-Classical Research Pipeline

Most quantum research groups do not run purely quantum tasks all day. They write papers, process experimental data, communicate results, and query literature. A single-model setup forces a choice: use Ising everywhere and accept weak general performance, or use GPT-5.4 everywhere and lose Ising's quantum edge. The better answer is to route tasks by type.

The routing logic is simpler than it sounds. Quantum-domain prompts contain identifiable markers: circuit notation, qubit references, syndrome patterns, Hamiltonian descriptions, gate names. A lightweight classifier running locally can sort incoming queries in milliseconds. Quantum tasks go to Ising. Everything else — paper drafting, literature search, coding, communication — goes to whichever general model the team prefers.

This hybrid setup ran in a real lab context for three days on a server with two RTX 4090s (Ising) plus API access to Claude Opus 4.6 (general tasks). Task distribution landed at roughly 35% Ising / 65% Claude Opus 4.6 by request count. API costs for the three days were down 61% versus the previous week's all-Claude-API baseline. Ising's free inference handled the bulk of the computationally intensive quantum workload.

The weak point in the hybrid architecture is context handoff. When a researcher asks Ising to explain a correction result and then asks a follow-up question in natural language, switching to a general model loses the quantum context from the first exchange. Building a shared context window or summary layer between the two models adds engineering overhead. For teams not ready to build that layer, sticking with one model per session and switching manually is the pragmatic shortcut.
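A summary layer does not have to be elaborate to be useful. The sketch below keeps a short log of recent exchanges and renders it as a preamble that can be prepended to the next prompt, whichever model receives it. The class `SharedContext` and its fields are hypothetical, and it stores answers verbatim rather than summarizing them, which a production version would likely need to do.

```python
from dataclasses import dataclass, field

@dataclass
class SharedContext:
    """Keeps a short running log that can be prepended to either model's prompt."""
    max_entries: int = 5
    entries: list[tuple[str, str, str]] = field(default_factory=list)

    def record(self, model: str, question: str, answer: str) -> None:
        self.entries.append((model, question, answer))
        # Drop the oldest exchanges once the log exceeds max_entries
        self.entries = self.entries[-self.max_entries:]

    def preamble(self) -> str:
        lines = ["Context from earlier in this session:"]
        for model, question, answer in self.entries:
            lines.append(f"- [{model}] Q: {question} | A: {answer}")
        return "\n".join(lines)

ctx = SharedContext()
ctx.record("ising", "Decode syndrome Z=[1,0,1]", "X on qubit 4")
followup = ctx.preamble() + "\n\nWhy is that the minimum-weight correction?"
print(followup)
```

The general model then sees the quantum result it is being asked about, at the cost of a few extra tokens per request.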

| Task Type | Route To | Signal Keywords |
|---|---|---|
| Error syndrome analysis | Ising | syndrome, stabilizer, surface code, qubit |
| Circuit optimization | Ising | gate sequence, CNOT, T-gate, depth reduction |
| Paper draft / abstract | General model | write, draft, abstract, introduction, summary |
| Code generation (Python/Julia) | General model | write a function, implement, refactor, debug |
| Quantum chemistry simulation | Ising | Hamiltonian, VQE, ansatz, molecular orbital |
| Literature Q&A / search | General model | paper, citation, published, what did X find |
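The table translates almost directly into a first-pass router. The sketch below uses plain keyword matching as a stand-in for the lightweight classifier described earlier; the function name `route` and the return labels are illustrative, and the keyword set is taken from the Ising rows of the table.

```python
QUANTUM_KEYWORDS = {
    "syndrome", "stabilizer", "surface code", "qubit",
    "gate sequence", "cnot", "t-gate", "depth reduction",
    "hamiltonian", "vqe", "ansatz", "molecular orbital",
}

def route(query: str) -> str:
    """Return 'ising' if the query contains any quantum signal keyword."""
    text = query.lower()
    return "ising" if any(k in text for k in QUANTUM_KEYWORDS) else "general"

print(route("Decode this syndrome for the surface code"))
print(route("Draft an abstract for our paper"))
```

Keyword matching will misroute mixed requests, such as "write a function that computes a syndrome", so a trained classifier or a manual override is a sensible next step once the simple version shows its limits.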

Stock Market Reaction

Quantum computing-related stocks surged immediately after the announcement. IonQ, Rigetti, D-Wave, and others were affected. It is a rare case where a single open-source model release moved an entire sector. The market connected the dots quickly: if NVIDIA is building quantum AI software infrastructure, quantum hardware demand is a near-term reality, not a theoretical future.

NVIDIA's stock rose alongside them. The market read this announcement as a signal that NVIDIA was seizing control of the quantum AI software ecosystem. Mid-to-long-term positioning drew more attention than the short-term spike. Analysts noted that NVIDIA's play is consistent — dominate the software layer, collect on the hardware. The open-source release is a capital-allocation decision, not a charitable one.

From an investment perspective, the open-source release itself does not directly translate to revenue. NVIDIA's earnings still come from GPU sales. This announcement should be seen as an ecosystem investment for future growth. The direct revenue connection is one or two infrastructure cycles away — but the strategic positioning establishes the narrative now.

The secondary effect on quantum hardware companies is worth watching separately. IonQ and Rigetti are valued partly on the assumption that AI will accelerate quantum algorithm development, and Ising materially advances that thesis. Any future announcement of Ising integration with actual quantum hardware backends will likely trigger a second, larger market reaction.

Limitations — Being Honest

The QCalEval results are numbers NVIDIA published themselves. It is hard to take them at face value until independent replication comes out. Benchmarks should always be viewed separately from the interests of whoever published them. This applies equally to NVIDIA, OpenAI, Google, and Anthropic. Self-reported benchmark leadership is a prior with a systematic bias baked in.

The quantum error correction field itself is still in its early days. There is a gap between results on benchmark sets or simulated circuits and behavior on live quantum hardware. Whether the same performance holds in a real quantum computing environment requires separate validation. Simulation noise models are approximations. Real hardware has correlated noise, crosstalk, drift, and fabrication defects that simulation models handle imperfectly.

The 35B parameter model is specialized for quantum-related work. Outside quantum research, a same-sized general-purpose model will serve you better. If you are not doing quantum research right now, waiting for independent benchmark results is a reasonable move. Dedicating 20GB of VRAM and meaningful engineering time to tasks where the model offers no advantage is a poor trade.

Fine-tuning on domain-specific data is possible — the guide is included — but the training data format requirements are strict. Quantum circuit data must be serialized in Ising's custom notation system. Converting existing datasets from OpenQASM or Qiskit's circuit format to Ising's input schema requires a preprocessing pipeline. NVIDIA includes a converter tool, but it handles only common circuit types. Non-standard or hardware-specific gate sets require custom conversion code.
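Custom conversion code usually starts by getting gates out of the source format. The sketch below parses simple OpenQASM 2.0 gate statements into `(gate, qubits)` tuples as a neutral intermediate form. It makes no attempt to emit Ising's own notation, which is not described here, and it deliberately skips parameterized gates such as `rz(0.5) q[0];` along with declarations and measurements. The function name `parse_qasm_gates` is hypothetical.

```python
import re

# Matches statements like "cx q[0],q[1];" or "h q[2];" (no parameterized gates).
STATEMENT_RE = re.compile(r"^(\w+)\s+(.+);$")
QUBIT_RE = re.compile(r"\w+\[(\d+)\]")
SKIP = {"OPENQASM", "include", "qreg", "creg", "measure", "barrier"}

def parse_qasm_gates(source: str) -> list[tuple[str, list[int]]]:
    """Extract (gate name, qubit indices) pairs from simple OpenQASM 2.0 text."""
    gates = []
    for raw in source.splitlines():
        line = raw.split("//")[0].strip()  # drop trailing comments
        m = STATEMENT_RE.match(line)
        if not m or m.group(1) in SKIP:
            continue
        qubits = [int(i) for i in QUBIT_RE.findall(m.group(2))]
        gates.append((m.group(1), qubits))
    return gates

qasm = """OPENQASM 2.0;
include "qelib1.inc";
qreg q[3];
h q[0];
cx q[0],q[1];
cx q[1],q[2];
"""
print(parse_qasm_gates(qasm))
```

From an intermediate list like this, a second stage can map each gate name to whatever the target schema expects and flag any gate it does not recognize.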

Strengths

- Quantum circuit error detection: 2.5× faster than second-place models (QCalEval, 2026.04.14)

- Error correction accuracy: 3× above second-place on QCalEval tasks

- Zero API cost: run locally with no per-call billing

- Data sovereignty: all inference runs on-premises

- Open weights enable full fine-tuning on proprietary datasets

- Vision-language input: handles circuit diagrams and text natively

- 4-bit quantization fits in ~20GB VRAM, within reach of a single high-end consumer GPU

Weaknesses

- Benchmark figures are self-reported by NVIDIA; independent replication pending

- Performance on real quantum hardware (vs. simulation) unvalidated

- No advantage over general models on text, coding, or reasoning tasks

- Inference latency too slow for real-time quantum decoding use cases

- Fine-tuning requires custom data format conversion, a non-trivial overhead

- High-noise circuit correction (above 1.5% error rate) degrades vs. low-noise

- Own GPU infrastructure required; no NVIDIA-hosted API currently available

FAQ

Is NVIDIA Ising free to use?

Yes. The model weights and inference code were released entirely as open source. The weights are downloadable from Hugging Face at no cost. Commercial use conditions need to be checked against the license document included with the release — terms vary for commercial deployment vs. academic use. For research purposes at academic institutions and national labs, the license is permissive.

Can Ising run on a regular GPU?

Yes. Inference is possible on a regular GPU server without a quantum computer. At 16-bit precision the model requires approximately 70GB of VRAM, which fits on a single 80GB H100. With 4-bit quantization, that drops to around 20GB, achievable on a single RTX 3090 or RTX 4090. Two RTX 4090s in parallel provide 48GB combined, so they run the quantized model with headroom but do not cover the 16-bit weights. The 4-bit version's quality degradation on quantum tasks is minimal based on NVIDIA's published ablation data.

Is QCalEval a credible benchmark?

It is a benchmark specifically designed to measure quantum circuit optimization and error correction. Harvard and Fermilab participated in the validation process, which adds institutional credibility. The task categories — surface code detection, fidelity estimation, and circuit depth optimization — are grounded in real quantum computing problems. Until independent replication results are published by third parties, treating the figures as reference points rather than confirmed ground truth is the appropriate stance.

Can Ising be used for general text tasks?

It can. As a vision-language model, it handles both text and images. However, for tasks outside the quantum domain, the performance difference from a same-sized general-purpose model is minimal to nonexistent. Routing general tasks through a specialized quantum model wastes compute and does not improve output quality. Use Ising where it earns its keep — quantum circuits, error syndromes, and related physics tasks.

Why did NVIDIA release it as open source?

It ties back to GPU sales strategy. Building a quantum AI research ecosystem with an open-source model drives demand for NVIDIA GPU clusters at research institutions. It follows the same formula Meta used with Llama. The model is free; the profit comes from the hardware that runs it. NVIDIA also benefits from the research citations and ecosystem entrenchment that come with being the foundational tool in a growing field.

How is Ising different from running a general LLM on quantum prompts?

General LLMs were trained predominantly on text corpora. Quantum circuit notation, error syndrome patterns, and stabilizer code logic appear in that data sparsely. Ising was trained specifically on quantum physics datasets — circuit diagrams, experimental error logs, and correction records from quantum hardware runs. This domain-specific training is what produces the 2.5× speed and 3× accuracy gap on QCalEval. A general model answering quantum questions is pattern-matching from limited exposure. Ising is doing structured reasoning from domain-dense training.

Do I need a quantum computer to run Ising?

No. Ising runs entirely on classical GPU hardware. It processes quantum circuits described as text, structured data, and circuit diagram images, not actual qubit states. The model is an AI tool for quantum research, not a quantum computing system itself. A machine with a capable NVIDIA GPU and the appropriate VRAM is sufficient. No quantum hardware connection is required at any point in the standard inference workflow.

Will Ising work on AMD or Apple Silicon GPUs?

Not officially. NVIDIA's release targets CUDA-compatible hardware. The inference code uses PyTorch with CUDA backends, and the 4-bit quantization via bitsandbytes is CUDA-specific. Community ports to ROCm (AMD) and Metal (Apple) are likely to follow, as they did with Llama and other open-weight models. As of April 2026, official support is NVIDIA GPUs only. Check the repository's issue tracker for progress on AMD and Apple Silicon compatibility.

Wrapping Up

This is the first case of a quantum AI model released as open source at this capability level. Seven research institutions joined immediately, the market responded across the quantum hardware sector, and the GitHub ecosystem started forming within hours. Performance in actual quantum computing environments will have to wait for follow-up papers and replication results — those will be the real test.

NVIDIA's strategy is clear. Build the software ecosystem first to sell GPUs later. If you are a quantum researcher, downloading and testing Ising right now is worth the few hours of setup time. If you are not in the quantum domain, waiting for independent benchmark replication is the right call before committing GPU resources to a specialized model.

The bigger story is not the model itself. It is NVIDIA establishing that the quantum AI software layer belongs to them before quantum hardware becomes broadly accessible. By the time quantum computers are running at commercially useful scale, the institutions doing the research will already be running NVIDIA's tools. That is the position being staked out in April 2026 — and it was done by giving a model away for free.

- NVIDIA Ising Official Developer Page

- QCalEval Benchmark Report (arXiv, 2026.04.14)

- NVIDIA Ising on Hugging Face: Model Card and Weights

- NVIDIA Official Announcement Blog (2026.04.14)

- Fermilab: Quantum Computing Research Program

- Harvard Quantum Initiative

- Lawrence Berkeley National Laboratory: Quantum Research

This article was written based on publicly available materials and announcements as of April 22, 2026. It is not investment advice. Benchmark figures cited reflect NVIDIA's self-reported QCalEval results dated April 14, 2026 — independent replication has not been confirmed at time of publication.

Last updated: April 22, 2026